As usual, I am not answering any questions about licensing. That’s the job of your reseller or distributor, so ask them.

Microsoft released the updated licensing details for WS2012 R2 several weeks ago. Remember that once it is released, you will be buying WS2012 R2, even if you plan to downgrade to W2008 R2. In this post, I’m going to cover the licensing for the “core” editions of Windows Server.

The Core Editions

There aren’t any huge changes to the “core” editions of Windows Server (Datacenter and Standard). As with WS2012, the two editions are identical technically, having the same scalability and features … except one.

Processors

Both the Standard and Datacenter editions cover a licensed server for 2 processors. Processors are CPUs or sockets. Cores are not processors. A server with 2 Intel Xeon E5 processors with 10 cores each has 2 processors. It requires one Windows Server license. A server with four 16-core AMD processors has 4 processors. It needs 2 Windows Server licenses.

This applies no matter what downgraded version you plan to install.
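The processor rule above comes down to rounding up to the next pair of sockets. Here is a minimal sketch of that arithmetic; the function and constant names are my own, made up for illustration, and are not anything from Microsoft:

```python
import math

# Each Standard or Datacenter license covers up to 2 physical processors (sockets).
PROCESSORS_PER_LICENSE = 2


def licenses_for_processors(physical_processors: int) -> int:
    """Return the licenses needed to cover a server's sockets. Cores are ignored."""
    if physical_processors < 1:
        raise ValueError("a server needs at least one physical processor")
    return math.ceil(physical_processors / PROCESSORS_PER_LICENSE)


print(licenses_for_processors(2))  # the 2 x 10-core Xeon E5 server -> 1
print(licenses_for_processors(4))  # the 4 x 16-core AMD server -> 2
```

Note that the core count never appears as an input. Only sockets matter.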

Downgrade Rights

According to Microsoft:

If you have Windows Server 2012 R2 Datacenter edition you will have the right to downgrade software bits to any prior version or lower edition. If you have Windows Server 2012 R2 Standard edition, you will have the right to downgrade the software to use any prior version of Enterprise, Standard or Essentials editions.

The One Technical Feature That Is Unique To Datacenter Edition

Technically the Datacenter and Standard editions of WS2012 R2 are identical, with one exception, which is really due to the exceptional virtualization licensing rights granted with the Datacenter edition.

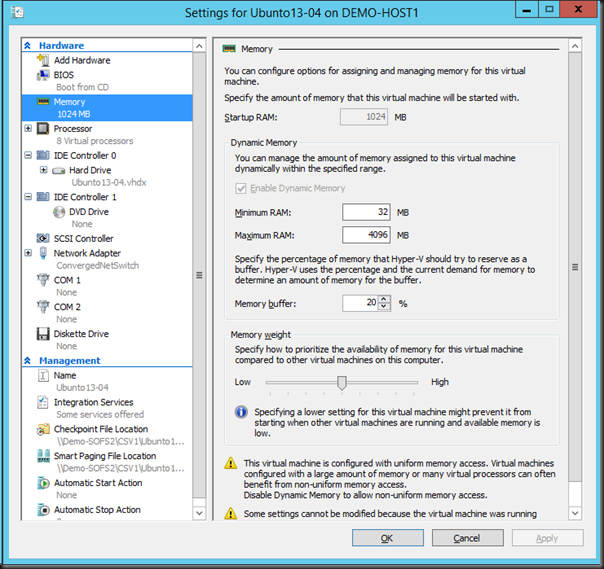

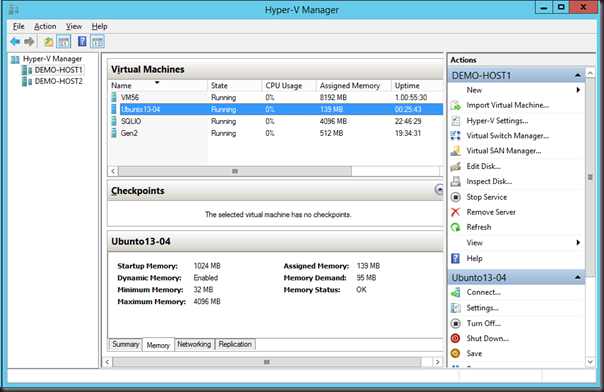

If you use the Datacenter edition of WS2012 R2 (via any licensing program) for the management OS of your Hyper-V hosts then you get a feature called Automated Virtual Machine Activation (AVMA). With this you get an AVMA key that you install into your template VMs (guest OS must be WS2012 R2 DC/Std/Essentials) using SLMGR. When that template is deployed onto the WS2012 R2 Datacenter hosts, the guest OS will automatically activate without using KMS or online activation. Very nice for multi-tenant or Network Virtualization-enabled clouds.

Virtualization Rights

Everything in this section applies to Windows Server licensing on all virtualization platforms on the planet outside of the SPLA (hosting) licensing program. The key difference between Std and DC is the virtualization rights. Any host licensed with DC gets unlimited VOSEs. A VOSE (Virtual Operating System Environment) is licensing speak for a guest OS. In other words:

- Say you license a host with the DC edition of Windows Server.

- You can install Windows Server (DC or Std) on an unlimited number of VMs that run on that host.

- You cannot transfer those VOSEs (licenses) to another host.

- You can transfer a volume license of DC (or Standard for that matter) once every 90 days to another host. The VOSEs move with that host.

The Standard edition comes with 2 VOSEs. That means you can install the Std edition of Windows Server in two VMs that run on a licensed host:

- Say you license a host with the Std edition of Windows Server.

- You can install Windows Server Standard on up to 2 VMs that run on that host.

- You cannot transfer those VOSEs (licenses) to another host.

- You can transfer a volume license of Standard (or DC for that matter) once every 90 days to another host. The VOSEs move with that host.

You can stack Windows Server Standard edition licenses to get more VOSEs on a host:

- Say you license a host with 3 copies of the Std edition of Windows Server. This is an accounting operation. You do not install Windows 3 times on the host. You do not install 3 license keys on the host.

- You can install Windows Server Standard on up to 6 (3 Std * 2 VOSEs) VMs that run on that host.

- You cannot transfer those VOSEs (licenses) to another host.

- You can transfer a volume license of Standard (or DC for that matter) once every 90 days to another host. The VOSEs move with that host.
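The stacking arithmetic in the lists above can be sketched as follows. This only counts VOSE entitlement and assumes a host with 1 or 2 processors; the helper names are hypothetical:

```python
import math

# Each Standard license assigned to a host grants 2 VOSEs.
VOSES_PER_STANDARD_LICENSE = 2


def voses_from_standard(licenses: int) -> int:
    """VOSEs granted by stacking this many Standard licenses on one host."""
    return licenses * VOSES_PER_STANDARD_LICENSE


def standard_licenses_for_vms(vm_count: int) -> int:
    """Standard licenses needed so that vm_count Windows Server VMs are covered."""
    if vm_count < 0:
        raise ValueError("vm_count cannot be negative")
    return math.ceil(vm_count / VOSES_PER_STANDARD_LICENSE)


print(voses_from_standard(3))        # 3 Std licenses -> 6 VOSEs
print(standard_licenses_for_vms(5))  # 5 VMs round up to 3 licenses
```

An odd number of VMs always rounds up, so the fifth VM costs a whole extra license.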

There is a sweet spot (different for every program/region/price band) where it is cheaper to switch from Std licensing to DC licensing for each host.

If you need HA or Live Migration then you license all hosts for the maximum number of VMs that can (not will) run on each host, even for 1 second. The simplest solution is to license each host for the DC edition.

Upgrade Scenarios

WS2012 CALs do not need an upgrade. WS2012 server licenses require one of the following to be upgraded:

- Software Assurance (SA)

- A new purchase

In my opinion anyone using virtualization is a dummy for not buying SA on their Windows Server licensing. If you plan on availing of new Hyper-V features (assuming you are using Hyper-V) or you want to install even 1 newer version of Windows Server, then you need to buy the licenses all over again … SA would have been cheaper, and remember that upgrades are just one of the rights included in SA.

Pricing

This is what everyone wants to know about! The $US Open NL (the most expensive volume license) pricing is shown, as it’s the most commonly used example:

The Standard edition went up a small amount from W2008 R2 to WS2012. It has not increased with WS2012 R2.

The Datacenter edition did not increase from W2008 R2 to WS2012. It has increased with the release of WS2012 R2. However, think of how much you’re getting with the DC edition: unlimited VOSEs!

Reminder: There is no difference in Windows Server pricing no matter what virtualization you use. The price of Windows Server on a Hyper-V host is the same as it is on a VMware host. Please send me the company name/address of your employer or customers if you disagree – I’d love an easy $10,000 for reporting software piracy.

Calculating License Requirements

Do the following on a per-server basis. This applies whether you are using virtualization or not, and no matter what virtualization you plan to use.

Step 1: Count your physical processors

If you have 1 or 2 physical processors in a server then your server needs 1 copy of Windows Server. If your server will have 4 processors then you need 2 copies of Windows Server. If your server will have 8 processors then you will need 4 copies of Windows Server.

Step 2: Count your virtual machines

How many virtual machines running Windows Server could possibly run on the host? This includes VMs that normally run on another host, but could be moved (Quick Migration, Live Migration, vMotion) manually or automatically, or failed over due to cluster high availability (HA).

Say you have 2 hosts in a cluster. Each normally runs 2 VMs but could run 4 VMs, so you need to license each host for 4 VMs. A copy of Windows Server Standard gives you 2 VOSEs. Each host will need 4 VOSEs because 4 VMs could run on each host. Therefore you need 2 copies of Standard per host.
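The cluster example can be sketched like this. It assumes the worst case, where every VM in the cluster could end up on a single host, and it assumes hosts with 1 or 2 processors. The function names are hypothetical:

```python
import math


def peak_vms_per_host(normal_vms_per_host: list[int]) -> int:
    """Assumed worst case: any host may end up running every VM in the cluster."""
    return sum(normal_vms_per_host)


def standard_licenses_per_host(normal_vms_per_host: list[int]) -> int:
    """Standard licenses each host needs to cover its peak VM count (2 VOSEs each)."""
    return math.ceil(peak_vms_per_host(normal_vms_per_host) / 2)


cluster = [2, 2]  # 2 hosts, each normally running 2 VMs
print(peak_vms_per_host(cluster))           # 4 VMs could run on either host
print(standard_licenses_per_host(cluster))  # 2 copies of Standard per host
```

If your cluster design caps how many VMs can land on one host, use that cap as the peak instead of the cluster total.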

When is the sweet spot? That depends on your pricing. Datacenter costs $6,155 and Standard costs $882 under US Open NL. $6,155 / $882 ≈ 6.98, so 7 copies of Windows Std cost roughly the same as 1 copy of Windows DC. Each copy of Std gives you 2 VOSEs, so the sweet spot for switching is 14 VMs per host. Once you get close to 14 VMs that could run on a host, you would be better off economically by buying the DC edition.
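To find the sweet spot for your own price list, something like the following sketch would do. It uses the US Open NL prices quoted above and assumes a host with 1 or 2 processors; swap in your own prices. The names are made up for illustration:

```python
import math

# US Open NL prices quoted in this post; replace with your own price list.
DATACENTER_PRICE = 6155
STANDARD_PRICE = 882


def standard_cost(vm_count: int) -> int:
    """Cost of stacking enough Standard licenses (2 VOSEs each) for vm_count VMs."""
    return math.ceil(vm_count / 2) * STANDARD_PRICE


def cheaper_edition(vm_count: int) -> str:
    """Return which edition costs less for a host that could run vm_count VMs."""
    return "Datacenter" if DATACENTER_PRICE <= standard_cost(vm_count) else "Standard"


for vms in (10, 12, 13, 14):
    print(vms, standard_cost(vms), cheaper_edition(vms))
```

With these prices the switch happens at the seventh Standard license, which is the one you need for the 13th and 14th VMs.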