Read the notes from the session recording (original here) on Windows Server 2016 (WS2016) Storage Spaces Direct (S2D) and hyper-converged infrastructure, which was one of my most anticipated sessions of Microsoft Ignite 2016. The presenters were:

- Claus Joergensen, Program Manager

- Cosmos Darwin, Program Manager

Definition

Cosmos starts the session.

Storage Spaces Direct (S2D) is software-defined, shared-nothing storage.

- Software-defined: Use industry standard hardware (not proprietary, like in a SAN) to build lower cost alternative storage. Lower cost doesn’t mean lower performance … as you’ll see

- Shared-nothing: The servers use internal disks, not shared disk trays. HA and scale are achieved by pooling disks and replicating “blocks”.

Deployment

There’s a bunch of animated slides.

- 3 servers, each with internal disks, a mix of flash and HDD. The servers are connected over Ethernet (10 GbE or faster, RDMA)

- Runs some PowerShell to query the disks on a server. The server has 4 x SATA HDD and 2 x SATA SSD. Yes, SATA. SATA is more affordable than SAS. S2D uses a virtual SAS bus over the disks to deal with SATA issues.

- They form a cluster from the 3 servers. That creates a single “pool” of nodes – a cluster.

- Now the magic starts. They will create a software-defined pool of virtually shared disks, using Enable-ClusterStorageSpacesDirect. That cmdlet does some smart work for us, identifying caching devices and capacity devices – more on this later.

- Now they can create a series of virtual disks, each of which will be formatted with ReFS and mounted by the cluster as CSVs – shared storage volumes. This is done with one cmdlet, New-Volume, which is doing all the lifting. Very cool!

There are two ways we can now use this cluster:

- We expose the CSVs using file shares to another set of servers, such as Hyper-V hosts, and those servers store data, such as virtual machine files, using SMB 3 networking.

- We don’t use any SMB 3 or file shares. Instead, we enable Hyper-V on all the S2D nodes, and run compute and storage across the cluster. This is hyper-converged infrastructure (HCI)

A new announcement. A 3rd scenario is SQL Server 2016 (supported). You install SQL Server 2016 on each node, and store database/log files on the CSVs (no SMB 3 file shares).

Scale-Out

So your S2D cluster was fine, but now your needs have grown and you need to scale out your storage/compute? It’s easy. Add another node (with internal storage) to the cluster. In moments, S2D will claim the new data disks. Data will be re-balanced over time across the disks in all the nodes.

Time to Deploy?

Once you have the servers racked/cabled, OS installed, and networking configured, you’re looking at under 15 minutes to get S2D configured and ready. You can automate a lot of the steps in SCVMM 2016.

Cluster Sizing

The minimum number of required nodes is an “it depends”.

- Ideally you have a 4-node cluster. This offers HA, even during maintenance, and supports the most interesting form of data resilience that includes 3-way mirroring.

- You could do a 3 node cluster, but that’s limited to 2-way mirroring.

- And now, as of Ignite, you can do a 2-node cluster.

Scalability:

- 2-16 nodes in a single cluster – add nodes to scale out.

- Over 3 PB of raw storage per cluster – add drives to nodes to scale up (JBODs are supported).

- The bigger the cluster gets, the better it will perform, depending on your network.

The procurement process is easy: add servers/disks

Performance

Claus takes over the presentation.

1,000,000 IOPS

Earlier in the week (I blogged this in the WS2016 and SysCtr 2016 session), Claus showed some crazy numbers for a larger cluster. He’s using a more “normal” 4-node (Dell R730xd) cluster in this demo. There are 4 CSVs. Each node has 4 NVMe flash devices and a bunch of HDDs. There are 80 VMs running on the HCI cluster. They’re using an open source stress test tool called VMFleet. The cluster is doing just over 1 million IOPS: over 925,000 read and 80,000 write. That’s 4 x 2U servers … not a rack of Dell Compellent SAN!
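To put the demo numbers in perspective, here is a quick back-of-the-envelope breakdown. It is only a sketch: the read figure was quoted as "over 925,000", so the per-node and per-VM results are approximations, and the variable names are mine.

```python
# Figures quoted in the demo (the read number is a lower bound).
read_iops = 925_000
write_iops = 80_000
nodes = 4
vms = 80

total_iops = read_iops + write_iops
iops_per_node = total_iops / nodes
iops_per_vm = total_iops / vms
read_share = read_iops / total_iops

print(f"Total:      {total_iops:,} IOPS")
print(f"Per node:   {iops_per_node:,.0f} IOPS")
print(f"Per VM:     {iops_per_vm:,.1f} IOPS")
print(f"Read share: {read_share:.0%}")
```

In other words, each 2U server is serving roughly a quarter of a million IOPS, and the workload is heavily read-biased.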

Disk Tiering

You can do:

You must have some flash storage. That’s because HDD is slow at seek/read. “Spinning rust” (7200 RPM) can only do about 75 random IOs per second (IOPS). That’s pretty pathetic.

Flash gives us a built-in, always-on cache. One or more caching devices (flash disks) are selected by S2D. Caching devices are not pooled. The other disks, capacity devices, are used to store data, and are pooled and dynamically (not statically) bound to a caching device. All writes up to 256 KB and all reads up to 64 KB are cached – random IO is intercepted, and later sent to capacity devices as optimized IO.

Note the dynamic binding of capacity devices to caching devices. If a server has more than one caching device, and one fails, the capacity devices of the failed caching device are dynamically re-bound.

Caching devices are deliberately not pooled – this allows their caching capability to be used by any pool/volume in the cluster – the flash storage can be used where it is needed.

The result (in Microsoft’s internal testing) was that they hit 600+ IOPS per HDD …. that’s how perfmon perceived it … in reality the caching devices were greatly boosting the performance of “spinning rust”.
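A rough way to see what the cache buys you is to compare the two per-HDD figures above. This is a simplified sketch: it treats "600+" as exactly 600, ignores everything except raw IOPS, and the 1,000,000 IOPS target is just borrowed from the earlier demo for illustration.

```python
import math

raw_hdd_iops = 75        # uncached 7200 RPM HDD, random IO
cached_hdd_iops = 600    # perceived per-HDD IOPS with the flash cache (lower bound)
target_iops = 1_000_000  # illustrative target, borrowed from the earlier demo

speedup = cached_hdd_iops / raw_hdd_iops
hdds_without_cache = math.ceil(target_iops / raw_hdd_iops)
hdds_with_cache = math.ceil(target_iops / cached_hdd_iops)

print(f"Perceived speed-up per HDD: {speedup:.0f}x")
print(f"HDDs needed without cache:  {hdds_without_cache:,}")
print(f"HDDs needed with cache:     {hdds_with_cache:,}")
```

The exact drive counts are not the point; the 8x multiplier per spindle is.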

NVMe

WS2016 S2D supports NVMe. This is a PCIe bus-connected form of very fast flash storage, that is many times faster than SAS HBA-connected SSD.

Comparing costs per drive/GB using retail pricing on NewEgg (a USA retail site):

Comparing performance, not price:

If we look at the cost per IOP, NVMe becomes a very affordable acceleration device:

Some CPU assist is required to move data to/from storage. Comparing SSD and NVMe, NVMe leaves more CPU available for Hyper-V or SQL Server.

The highest IOPS number that Microsoft has hit, so far, is over 6,000,000 read IOPS from a single cluster, which they showed earlier in the week.

1 Tb/s Throughput (New Record)

IOPS are great. But IOPS is much like horsepower in a car; what we care more about is miles/KMs per hour, or the amount of data we can actually push in a second. Microsoft recently hit 1 terabit per second. The cluster:

- 12 nodes

- All Micron NVMe

- 100 GbE Mellanox RDMA network adapters

- 336 VMs, stress tested by VMFleet.

Thanks to RDMA and NVMe, the CPU consumption was only 24-27%.

1 terabit per second. Wikipedia (English) is 11.5 GB. They can move English Wikipedia 14 times per second.
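A quick sanity check of the Wikipedia comparison, assuming decimal units (1 Tb = 10^12 bits, 1 GB = 10^9 bytes) and a throughput of exactly 1 Tb/s. Both are my assumptions, not figures from the session.

```python
throughput_bits_per_s = 1e12  # 1 terabit per second (assumed exact)
wikipedia_gb = 11.5           # size of English Wikipedia quoted in the session
quoted_copies_per_s = 14      # figure quoted in the session

gb_per_second = throughput_bits_per_s / 8 / 1e9
copies_per_second = gb_per_second / wikipedia_gb

# Throughput that the quoted 14 copies per second would imply.
implied_tbps = quoted_copies_per_s * wikipedia_gb * 8 / 1000

print(f"1 Tb/s = {gb_per_second:.0f} GB/s")
print(f"Copies of Wikipedia per second at 1 Tb/s: {copies_per_second:.1f}")
print(f"Throughput implied by 14 copies/s: {implied_tbps:.2f} Tb/s")
```

At exactly 1 Tb/s this works out to about 11 copies per second. The quoted 14 per second would correspond to roughly 1.29 Tb/s, so the measured peak may have been higher than the headline figure, or a different size was used in the comparison.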

Fault Tolerance

Soooo, S2D is cheaper storage, but the performance is crazy good. Maybe there’s something wrong with fault tolerance? Think again!

Cosmos is back.

Failures are not a failure mode – they’re a critical design point. Failures happen, so Microsoft wants to make them easy to deal with.

Drive Fault Tolerance

- You can survive up to 2 simultaneous drive failures. That’s because each chunk of data is stored on 3 drives. Your data stays safe and continuously (better than highly) available.

- There is automatic and immediate repair (self-healing: parallelized restore, which is faster than classic RAID restore).

- Drive replacement is a single-step process.

Demo:

- 3 node cluster, with 42 drives, 3 CSVs.

- 1 drive is pulled, and it shows a “Lost Communication” status.

- The 3 CSVs now have a Warning health status – remember that each virtual disk (LUN) consumes space from each physical disk in the pool.

- Runs: Get-StorageSubSystem Cluster* | Debug-StorageSubSystem …. this cmdlet for S2D does a complete cluster health check. The fault is found, devices identified (including disk & server serial), fault explained, and a recommendation is made. We never had this simple debug tool in WS2012 R2.

- Runs: $Volumes | Debug-Volume … returns health info on the CSVs, and indicates that drive resiliency is reduced. It notes that a restore will happen automatically.

- The drive is automatically marked as retired.

- S2D (Get-StorageJob) starts a repair automatically – this is a parallelized restore writing across many drives, instead of just to 1 replacement/hot-spare drive.

- A new drive is inserted into the cluster. In WS2012 R2 we had to do some manual steps. But in WS2016 S2D, the disk is added automatically. We can audit this by looking at jobs.

- A rebalance job will automatically happen, to balance data placement across the physical drives.

So what are the manual steps you need to do to replace a failed drive?

- Pull the old drive

- Install a new drive

S2D does everything else automatically.

Server Fault Tolerance

- You can survive up to 2 node failures (4+ node cluster).

- Copies of data are stored in different servers, not just different drives.

- Able to accommodate servicing and maintenance – because data is spread across the nodes. So not a problem if you pause/drain a node to do planned maintenance.

- Data resyncs automatically after a node has been paused/restarted.

Think of a server as a super drive.

Chassis & Rack Fault Tolerance

Time to start thinking about fault domains, like Azure does.

You can spread your S2D cluster across multiple racks or blade chassis. This is to create the concept of fault domains – different parts of the cluster depend on different network uplinks and power circuits.

You can tag a server as being in a particular rack or blade chassis. S2D will respect these boundaries for data placement, therefore for disk/server fault tolerance.
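The idea of respecting fault-domain boundaries can be illustrated with a toy placement function. This is a minimal sketch and not how S2D actually places data: the server names, rack tags, and the `place_copies` helper are all hypothetical.

```python
from collections import defaultdict

def place_copies(servers, copies=3):
    """Pick one server from each of `copies` distinct fault domains (toy logic)."""
    by_domain = defaultdict(list)
    for server, domain in servers:
        by_domain[domain].append(server)
    if len(by_domain) < copies:
        raise ValueError(
            f"need {copies} fault domains, only have {len(by_domain)}"
        )
    # Take the first server in each of the first `copies` domains (sorted by name).
    return [(domain, by_domain[domain][0]) for domain in sorted(by_domain)[:copies]]

# Hypothetical cluster: six servers tagged into three racks.
servers = [
    ("node1", "rack-A"), ("node2", "rack-A"),
    ("node3", "rack-B"), ("node4", "rack-B"),
    ("node5", "rack-C"), ("node6", "rack-C"),
]

placement = place_copies(servers)
print(placement)
```

Because every copy lands in a different rack, losing a whole rack (its power circuit or uplink) still leaves two copies of the data online.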

Efficiency

Claus is back on stage.

Mirroring is Costly

Everything so far about fault tolerance in the presentation has been about 3-copy mirror. And mirroring is expensive – this is why we encounter so many awful virtualization deployments on RAID5. So if 2-copy mirror (like RAID 10) gives us half of the raw storage as usable storage, and 3-way mirroring gives us only 1/3, this is too expensive.

2-way and 3-way mirroring give us the best performance, but parity/erasure coding/RAID5 give us the best usable storage percentage. We want performance, but we want affordability too.

We can do erasure coding with 4 nodes in an S2D cluster, but there is a performance hit.

Issues with erasure coding (parity or RAID 5):

- To rebuild from one failure, you have to read every column (all the disks), which ties up valuable IOPS.

- Every write incurs an update of the erasure coding, which ties up valuable CPU. Actively written data means calculating the encoding over and over again. This easily doubles the computational work involved in every write!

Local Reconstruction Codes

A product of Microsoft Research. It enables much faster recovery of a single drive by grouping bits. They XOR the groups and restore only the required bits instead of an entire stripe. It reduces the number of devices that you need to touch to do a restore of a disk when using parity/erasure coding. This is used in Azure and in S2D.
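The grouping idea can be shown with plain XOR parity. This is a heavily simplified illustration of local parity groups, not Microsoft's actual LRC implementation (which also keeps global parities); the block contents and group size are made up.

```python
from functools import reduce

def xor_bytes(blocks):
    """XOR a list of equal-length byte strings together, column by column."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))

# Six data blocks, split into two local groups of three.
data = [b"AAAA", b"BBBB", b"CCCC", b"DDDD", b"EEEE", b"FFFF"]
groups = [data[0:3], data[3:6]]
local_parity = [xor_bytes(group) for group in groups]

# Simulate losing data[1]: rebuild it from its own group only.
survivors = [data[0], data[2]]
rebuilt = xor_bytes(survivors + [local_parity[0]])
reads_local = len(survivors) + 1   # 2 surviving blocks + 1 local parity

# With a single parity over the whole stripe, every other block must be read.
reads_global = (len(data) - 1) + 1  # 5 surviving blocks + 1 stripe parity

print(rebuilt, reads_local, reads_global)
```

Rebuilding from the local group touches 3 devices instead of 6, which is the whole point: fewer reads per repair means fewer IOPS stolen from the workload.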

This allows Microsoft to use erasure coding on SSD, as do many HCI vendors, but also on HDDs.

The below depicts the levels of efficiency you can get with erasure coding – note that you need 4 nodes minimum for erasure coding. The more nodes that you have, the better the efficiencies.

Accelerated Erasure Coding

S2D optimizes the read-modify-write nature of erasure coding. A virtual disk (a LUN) can combine mirroring and erasure coding!

- Mirror: hot data with fast write

- Erasure coding: cold data – fewer parity calculations

The tiering is real time, not scheduled like in normal Storage Spaces. And ReFS metadata handling optimizes things too – you should use ReFS on the data volumes in S2D!

Think about it. A VM sends a write to the virtual disk. The write is done to the mirror and acknowledged. The VM is happy and moves on. Underneath, S2D is continuing to handle the persistently stored updates. When the mirror tier fills, the aged data is pushed down to the erasure coding tier, where parity is done … but the VM isn’t affected because it has already committed the write and has moved on.
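The write path described above can be mimicked with a toy model. This is purely illustrative: the `HybridVolume` class, its slot-based capacity, and the explicit `destage` call are my own simplifications, and the model ignores back-pressure, caching, and real parity math.

```python
from collections import deque

class HybridVolume:
    """Toy model: writes land in a mirror tier, old data is later moved to parity."""

    def __init__(self, mirror_slots):
        self.mirror_slots = mirror_slots
        self.mirror = deque()    # hot tier, oldest block on the left
        self.parity = []         # cold tier
        self.parity_encodes = 0  # parity calculations performed so far

    def write(self, block):
        # The write is acknowledged as soon as it sits in the mirror tier.
        self.mirror.append(block)
        return "ack"

    def destage(self):
        # Background work: push the oldest blocks down to the parity tier.
        while len(self.mirror) > self.mirror_slots:
            self.parity.append(self.mirror.popleft())
            self.parity_encodes += 1

vol = HybridVolume(mirror_slots=2)
acks = [vol.write(block) for block in ["b1", "b2", "b3", "b4"]]
encodes_before = vol.parity_encodes  # no parity work on the write path
vol.destage()

print(acks, list(vol.mirror), vol.parity, vol.parity_encodes)
```

All four writes are acknowledged before any parity is calculated; the encoding cost is paid later, once per aged block, rather than on every write.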

And don’t forget that we have flash-based caching devices in place before the VM hits the virtual disk!

As for updates to the parity volume, ReFS is very efficient, thanks to its way of abstracting blocks using metadata, e.g. accelerated VHDX operations.

The result here is that we get the performance of mirroring for writes and hot data (plus the flash-based cache!) and the economies of parity/erasure coding.

If money is not a problem, and you need peak performance, you can always go all-mirror.

Storage Efficiency Demo (Multi-Resilient Volumes)

Claus does a demo using PoSH.

Note: 2-way mirroring can lose 1 drive/system and is 50% efficient, e.g. 1 TB of usable capacity has a 2 TB footprint of raw capacity.

- 12 node S2D cluster, each has 4 SSDs and 12 HDDs. There is 500 TB of raw capacity in the cluster.

- Claus creates a 3-way mirror volume of 1 TB (across 12 servers). The footprint is 3 TB of raw capacity. 33% efficiency. We can lose 2 systems/drives

- He then creates a parity volume of 1 TB (across 12 servers). The footprint is 1.4 TB of raw capacity. 73% efficiency. We can lose 2 systems/drives

- 3 more volumes are created, with different mixtures of 3-way mirroring and erasure coding.

- The 500 GB mirror + 500 GB dual parity virtual disk has 46% efficiency with a 2.1 TB footprint.

- The 300 GB mirror + 700 GB dual parity virtual disk has 54% efficiency with a 1.8 TB footprint.

- The 100 GB mirror + 900 GB dual parity virtual disk has 65% efficiency with a 1.5 TB footprint.

Microsoft is recommending that 10-20% of the usable capacity in “hybrid volumes” should be 3-way mirror.

If you went with the 100/900 balance for a light write workload in a hybrid volume, then you’ll get the same performance as a 1 TB 3-way mirror volume, but by using half of the raw capacity (1.5 TB instead of 3 TB).
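The efficiency figures from the demo can be reproduced with simple arithmetic. This sketch assumes the mirror portion costs 3x its size (3-way mirror), the parity portion runs at the 73% efficiency seen on the 12-node cluster, and 1 TB = 1000 GB; the function names are mine.

```python
MIRROR_COPIES = 3         # 3-way mirror stores every block three times
PARITY_EFFICIENCY = 0.73  # dual parity efficiency observed on the 12-node demo cluster

def footprint_gb(mirror_gb, parity_gb):
    """Raw capacity consumed by a volume with the given mirror/parity split."""
    return mirror_gb * MIRROR_COPIES + parity_gb / PARITY_EFFICIENCY

def efficiency(mirror_gb, parity_gb):
    """Usable capacity divided by raw footprint."""
    return (mirror_gb + parity_gb) / footprint_gb(mirror_gb, parity_gb)

# (mirror GB, parity GB) splits used in the demo.
volumes = [(1000, 0), (0, 1000), (500, 500), (300, 700), (100, 900)]

for mirror_gb, parity_gb in volumes:
    raw_tb = footprint_gb(mirror_gb, parity_gb) / 1000
    eff = efficiency(mirror_gb, parity_gb)
    print(f"{mirror_gb:>4} GB mirror + {parity_gb:>4} GB parity: "
          f"{raw_tb:.2f} TB raw, {eff:.0%} efficient")
```

The computed efficiencies (33%, 73%, 46%, 54%, 65%) match the demo; the computed footprints come out slightly above the quoted ones (for example about 2.18 TB rather than 2.1 TB), which suggests the on-screen values were truncated.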

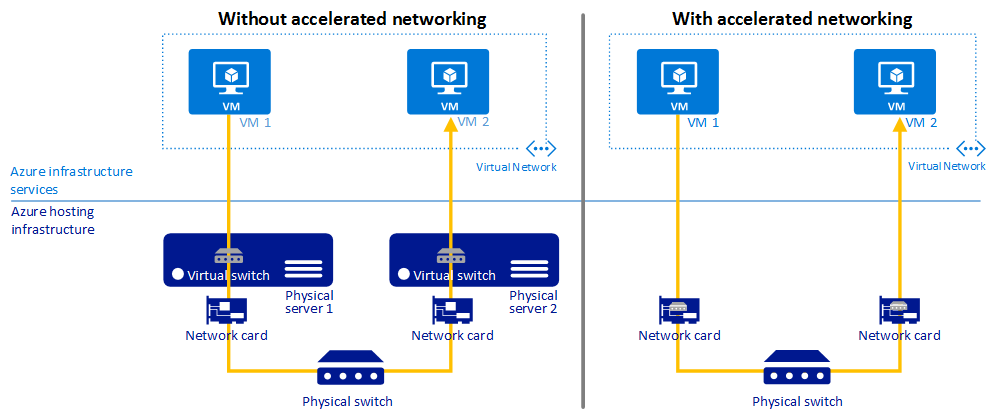

CPU Efficiency

S2D is embedded in the kernel. It’s deep down low in kernel mode, so it’s efficient (fewer context switches to/from user mode). A requirement for this efficiency is using Remote Direct Memory Access (RDMA) which gives us the ultra-efficient SMB Direct.

There’s lots of replication traffic going on between the nodes (east-west traffic).

RDMA means that:

- We use less CPU when doing reads/writes

- But we also can increase the amount of read/write IOPS because we have more CPU available

- The balance is that we have more CPU for VM workloads in an HCI deployment

Customer Case Study

I normally hate customer case studies in these sessions because they’re usually an advert. But this quick presentation by Ben Thomas of Datacom was informative about real world experience and numbers.

They switched from using SANs to using 4-node S2D clusters with 120 TB usable storage – a mix of flash/SATA storage. Expansion was easy compared to compute + SAN: just buy a server and add it to the cluster. Their network was all Ethernet (even the really fast 100 Gbps Mellanox stuff is Ethernet-based) so they didn’t need fibre networks for SAN anymore. Storage deployment was easy. With a SAN you have to create the LUN, zone it, etc. In S2D, 1 cmdlet creates a virtual disk with the required resilience/tiering, formats it, and it appears as a replicated CSV across all the nodes.

Their storage ended up costing them $0.04 / GB or $4 / 1000 IOPS. The IOPS was guaranteed using Storage QoS.

Manageability

Cosmos is back.

You can use PowerShell and FCM, but mid-large customers should use System Center 2016. SCVMM 2016 can deploy your S2D cluster on bare metal.

Note: I’m normally quite critical of SCVMM, but I’ve really liked how SCVMM simplified Hyper-V storage in the past.

If you’re doing an S2D deployment, do a Hyper-V deployment and check a single box to enable S2D and that’s it, you get an HCI cluster instead of a compute cluster that requires storage from elsewhere. Simple!

SCOM provides the monitoring. They have a big dashboard to visualize alerts and usage of your S2D cluster.

Where is all that SCOM data coming from? You can get this raw data yourself if you don’t have System Center.

Health Service

New in WS2016. S2D has a health service built into the OS. This is the service that feeds info to the SCOM agents. It has:

- Always-on monitoring

- Alerting with severity, description, and call to action (recommendation)

- Root-cause analysis to reduce alert noise

- Monitoring software and hardware from SLA down to the drive (including enclosure location awareness)

We actually saw the health service information in an earlier demo when a drive was pulled from an S2D cluster.

It’s not just health. There are also performance, utilization, and capacity metrics. All this is built into the OS too, and accessible via PowerShell or API: Get-StorageSubSystem Cluster* | Get-StorageHealthReport

DataON MUST

Cosmos shows a new tool from DataON, a manufacturer of Storage Spaces and Storage Spaces Direct (S2D) hardware.

If you are a reseller in the EU, then you can purchase DataON hardware from my employer, MicroWarehouse (www.mwh.ie) to resell to your customers.

DataON has made a new tool called MUST for management and monitoring of Storage Spaces and S2D.

Cosmos logs into a cloud app, must.dataonstorage.com. It has a nice bright colourful and informative dashboard with details of the DataON hardware cluster. The data is live and updating in the console, including animated performance graphs.

There is an alert for a server being offline. He browses to Nodes. You can see a healthy node with all its networking, drives, CPUs, RAM, etc.

He browses to the dead machine – and it’s clearly down.

Two things that Cosmos highlights:

- It’s a browser-based HTML5 experience. You can access this tool from any kind of device.

- DataON showed a prototype to Cosmos – a “call home” feature. You can opt in to get a notification sent to DataON of a h/w failure, and DataON will automatically have a spare part shipped out from a relatively local warehouse.

The latter is the sort of thing you can subscribe to get for high-end SANs, and very nice to see in commodity h/w storage. That’s a really nice support feature from DataON.

Cost

So, controversy first, you need WS2016 Datacenter Edition to run S2D. You cannot do this with Standard Edition. Sorry small biz that was considering this with a 2 node cluster for a small number of VMs – you’ll have to stick with a cluster in a box.

Me: And the h/w is rack servers with RDMA networking – you’ll be surprised how affordable the half-U 100 GbE switches from Mellanox are – each port breaks out to multiple cables if you want. Mellanox price up very nicely against Cisco/HPE/Dell/etc, and you’ll easily cover the cost with your SAN savings.

Hardware

Microsoft has worked with a number of server vendors to get validated S2D systems in the market. DataON will have a few systems, including an all-NVME one and this 2U model with 24 x 2.5” disks:

You can do S2D on any hardware with the pieces, but Microsoft really wants you to use the right, validated and tested, hardware. You know, you can put a loaded gun to your head, release the safety, and pull the trigger, but you probably shouldn’t. Stick to the advice, and use specially engineered & tested hardware.

Project Kepler-47

One more “fun share” by Claus.

2-node clusters are now supported by S2D, but Microsoft wondered “how low can we go?”. Kepler-47 is a proof-of-concept, not a shipping system.

These are the pieces. Note that the motherboard is mini-ITX; the key thing was that it had a lot of SATA connectors for drive connectivity. They installed Windows on a USB3 DOM. 32 GB RAM/node. There are 2 SATA SSDs for caching and 6 HDDs for capacity in each node.

There are two nodes in the cluster.

It’s still server + drive fault tolerant. They use either a file share witness or a cloud witness for quorum. It has 20 TB of usable mirrored capacity. Great concept for remote/branch office scenarios.

Both nodes are 1 cubic foot, 45% smaller than 2U of rack space. In other words, you can fit this cluster into one carry-on bag in an airplane! Total hardware cost (retail, online), excluding drives, was $2,190.

The system has no HBA, no SAS expander, and no NIC, switch or Ethernet! They used Thunderbolt networking to get 20 Gbps of bandwidth between the 2 servers (using a PoC driver from Intel).

Summary

My interpretation:

Sooooo:

- Faster than SAN

- Cheaper than SAN

- Probably better fault tolerance than SAN thanks to fault domains

- And the same level of h/w support as high end SANs with a support subscription, via hardware from DataON

Why are you buying SAN for Hyper-V?