This post is a part of the Azure Back to School 2023 online event. In this post, I will discuss using Microsoft Azure Export for Terraform, also known as Aztfexport and previously known as Azure Terrafy (a great name!), to create Terraform code from existing Azure deployments, why you would do it, and share a few tips.

Terraform

Terraform is one of a few Infrastructure-as-Code (IaC) languages out there that support Microsoft Azure. You might wonder why I would use it when Azure has ARM and Bicep. I’ll do a quick introduction to Terraform and then explain my reasoning which you are free to disagree with 🙂

Terraform is a HashiCorp product that is free to use and supported by some paid-for services. Like other IaC languages, it describes a desired end result. The major feature that differs from the native Azure languages is the use of a state file – a file that describes what is deployed in Azure. This state file has a few nice use cases, including:

- The outputs of a resource are documented, enabling effortless integration between resources in the same or even different files – with some effort, outputs from different deployments can be included in another deployment.

- A true what-if engine that (mostly) works, unlike the native what-if in Azure. It lets you plan (pre-review) a deployment’s expected changes and greatly reduces the time required for deployments.

My first encounter with Terraform was a government project where the customer wanted to use Terraform over Bicep. Their reasoning was that elected politicians come and go, and suppliers come and go. If they were going to invest in an IaC skillset, they wanted the knowledge to be transferrable across clouds.

That’s the big advantage of Terraform. While the code itself is not cloud portable, the skill is. Terraform uses providers to be able to manage different resource types. Azure is a provider, written by Microsoft. Azure AD is a provider – ARM/Bicep still do not support Azure AD! AWS and GCP have providers. VMware has a provider. GitHub has a provider – the list goes on and on. If a provider does not exist, you can (in theory) write your own.

On that project, I was meant to be hands-off as an architect. But there were staffing and scheduling issues so I stepped up. Having never written a line of Terraform before I had my first workload, with some review help from a teammate, written in under a day. By the way, the same thing in Bicep took three days! Terraform is really well documented, with lots of examples, and the language makes sense.

Bicep, by contrast, is still beholden to a lot of the complexity of ARM. Doing simple things can involve stupidly complicated functions that only a C programmer (I used to be one) could enjoy (and I didn’t). I got hooked on Terraform and convinced my colleagues that it was a better path than Bicep, which was our original plan to replace ARM/JSON.

Aztfexport

Switching to Terraform creates a question – what do we do with our existing workloads, which were deployed using ClickOps (Portal), scripts, or ARM/Bicep?

Microsoft has created a tool called Azure Export for Terraform (Aztfexport) on GitHub. The purpose of this tool is to take an existing resource group/resource/Graph query string and export it as Terraform code.

The code that is produced is intended as a starting point, not as something to deploy as-is. In other words, Microsoft is not exporting code that should be able to immediately deploy new resources. They say that the produced code should be able to pass a terraform plan: Terraform compares the existing resources with the state file and the code, and reports that the code is clean and no changes are required.
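One way to check that “clean plan” expectation from a script is the -detailed-exitcode option of terraform plan, which exits with 0 when nothing would change, 2 when changes are pending, and 1 on error. The sketch below assumes Terraform is installed and that you are in the folder holding the exported code and state; the function name plan_is_clean is only an illustrative choice.

```shell
# Hypothetical helper: report whether the code in the current folder plans cleanly.
plan_is_clean() {
  plan_rc=0
  # -detailed-exitcode: 0 = no changes, 1 = error, 2 = changes present
  terraform plan -detailed-exitcode -input=false >/dev/null || plan_rc=$?
  case $plan_rc in
    0) echo "clean: no changes required" ;;
    2) echo "drift: the plan contains changes"; return 2 ;;
    *) echo "error: terraform plan failed"; return 1 ;;
  esac
}

# Usage: run "plan_is_clean" after "terraform init" in the export folder.
```

The same check can double as a crude audit: a non-zero result means the code and the deployed resources no longer agree.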

“The Terraform configurations generated by aztfexport are not meant to be comprehensive and do not ensure that the infrastructure can be fully reproduced from said generated configurations. For details, please see limitations.”

Source: Azure/aztfexport (github.com)

Why Use Aztfexport?

If I can’t use the code to deploy resources, then what value is it? Hopefully you will see why aztfexport is a central part of my toolkit. I see it being useful in the following ways:

- Learning Terraform: If you’ve not used Terraform before then it’s useful to see how the code can be produced, especially from resources that you are already familiar with.

- Creating TF for an existing workload: You need to “terrafy” a resource/resource group and you want a starting point.

- Azure-to-Azure migrations: You have a set of existing resources and you want to get a dump of all the settings and configurations.

- Learning how a resource type/solution is coded: My favourite learning method is to follow the step-by-step and then inspect the resource(s) as code.

- Understand how a resource type/solution works: This is a logical jump from the previous example, now including more resources as a whole solution.

- Auditing: Comparing what is there with what should be there – or not there.

- Documentation: The best form of resource documentation is IaC – why create lengthy documentation when the code is the resource?

I did use Aztfexport to learn Terraform more. In my current project, I have used it again and again to do Azure-to-Azure migrations, taking legacy ClickOps deployments and rewriting them as new secure/governed deployments. I’ve saved countless hours capturing settings and configurations and re-using them as new code.

The Bad Stuff

Nothing is perfect, and Aztfexport has some thorns too. Notice that the expected usage is that the produced code should pass a terraform plan. That is because in many situations (like with ARM exports) the code is not usable to deploy resources. That can be because:

- ARM APIs do not expose everything, so how can Terraform get those settings?

- The tool or the providers being used do not export everything.

One example I’ve seen is App Services configurations that do not include the code type details. Another recent one was with WAF policies, where overridden WAF rules were not exported. In both cases, the code would pass a plan, but neither would reproduce the resources. I’ve learned that I do need to double-check things with a resource type that I’ve never worked with before – then I know what to go and manually grab either from an ARM export or a visual inspection in the Portal.

Another thing is that the resources are named by a “machine” – there is no understanding of the role. Every resource is res-1, res-2, and so on, no matter the type or the role in the workload. That is a bit anonymous, but I find that useful when inspecting dependencies between resources.

A giant main.tf file is created, which I break up into many smaller files. I can find relationships based on those easy-to-track dependencies and logically group resources where it suits my coding style.

One feature of TF is the easy reuse of resource IDs. One can easily refer to resource_type.resource_name.id in a property and know that the resource ID of that resource will be used. Unfortunately, some Aztfexport code doesn’t do that, so you get static resource IDs that should be replaced. That happens with other properties of resources too, so it should all be cleaned up to make the code more reusable.
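As a rough aid for that cleanup, a plain grep can list the lines that still carry a literal Azure resource ID. This is only a sketch: the function name find_static_ids is made up for illustration, and it assumes the exported .tf files sit directly in the folder you pass in.

```shell
# Hypothetical helper: list lines in exported *.tf files that contain a
# hard-coded Azure resource ID (a quoted string starting with /subscriptions/).
find_static_ids() {
  # $1: folder holding the exported code (defaults to the current folder)
  grep -n '"/subscriptions/' "${1:-.}"/*.tf
}

# Each hit is a candidate for a reference instead of a literal, for example:
#   before: subnet_id = "/subscriptions/<subscription ID>/resourceGroups/<rg>/providers/Microsoft.Network/virtualNetworks/<vnet>/subnets/<subnet>"
#   after:  subnet_id = azurerm_subnet.res-3.id   # res-3 is a made-up resource name
```

Not every hit can be replaced: an ID that points at a resource outside the exported code has no Terraform resource to refer to, so it has to stay a literal or become a variable.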

Installing Aztfexport

You will need to install Terraform – I prefer to use a Package Manager for that – the online instructions for a manual installation are a mess. You will also require Azure CLI.

The full instructions for installing Aztfexport are shared on GitHub, covering Windows, macOS and Linux. The Windows installation is easy:

winget install aztfexport

You will need to restart your terminal (Windows) to get an updated Path variable so the aztfexport binary can be found.

Before you use aztfexport, you will need to log in using Azure CLI:

Open your terminal

Login:

az login

Change subscription:

az account set --subscription <subscription ID>

Verify the correct subscription was selected by checking the resource groups:

az group list

Create an empty folder on your PC and navigate to that folder in your terminal. The aztfexport tool requires an empty folder, by default, to create an export including all the required provider files and the generated code.

If you want to create an export of a single resource then you can run:

aztfexport resource <resource ID>

If you want to create an export of a resource group, then you can run:

aztfexport resource-group -n <resource group name>

Note that the -n above means “don’t bother me with manual confirmation of what resources to include in the export”. In Terraform, sub-resources that can be managed as their own Terraform resources would otherwise need to be confirmed, and that gets pretty tiresome pretty fast.
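The steps above can be strung together in a small helper. This is a sketch under a few assumptions: you have already run az login, the function name export_rg is made up, and the export-<resource group name> folder naming is only an illustration, not an aztfexport convention.

```shell
# Hypothetical helper: export one resource group into a fresh, empty folder.
export_rg() {
  subscription="$1"   # subscription ID or name
  rg="$2"             # resource group name
  out="export-$rg"    # illustrative folder name

  az account set --subscription "$subscription" || return 1
  # mkdir (without -p) fails if the folder already exists,
  # so the export always starts from an empty folder
  mkdir "$out" || return 1
  # run in a subshell so the caller's working directory is left unchanged
  (cd "$out" && aztfexport resource-group -n "$rg")
}

# Usage: export_rg "<subscription ID>" "<resource group name>"
```

Failing when the folder already exists is deliberate: it avoids mixing a new export with the leftovers of an old one.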

Tips

I’ve got to hammer on this one again: the produced code is not intended for deployment. Take the code, copy and paste it into new files, and clean it up.

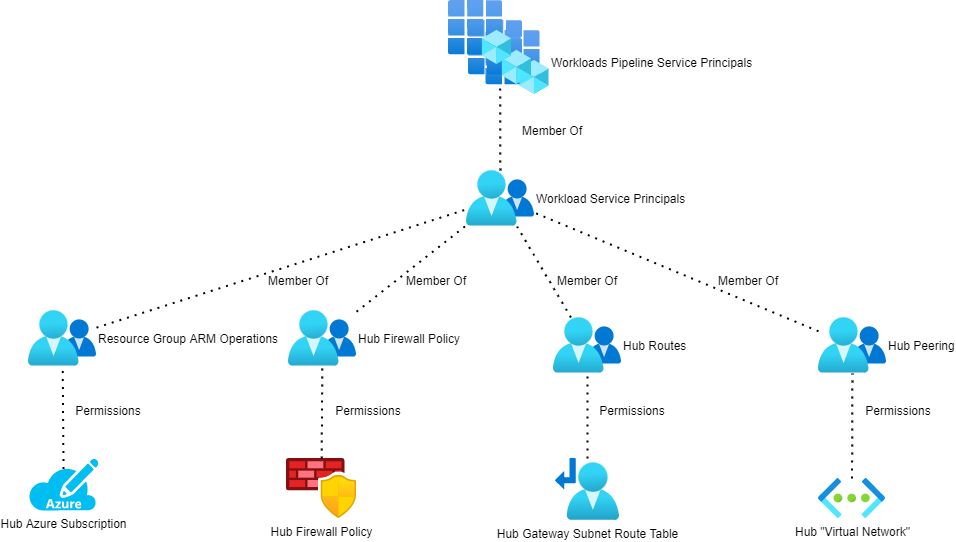

If your goal is to take over an existing IaC/ClickOps deployment with Terraform then you are going to have some fun. The resources already exist and Terraform is going to be confused because there is no state file. You will have to produce a state file using terraform import for every resource definition in your code. That means knowing the resource IDs of everything, including Azure AD objects, role assignments, and sub-resources. You’ll need to understand the format of those resource IDs – use an existing state file for that. Often the resource ID is the simple Azure resource ID, or a derivation of a parent resource ID that you can figure out from another state file. Sometimes you need to wander through Azure AD (look at assignments in scopes that you do have access to if you don’t have direct Azure AD rights), use Azure CLI to “list” resources or items, or browse around using Resource Explorer in the Azure Portal.
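The import itself is one terraform import <address> <resource ID> call per resource, where the address is the resource_type.resource_name used in your code. Once you have collected the IDs, a loop saves some typing. The sketch below assumes a text file of your own making with one address and ID pair per line; that file format and the function name import_from_list are inventions for illustration, not Terraform features.

```shell
# Hypothetical helper: import every "<address> <resource ID>" pair from a file.
# Example line (placeholders, not real values):
#   azurerm_resource_group.main /subscriptions/<subscription ID>/resourceGroups/<resource group name>
import_from_list() {
  # $1: text file with one "<terraform address> <Azure resource ID>" pair per line
  while read -r address id; do
    [ -z "$address" ] && continue   # skip blank lines
    # </dev/null stops terraform from consuming the rest of the list on stdin
    terraform import "$address" "$id" </dev/null || return 1
  done < "$1"
}

# Usage: import_from_list imports.txt
```

Stopping at the first failure keeps it obvious which resource ID needs another look before you carry on.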

Do take some time to compare your code with any previous IaC code or with an ARM export. Look for things that are missing – Terraform has many defaults that won’t be included and that code is missing because it is not required. I often include that code because I know that they are settings that Devs/Ops might want to tune later.

If you have the misfortune of having to work with an existing Terraform module library then you will have to translate the exported code as parameter/variable files for the new code – I do not envy you 🙂

Summary

This post is an introduction to Microsoft Azure Export for Terraform and a quick how-to-get-started guide. There is much more to learn about, such as how to use a custom backend (if resource names in Terraform are not a big deal and to eliminate the terraform import task) or even how to use a resource map to identify resources to export across many resource groups.

The tool is not perfect but it has saved me countless hours over the last year or so, dating back to when it was called Azure Terrafy. It’s one of the tools in my toolkit and I regularly break it out to speed up my work. In my opinion, anyone starting to work with Terraform should install and use this tool.