Did you know that you do not need to use Virtual WAN to implement an SD-WAN with Azure? In fact, contrary to the recommendations from Microsoft, Virtual WAN might be the worst way to add Azure networks to an SD-WAN.

My History With Virtual WAN

You might think that the introduction of this post paints me as a complete hater who has never given Virtual WAN a chance. I have. In fact, I can point out features that some of my 1:1 feedback calls probably contributed to. I’ve implemented Virtual WAN with customers.

However, I’ve seen the problems. I’ve seen that the reality doesn’t always live up to the hype. I’ve personally experienced the lack of troubleshooting capabilities, which left me depending on my own deep understanding of the hidden networking. I’ve seen colleagues struggle with the complexity. I’ve seen how some customers’ routing requirements cannot be met with Virtual WAN. And many architectural features that some organisations require cannot be deployed with Virtual WAN.

I concluded that my time with Virtual WAN was over during a proof of concept that I insisted a customer do. They had previously used Virtual WAN without a firewall. I was asked to build a new multi-region Azure environment (multiple hubs) with firewalls. I was not sure that it would go well – this was before routing intent was in preview. I tested and confirmed that Virtual WAN was not going to work; the customer implemented a Meraki SD-WAN using Virtual Network-based hubs and lost no functionality. In fact, they gained functionality.

In an older case, I convinced a customer to go with Virtual WAN. I regret this one. There was a lot of hype. They used Meraki. There was a solution from Meraki to integrate with the Virtual WAN VPN Gateway. We found bugs in the script and fixed them. But the most annoying thing about that solution was that every time the customer changed anything in the SD-WAN, every VPN tunnel to Azure was torn down and recreated. I heard recently that the customer is looking to remove SD-WAN. I don’t blame them, and I regret ever recommending it to them.

The Microsoft Claims

The Azure Cloud Adoption Framework incorrectly states the following:

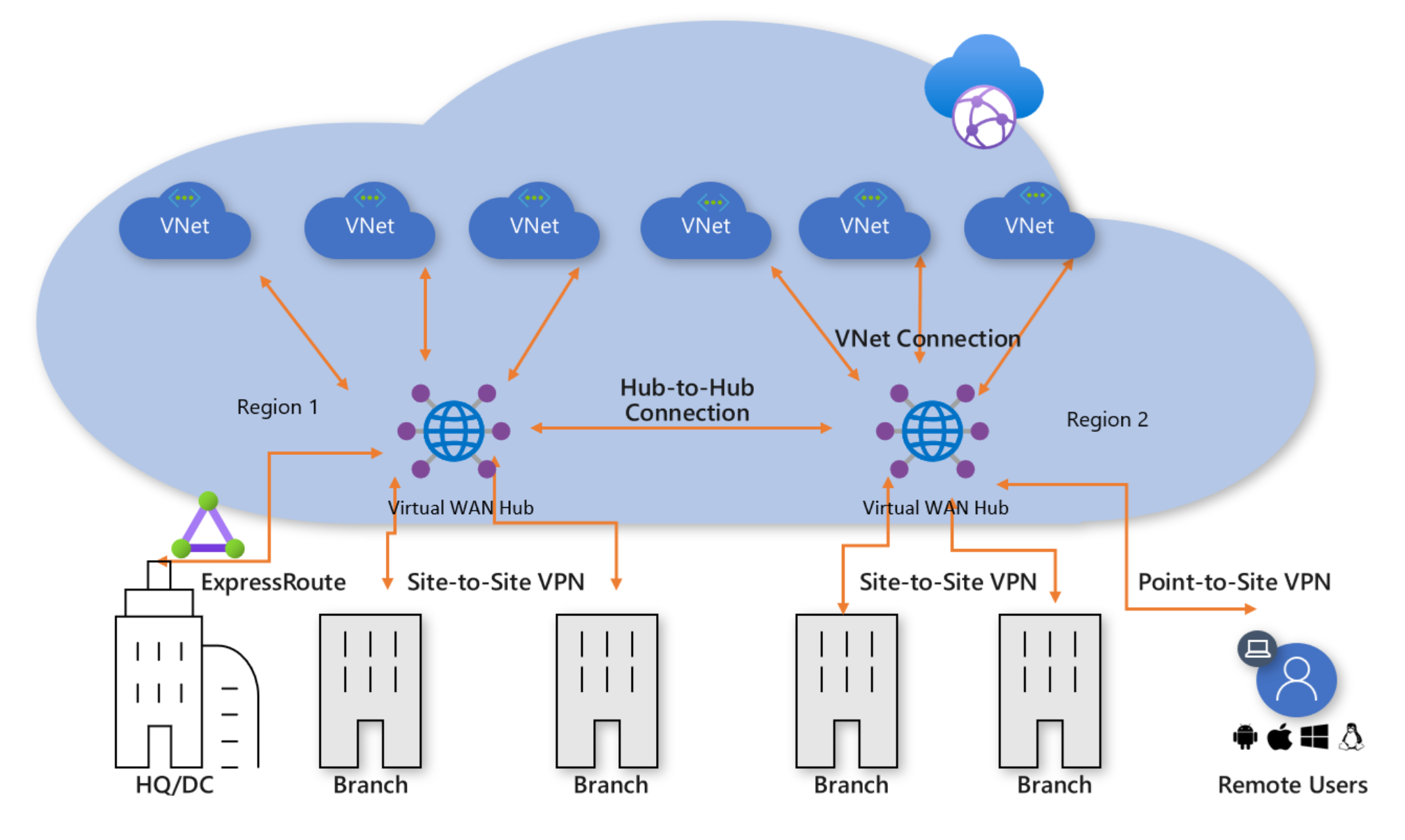

Use a Virtual WAN topology if any of the following requirements apply to your organization:

- Your organization intends to deploy resources across several Azure regions and requires global connectivity between virtual networks in these Azure regions and multiple on-premises locations.

- Your organization intends to use a software-defined WAN (SD-WAN) deployment to integrate a large-scale branch network directly into Azure, or requires more than 30 branch sites for native IPSec termination.

- You require transitive routing between a virtual private network (VPN) and Azure ExpressRoute. For example, if you use a site-to-site VPN to connect remote branches or a point-to-site VPN to connect remote users, you might need to connect the VPN to an ExpressRoute-connected DC through Azure.

I will burst those bubbles one by one.

Several Regions & Global Connectivity

Do you want to deploy across multiple regions? Not a problem. You can very easily do that with Virtual Network-based hubs. I’ve done it again and again.

Do you want to connect the spokes in different regions? Yup, also easy:

- Build each hub-and-spoke from a single IP prefix.

- Your spokes already route via the hub.

- Peer the hubs.

- Create User-Defined Routes in each firewall subnet (you will be using firewalls in this day and age) to route to remote hub-and-spoke IP prefixes via the remote hub firewalls.

Job done! The only additional steps were:

- Peer the hubs

- Add UDRs to each firewall subnet for each remote hub-and-spoke IP prefix

You do that once. Once!
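To make those two extra steps concrete, here is a minimal Python sketch that works out which UDRs each firewall subnet would need. It deploys nothing to Azure. The hub names, prefixes, firewall IPs, and dictionary keys are all hypothetical values made up for illustration; the only Azure term used is the `VirtualAppliance` next hop type.

```python
from dataclasses import dataclass
from ipaddress import ip_network


@dataclass(frozen=True)
class Hub:
    name: str         # hypothetical hub/region label
    prefix: str       # single prefix covering the hub and all of its spokes
    firewall_ip: str  # private IP address of the hub firewall


def firewall_subnet_routes(hubs):
    """For each hub, list the UDRs its firewall subnet needs:
    one route per remote hub-and-spoke prefix, next hop = remote firewall."""
    plan = {}
    for local in hubs:
        routes = []
        for remote in hubs:
            if remote.name == local.name:
                continue
            # Overlapping address plans would make these routes ambiguous.
            if ip_network(remote.prefix).overlaps(ip_network(local.prefix)):
                raise ValueError(f"{local.name} and {remote.name} overlap")
            routes.append({
                "name": f"to-{remote.name}",
                "address_prefix": remote.prefix,
                "next_hop_type": "VirtualAppliance",
                "next_hop_ip": remote.firewall_ip,
            })
        plan[local.name] = routes
    return plan


# Hypothetical three-region address plan.
hubs = [
    Hub("northeurope", "10.10.0.0/16", "10.10.1.4"),
    Hub("westeurope", "10.20.0.0/16", "10.20.1.4"),
    Hub("eastus", "10.30.0.0/16", "10.30.1.4"),
]
plan = firewall_subnet_routes(hubs)
for hub_name, routes in plan.items():
    for route in routes:
        print(hub_name, route["address_prefix"], "->", route["next_hop_ip"])
```

Because each hub-and-spoke is built from a single prefix, the number of routes per firewall subnet is simply the number of remote hubs. Adding spokes later does not change the plan.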

How about connecting the remote sites? Simples: you connect them as usual.

There is some marketing material about how we can use the Microsoft WAN as the company WAN using vWAN. Yes, in theory. The concept is that the Microsoft Global WAN is amazing. You VPN from site A (let’s say Oslo, Norway) to a local Azure region and you VPN from site B (let’s say Houston, Texas) to a local Azure region. Then vWAN automatically enables Oslo <> Texas connectivity over the Microsoft Global Network. Yes, it does. And the performance should be amazing. I did a proof-of-concept in 2 hours with a customer. The performance of VPN directly between Oslo <> Houston was much better. Don’t buy the hype! Question it and test. And by the way, we can build this with VNets too – I was told by an MS partner that they did this solution between two sites on different continents years before vWAN existed.

SD-WAN

Microsoft suggests that you can only add Azure networks to an SD-WAN if you use Virtual WAN.

Here’s some truth. Under the covers, a vWAN hub is built on a traditional Virtual Network. Then you can use (don’t) a VPN Gateway or a third-party SD-WAN appliance for connectivity.

The list of partners supporting vWAN was greatly increased recently – I remember looking for Meraki support a few months ago, and it was not there (it is now). But guess what, I bet you that every one of those partners offers the exact same solution for Virtual Networks via the Marketplace. And I bet:

- There are more partner options

- There are no trade-offs

- The resilience is just the same

I have done Azure/Meraki SD-WAN twice since the above customer X. In both cases, we went with the Azure Marketplace and Virtual Network. And in both cases:

- It was dead simple to set up.

- It worked the first time.

Transitive Routing

Virtual WAN is powered by a feature that is hidden unless you do an ARM export. That feature is a routing service that is quite similar (not exactly identical) to Azure Route Server. Did you know:

- You can deploy Azure Route Server to a Virtual Network. The deployment is a next-next-next.

- It can be easily BGP peered with a third-party networking appliance, including HA services – for example, HA Meraki gets seamless failover using AS PATH when coupled with Azure Route Server.

- The Azure Route Server will learn remote site prefixes from the networking appliance/SD-WAN.

- The Azure Route Server will advertise routes to the networking appliance/SD-WAN.

Azure Route Server BGP propagation is managed using the same VNet peering settings as Virtual Network Gateway.

There is a single checkbox (true/false property) to enable transitive routing between VPN/ExpressRoute remote sites. And that setting is amazing.
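As a rough mental model of what that setting changes, here is a toy Python sketch. It is not the Azure Route Server implementation or its API. The function, the `branch_to_branch` flag name, the peer names, and the prefixes are all hypothetical, and the model ignores AS paths, route preference, and loop prevention.

```python
def advertised_routes(learned, vnet_prefixes, branch_to_branch):
    """Return the prefixes each peer would be told about in this toy model.

    learned: dict mapping peer name -> set of prefixes learned from that peer.
    vnet_prefixes: set of Azure prefixes (the hub and its peered spokes).
    branch_to_branch: the single true/false setting discussed above.
    """
    adverts = {}
    for peer in learned:
        # Azure prefixes are advertised to every peer regardless of the flag.
        routes = set(vnet_prefixes)
        if branch_to_branch:
            # Transitive routing: relay what the other peers have advertised.
            for other, prefixes in learned.items():
                if other != peer:
                    routes |= prefixes
        adverts[peer] = routes
    return adverts


# Hypothetical prefixes: branch offices behind the SD-WAN, and a network
# reached over ExpressRoute.
learned = {
    "sdwan": {"192.168.0.0/16"},
    "expressroute": {"172.16.0.0/12"},
}
vnets = {"10.10.0.0/16"}

off = advertised_routes(learned, vnets, branch_to_branch=False)
on = advertised_routes(learned, vnets, branch_to_branch=True)
print(sorted(off["sdwan"]))  # Azure prefixes only
print(sorted(on["sdwan"]))   # Azure prefixes plus the ExpressRoute prefix
```

With the flag off, each remote side only learns about Azure. With it on, the SD-WAN also learns the ExpressRoute-side prefix and vice versa, which is the transitive path.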

I signed in to work one day and was asked a question. I had built out the environment for a large customer with an HQ in Oslo:

- Remote sites around the world with a Meraki SD-WAN.

- Leased line to Oracle Cloud – the global sites backhauled through Oslo.

- The VNet-based hub in Azure was added to the SD-WAN. All offices were connected directly to Azure via VPN.

- Azure Route Server was added and peered to the Meraki SD-WAN.

- Azure had an ExpressRoute connection (Oracle Cloud Interconnect) to Oracle Cloud.

An excavator had torn up the leased line to Oracle. The essential services in Oracle Cloud were unavailable. I was asked if the Azure connection to Oracle Cloud could be leveraged to get the business back online. I thought for 30 seconds and said, “Yes, give me 5 minutes”. Here’s what I did:

- I checked the box to enable transitive routing in Azure Route Server.

- I clicked Save/Apply and waited a few minutes for the update task to complete.

- I asked the client to test.

And guess what? Contrary to the above CAF text, the client was back online. A few weeks later, I was told that not only did they get back online, but the SD-WAN connection to the VIRTUAL NETWORK-BASED hub in Azure gave the global branch offices lower latency connections than their backhaul through Oslo to Oracle Cloud. Whoda-thunk-it?

vWAN is PaaS

One of the arguments for the vWAN hub is that it pushes complexity down into the platform; it’s a PaaS sub-resource.

Yes, it’s a PaaS sub-resource. Is a well-designed hub complex? A hub should contain very few resources, based around:

- Remote connectivity resource

- Firewall

- Maybe Azure Bastion

There’s not much more to a hub than that if you value security. What exactly am I saving with the more-expensive vWAN?

Limitations of vWAN

Let’s start with performance. A hub in Virtual WAN has a throughput limitation of 50 Gbps. I thought that was a theoretical limit … until I did a network review for a client a few years ago. They had a single workload that pushed 29 Gbps through the hub, 1 Gbps shy of the limit for a Standard tier Azure Firewall. I recommended an increase to the 100 Gbps Premium tier, but warned that the bottleneck was always going to be the vWAN hub.
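The arithmetic behind that warning is simple enough to sketch in Python. The figures are the ones quoted above. The assumption, which is mine, is that the workload traverses both the firewall and the hub, so the lower of the two limits is the ceiling.

```python
HUB_LIMIT_GBPS = 50  # vWAN hub throughput limit quoted above
FIREWALL_LIMIT_GBPS = {"Standard": 30, "Premium": 100}  # per-tier limits quoted above


def effective_ceiling(firewall_tier):
    """Traffic passes through both the firewall and the hub,
    so the lower of the two limits is the real ceiling."""
    return min(HUB_LIMIT_GBPS, FIREWALL_LIMIT_GBPS[firewall_tier])


workload_gbps = 29  # the single workload from the network review
for tier in FIREWALL_LIMIT_GBPS:
    ceiling = effective_ceiling(tier)
    print(f"{tier}: ceiling {ceiling} Gbps, headroom {ceiling - workload_gbps} Gbps")
# Standard: ceiling 30 Gbps, headroom 1 Gbps
# Premium: ceiling 50 Gbps, headroom 21 Gbps
```

Moving to Premium buys headroom, but only up to the hub limit. The other half of the 100 Gbps firewall capacity is unreachable behind a 50 Gbps hub.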

The architectural limitations of vWAN are many – so many that I will miss some:

- No VNet Flow Logs

- Impossible to troubleshoot routing/connectivity in a real way

- No support for Azure Bastion in the hub

- No support for NAT Gateway for firewall egress traffic (SNAT port exhaustion)

- Secured traffic between different secured (firewall) hubs requires Routing Intent

- No Forced Tunnelling in Azure Firewall without Routing Intent

- Routing Intent is overly simplistic – everything goes through the firewall

- No support for IP Prefix for the firewall

- Azure Firewall cannot use Route Server Integration (auto-configuration of non-RFC1918 usage in private networks)

- Hub Route Tables are a complexity nightmare

Impossible Solution

Anyone who has deployed more than a couple of Azure networks has heard the following statement made regarding failing connections over site-to-site networking:

The Azure network is broken

A new site-to-site appliance or firewall has been placed in Azure, and the root cause of the issue is “never the remote network”.

Proving that the issue isn’t the firewall can be tricky. That’s because firewall appliances are black boxes. I updated my standard hub design last year to assist with this:

- Add a subnet with identical routing configuration (BGP propagation and user-defined routes) as the (private) firewall subnet.

- Add a low-spec B-series VM to this subnet with an autoshutdown. This VM is used only for diagnostics.

The design allows an Azure admin to log into the VM. The VM mimics the connectivity of the firewall and allows tests to be done against failing connections. If the test fails from the VM, it proves that the firewall is not at fault.

No other compute resources are placed in the hub.
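The kind of test I mean can be as simple as a TCP handshake attempt from the diagnostics VM. Here is a minimal sketch using only the Python standard library. The function name and the example target in the comment are hypothetical, and this is not a replacement for proper tooling.

```python
import socket
import time


def tcp_probe(host, port, timeout=3.0):
    """Attempt a TCP handshake and report the outcome and elapsed seconds."""
    start = time.monotonic()
    try:
        with socket.create_connection((host, port), timeout=timeout):
            outcome = "open"
    except socket.timeout:
        outcome = "timeout"   # no reply at all: often a drop or a missing route
    except ConnectionRefusedError:
        outcome = "refused"   # the far end answered, but nothing is listening
    except OSError as exc:
        outcome = f"error: {exc}"  # for example, no route to host
    return outcome, round(time.monotonic() - start, 3)


# Hypothetical usage from the diagnostics VM, testing a remote-site server:
# print(tcp_probe("192.168.10.20", 443))
```

Because the VM's subnet has the same routing as the firewall subnet, a timeout from the VM means the same connection fails without the firewall in the picture. That points the investigation at routing or the remote network, not the firewall.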

Here’s the gotcha. I can do this in a VNet hub. I cannot do this in a vWAN hub. The vWAN hub Virtual Network is in a Microsoft-managed tenant/subscription. You have no access, and you cannot troubleshoot it. You are entirely at the mercy of Azure support – and, sadly, we know how that process will go.

Virtual WAN In Summary

You do not need Virtual WAN for connectivity or SD-WAN. So why would one adopt it instead of VNet-based hubs, especially when you consider costs and the loss of functionality? I just do not understand (a) why Microsoft continues to push Virtual WAN and (b) why it continues to exist.