This post discusses the pros and cons of creating and using Infrastructure-as-Code (IaC) modules, based on two years of experience creating and using a modular approach.

Why Modules?

Anyone who has done even a little template work knows that ARM templates can quickly get too big. Even a simple deployment, like a hub & spoke network architecture, can quickly expand to several hundred lines without very much being added. Heck, when Microsoft first released the Cloud Adoption Framework “Enterprise Scale” example architecture, one of the ARM/JSON files was over 20,000 lines long!

The length of a template file can cause so many issues, including but definitely not limited to:

- It becomes hard to find anything

- Big code becomes hard to update: one change can have many unintended repercussions

- Collaboration becomes near impossible

- Agility is lost

One of the pain points that really annoyed one of my colleagues is that “big code” usually becomes non-standardised code. That becomes a big issue when a “service organisation” is supporting multiple clients (a consulting company, a managed services provider, an Operations team, or a cloud centre of excellence).

Modularisation

The idea of modularisation is that commonly used code is written once as a module. That module is then referred to by other code whenever the functions of the module are required. This is nothing new: the concept of an “include” or “DLL” is very old in the computing world.

For example, I can create a Bicep/ARM/Terraform module for an Azure App Service. My module can deploy an App Service the way that I believe is correct for my “clients” and colleagues. It might even build some governance in, such as a naming standard, by automating the naming of the new resource based on some agreed naming pattern. Any customisations for the resource will be passed in as parameters, and any required values for inter-module dependencies can be passed out as outputs.
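To make this concrete, here is a minimal sketch of such a module in Bicep. Everything in it is an illustrative assumption rather than a recommended standard: the file name `appService.bicep`, the parameter names, the `app-<workload>-<environment>-<location>` naming pattern, and the API version. It shows the three ideas from the paragraph above: a naming standard computed inside the module, customisations passed in as parameters, and values other modules need passed out as outputs.

```bicep
// appService.bicep: hypothetical module that deploys one App Service (web app).

@description('Short workload name, for example "crm" (assumed naming input)')
param workloadName string

@allowed([
  'dev'
  'test'
  'prod'
])
param environmentName string

param location string = resourceGroup().location

@description('Resource ID of an existing App Service plan')
param appServicePlanId string

// Governance built in: the caller never chooses the name; the module derives it
// from an assumed pattern, app-<workload>-<environment>-<location>.
var appName = 'app-${workloadName}-${environmentName}-${location}'

resource app 'Microsoft.Web/sites@2022-03-01' = {
  name: appName
  location: location
  properties: {
    serverFarmId: appServicePlanId
    httpsOnly: true
  }
}

// Outputs that the caller (or other modules) can consume as parameters
output appName string = app.name
output defaultHostName string = app.properties.defaultHostName
```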

Before long, I have built out a library of modules, each deploying a different resource type. All I need now is code to call the modules, model dependencies, pass in parameters, and take outputs from one module and pass them in as parameters to others.
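A minimal sketch of that calling code, assuming the modules have been published to a private Bicep registry. The registry name `contosoregistry.azurecr.io`, the module paths and version tags, and the `planId` and `defaultHostName` outputs are all hypothetical. Referencing `plan.outputs.planId` in the second module both passes the value along and makes Bicep deploy the plan first, so the dependency is modelled implicitly.

```bicep
// main.bicep: hypothetical caller that stitches library modules together.
param location string = resourceGroup().location

// Each module is pinned to a specific version (tag) in an assumed private registry
module plan 'br:contosoregistry.azurecr.io/bicep/modules/appserviceplan:v1.0.0' = {
  name: 'deploy-appserviceplan'
  params: {
    workloadName: 'crm'
    environmentName: 'prod'
    location: location
  }
}

module app 'br:contosoregistry.azurecr.io/bicep/modules/appservice:v1.0.0' = {
  name: 'deploy-appservice'
  params: {
    workloadName: 'crm'
    environmentName: 'prod'
    location: location
    // The output of one module becomes a parameter of another; this reference
    // also makes Bicep deploy the plan before the app.
    appServicePlanId: plan.outputs.planId
  }
}

output appHostName string = app.outputs.defaultHostName
```

Pinning each reference to a tag keeps existing callers stable, but it also means every new module version has to be released and adopted deliberately.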

The Benefits

Quickly, the benefits appear:

- You write less code because the code is written once and you reuse it.

- Code is standardised. You can go from one workload to another, or one client to another, and you know how the code works.

- Governance is built into the code. Things like naming standards are taken out of the hands of the human and written as code.

- You have the potential to tap into new Azure features such as Template Specs.

- Smaller code is easier to troubleshoot.

- Breaking your code into smaller modules makes collaboration easier.

The Issues

Most of the issues are related to the fact that you have now built a software product that must be versioned and maintained. Few of us outside the development world have the know-how to do this. And quite frankly, the work is time-consuming and detracts from the work that we should be doing.

- No matter how well you write a module, it will always require updates. There is always a new feature or a previously unknown use case that requires new code in the module.

- New code means new versions. No matter how well you plan, new versions will change how parameters are used and will introduce breaking changes for some or all existing uses of the module.

- Trying to create a one-size-fits-all module is hard. Azure App Services are a perfect example because there are dozens if not hundreds of different configuration options. Your code will become long.

- The code length is compounded by code complexity. Many optional settings still need some value, even if it is just NULL, so every omitted value has to be handled. Quickly you will have if-then-elses all over your code.

- You will have to create a code release and versioning system that must be maintained. These are skills that Ops people typically do not have.

- Code changes will now be slower. If a project needs a previously unwritten module or feature, the new code cannot be used until it goes through the software release mechanism. Now you have lost one of the key features of The Cloud: agility.
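To illustrate how optional settings turn into conditionals, here is a hypothetical App Service module that supports just three optional features. The parameter names and API version are assumptions; the pattern is what matters: an empty-string check, a loop over an optional object, and a conditionally deployed child resource. Every further option adds another branch like these.

```bicep
// Hypothetical module showing how optional features multiply conditionals.
param appName string
param location string = resourceGroup().location
param appServicePlanId string

@description('Optional: subnet resource ID for VNet integration; leave empty to skip')
param subnetId string = ''

@description('Optional: app settings as a name/value object')
param appSettings object = {}

@description('Optional: also deploy a "staging" deployment slot')
param deployStagingSlot bool = false

resource app 'Microsoft.Web/sites@2022-03-01' = {
  name: appName
  location: location
  properties: {
    serverFarmId: appServicePlanId
    httpsOnly: true
    // Optional value: send null when the caller did not supply a subnet
    virtualNetworkSubnetId: empty(subnetId) ? null : subnetId
    siteConfig: {
      // Optional object: convert {name: value} pairs into the array ARM expects
      appSettings: [for setting in items(appSettings): {
        name: setting.key
        value: setting.value
      }]
    }
  }
}

// Optional child resource: deployed only when the caller asks for it
resource stagingSlot 'Microsoft.Web/sites/slots@2022-03-01' = if (deployStagingSlot) {
  parent: app
  name: 'staging'
  location: location
  properties: {
    serverFarmId: appServicePlanId
  }
}
```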

So What Is Right?

The answer is, I do not know. I know that “big code” without some optimisation is not the way forward. I think the type of micro-modularisation (one module per resource type) that we normally think of when “IaC Modules” is mentioned doesn’t work either.

One of the reasons that I’ve been working on and writing about Bicep/Azure Firewall/DevSecOps recently is to experiment with things such as the concept of modularisation. I am starting to think that, yes, the modularisation concept is what we need, but how we have implemented the module is wrong.

My biggest concern with the micro-module approach is that it actually slowed me down. I ended up spending more time trying to get the modules to run cleanly than I would have if I’d just written the code myself.

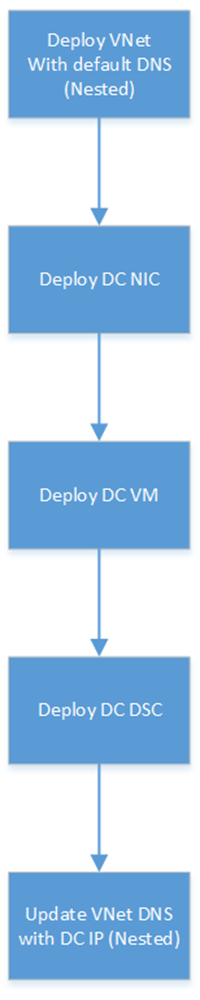

Maybe the module should be a smaller piece of code, but it shouldn’t be a read-only piece of code. Maybe it should be an example that I can take and modify to my own requirements. That’s the approach that I have used in my DevSecOps project. My Bicep code is written into smaller files, each handling a subset of the tasks. That code could easily be shared in a reference library by a “cloud centre of excellence” and a “standard workload” repo could be made available as a starting point for new projects.
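A minimal sketch of what the entry point of such a “standard workload” starter repo might look like, assuming hypothetical `network.bicep` and `firewall.bicep` files and a `firewallSubnetId` output. The difference from a module library is in the paths: the modules are local files that the project copied from a reference library and edits as its own, rather than read-only, versioned packages pulled from a registry.

```bicep
// main.bicep in a hypothetical "standard workload" starter repo.
// The files under ./modules were copied from a reference library and now belong
// to this project: change them directly instead of waiting for a new release.
param location string = resourceGroup().location
param workloadName string

module network './modules/network.bicep' = {
  name: 'deploy-network'
  params: {
    location: location
    workloadName: workloadName
  }
}

module firewall './modules/firewall.bicep' = {
  name: 'deploy-firewall'
  params: {
    location: location
    // Assumed output of the local network file
    firewallSubnetId: network.outputs.firewallSubnetId
  }
}
```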

Please share below if you have any thoughts on the matter.