I was recently asked to do an interview by Allen O’Neill on the C# Corner website, a site dedicated to developer readers. In this interview, I talk about being an IT pro, cloud, devops and more. You can read part 1 of the interview here. Part 2 will be published next week.

Tag: Cloud

Azure Global Bootcamp Dublin – When Disaster Strikes

I spent Saturday afternoon in the offices of Microsoft Ireland at the very successful Azure Global Bootcamp event in Dublin. Other speakers covered a variety of topics for the 160 (approx) attendees and I wrapped up the day with a session on using Azure Site Recovery as a virtual DR site in the cloud for Hyper-V, VMware, and physical servers.

I was pretty exhausted going into the session, but it was good fun for me to do it. The crowd was engaged, and they even laughed at one or two of my attempts at humour. There was loads of engagement afterwards which was as much fun, even if maybe 95% of the audience were developers.

You can find my PowerPoint deck on SlideShare:

Here are a few photos that some folks took:

Starting off [Image credit: Niall Moran, Microsoft]

One of the two rooms used on the day [Image credit: Ryan Mesches, Microsoft]

I stood between the audience and food – so I had some fun [Image credit: Rob Allen, Unity]

Vikas Sahni (organiser & speaker), Bob Duffy (SQL MVP and speaker), and me.

About 95% of the audience identified themselves as developers to one of the previous speakers. Around 40% of the room claimed to already have DR services in place. So I’m curious why so many stuck around for an IT pro topic on DR. Maybe they wanted a cheaper, cloud-based alternative?

Global Azure BootCamp 2016 – Dublin

Microsoft and “the community” are partnering once again to run The Azure Global BootCamp. ICYMI, the boot camp is a one-day event in locations around the world, where Azure veterans share their knowledge with attendees at this free event.

This event is running in Dublin at 09:30 on Saturday April 16th at Microsoft Atrium Building B, at Carmanhall Road, in Sandyford Industrial Estate, Dublin 18.

The agenda is:

- What’s new in Azure – Niall Moran (Microsoft)

- Building and Deploying Azure App Services – Aidan Casey (MVP)

- Migrating SQL to Azure, an Architectural Perspective – Bob Duffy (MVP)

- Building Real World applications – Vikas Sahni

- When disaster strikes – Aidan Finn (MVP)

My session will be focusing on the hybrid cloud solution where Azure acts as a DR site for your on-premises servers (physical, VMware, or Hyper-V).

The event page, with agenda and registration can be found here.

Microsoft News – 19 October 2015

It turns out that Microsoft has been doing some things that are not Surface-related. Here’s a summary of what’s been happening in the last while …

Hyper-V

- Microsoft Loves Linux Deep Dive #2 – Linux and FreeBSD Integration Services Core Features: Talking about the services that are integrated into Linux guest OSs on Hyper-V.

- Shared Hyper-V virtual disk is inaccessible when it’s located in Storage Spaces on a Windows Server 2012 R2-based computer: A hotfix is available.

- Recover From Expanding VHD or VHDX Files On VMs With Checkpoints: Tut tut!

- Find All Virtual Machines With A Duplicate Static MAC Address On A Hyper-V Cluster With PowerShell: A handy tip from Didier to avoid networking hell.

- Microsoft Loves Linux Deep Dive #3: Linux Dynamic Memory and Live Backup: Dynamic Memory for Linux guests and backup VMs with file system consistency without shutting them down.

- Virtual Network Appliances I Use for Hyper-V Labs: A post by Didier Van Hoye.

- Microsoft Loves Linux Deep Dive #4: Linux Network Features and Performance: More about open source on Hyper-V.

- KB3095308 – VMs may not get additional memory although they’re set to use Dynamic Memory in Windows Server 2012 R2: You experience a poor performance, and IIS servers may run out of memory at peak times.

- KB3093571 Update to replicate multiple VM groups and VMs that use shared VHDs in Windows Server 2012 R2 or Windows Server 2012: This article describes a hotfix that enables multiple virtual machine (VM) groups replication and the ability to replicate VMs that use shared Virtual Hard Disks (VHDs) in Windows Server 2012 R2 or Windows Server 2012. This is BIG – I’ll have more on this topic later.

- Windows 10 Build 10565 Adds Nested Hyper-V: A post I wrote for Petri.com.

- KB3093899 – VMs that run on CSVs fail if DCM can’t query volumes in Windows Server 2012 R2: This article describes an issue that occurs when Desired Configuration Management (DCM) can’t query volumes in Windows Server 2012 R2.

- KB961804 – Virtual machines are missing, or error 0x800704C8, 0x80070037, or 0x800703E3 occurs when you try to start or create a virtual machine: This problem may be caused by antivirus software that is installed in the parent partition if the real-time scanning component is configured to monitor the Hyper-V virtual machine files. Follow Microsoft’s guidance on antimalware for Hyper-V hosts, and stop being a “yes sir, no sir” gombeen kow-towing to the know-nothing security “expert”.

- Trunking With Hyper-V Networking: Trunk the virtual switch port to allow a virtual appliance to connect to multiple VLANs at once.

- Remove Lingering Backup Checkpoints from a Hyper-V Virtual Machine: A recovery snapshot stays and you cannot apply or delete it.

- Hyper V Amigos Podcast Episode 1 – Ben Armstrong: Hyper-V MVP Carsten Rachfahl interviews Microsoft PM, the Virtual PC Guy.

Windows Server

- Windows Server Containers, what are they and where do they fit? Read the Microsoft explanation, and then read my one.

- Memory Compression in Windows 10 Threshold 2: Improving resource utilization with TH2, the next major Windows release, coming in November.

- “Not enough server storage is available to process this command” error when you try to access file shares on an SOFS-configured server: You ignored the guidance and used SOFS as an end-user file server. Seriously – try reading!

- New Windows Server 2016 Storage Video Series: A set of videos on WS2016 storage.

- KB3091057 – Cluster validation fails in the “Validate Simultaneous Failover” test in a Windows Server 2012 R2-based failover cluster: This hotfix changes some timing of the “Validate Simultaneous failover” test to more accurately verify the storage compatibility with the failover cluster.

- How to setup Nano Server to send diagnostic messages off-box for remote analysis: Troubleshooting Nano server will be fun if you cannot log into it. So don’t log into it.

Windows Client

- Windows 10 Enterprise Feature – Credential Guard: Using VSM to protect LSASS.

Azure

- Monitor your Azure RemoteApp environment with OpInsight – Part 4: Monitor the User Profile Disks.

- Learn how to back up your IaaS VMs in five minutes: It’s simple: create a backup vault, discover VMs, register VMs, create a policy.

- Microsoft Azure Backup Server: Download the MAB (based on DPM) to get on-premises backup of Hyper-V, SQL, Exchange, SharePoint and clients that you can forward to Azure Backup vaults.

- September updates to Azure RemoteApp: New features in the RDS service. Note that Office 2016 is not supported yet.

- A VM that Azure Site Recovery helps protect goes into a resynchronization state: “Resynchronization is required for the machine X. Resume replication to start resynchronization” and “Resynchronization for virtual machine ‘VMName’ was marked corrupted by the service.”.

- Inside Azure File Storage: Learn more about this application data sharing facility.

- Azure RBAC is GA: Implement just enough administration (JEA) in your Azure subscription.

- Azure RemoteApp Custom Image Licensing Error: When you make your own RemoteApp image from scratch.

- Introducing Microsoft Azure Backup Server: A post I wrote for Petri.com on “Project Venus” progress.

- Azure AD Domain Services is now in Public Preview – Use Azure AD as a cloud domain controller: We’re getting closer to Server Zero. Note the lack of integration with legacy AD.

- The Azure AD App Proxy just keeps getting better and better: Cloud-enable legacy apps.

- KB3090067 – Azure Backup performance update: Update MARS to avoid an issue where incremental backups that use the Azure Backup agent may run slowly for servers that have many files.

- User Environment Management in Azure RemoteApp – Part 1: Using UE-V with the user profile disks.

- IIS and Azure Files: Can I host my IIS web content in the cloud using Azure Files? Yes you can put your websites in Azure Files and use shared configuration for shared web content between a farm of auto-scaling, load balanced VMs in an availability set with auto-scaling enabled. Yum!

- Get Fast + Easy Support with OMS: Add Microsoft support users to a workspace so they can see the same thing as you.

Office 365

- Cloud security controls series – OneDrive for Business: Compliance and security stuff.

- Data Loss Prevention in OneDrive for Business, SharePoint Online and Office 2016 is rolling out: ODB is finally starting to improve.

- Share with the click of a button in Office 2016: How to edit cooperatively.

- What’s new – September 2015: A bit of a quiet month in Office, right? It was just Office 2016, Exchange 2016, …

- I sync therefore I am…: A preview of the new ODB sync client

- Azure PowerShell 1.0 Preview: A preview of v1.0 which appears to focus on improving admin of Azure Resource Manager.

Miscellaneous

- A message to our customers about EU – US Safe Harbour: A comment by Microsoft’s legal honcho, Brad Smith, following the recent ECJ ruling that Safe Harbour is “invalid”.

Understanding & Pricing Azure Virtual Machine Backup

In this article I want to explain how you can back up Azure virtual machines using Azure Backup. I’ll also describe how to price up this solution.

Backing up VMs

Believe it or not, up until a few weeks ago, there was no supported way to backup production virtual machines in Azure. That meant you had no way to protect data/services that were running in Azure. There were work-arounds, some that were unsupported and some that were ineffective (both solution and cost-wise). Azure Backup for IaaS VMs was launched in preview, and even if it was slow, it worked (I relied on it once to restore the VM that hosts this site).

The service is pretty simple:

- You create a backup vault in the same region as the virtual machines you want to protect.

- Set the storage vault to be LRS or GRS. Note that Azure Backup uses the Block Blob service in storage accounts.

- Create a backup policy (there is a default one there already)

- Discover VMs in the region

- Register VMs and associate them with the backup policy

Like with on-premises Azure Backup, you can retain up to 366 recovery points, and using an algorithm, retain X dailies, weeklies, monthlies and yearly backups up to 99 years. A policy will backup a VM to a selected storage account once per day.

This solution creates consistent backups of your VMs, supporting Linux and Windows, without interrupting their execution:

- Application consistency if VSS is available: Windows, if VSS is functioning.

- File system consistency: Linux, and Windows if VSS is not functioning.

The speed of the backup is approximately:

The above should give you an indication of how long a backup will take.

Pricing

There are two charges, a front-end charge and a back-end charge. Here is the North Europe pricing of the front-end charge in Euros:

The front-end charge is based on the total disk size of the VM. If a VM has a 127 GB C:, a 40 GB D: and a 100 GB E: then there are 267 GB. If we look at the above table we find that this VM falls into the 50-500 GB rate, so the privilege of backing up this VM will cost me €8.44 per month. If I deployed and backed up 10 of these VMs then the price would be €84.33 per month.

Backup will consume storage. There are three aspects to this, and quite honestly, it’s hard to price:

- Initial backup: The files of the VM are compressed and stored in the backup vault.

- Incremental backup: Each subsequent backup will retain differences.

- Retention: How long will you keep data? This impacts pricing.

Your storage costs are based on:

- How much space is consumed in the storage account.

- Whether you use LRS or GRS.

Example

I have 5 VMs in North Europe, each with 127 GB C:, 70 GB D:, and 200 GB E:. I want to protect these VMs using Azure Backup, and I need to ensure that my backup has facility fault tolerance.

Let’s start with that last bit, the storage. Facility fault tolerance drives me to GRS. Each VM has 397 GB. There are 5 VMs so I will require at most 1985 GB for the initial backup. Let’s assume that I’ll require 5 TB including retention. If I search for storage pricing, and look up Block Blob GRS, I’ll see that I’ll pay:

- €0.0405 per GB per month for 1 TB = 1024 * €0.0405 = €41.48

- €0.0399 per GB per month for the next 49 TB = 4096 * €0.0399 = €163.44

For a total of €204.92 for 5 TB of geo-redundant backup storage.

The VMs are between 50-500 GB each, so they fall into the €8.433 per protected instance bracket. That means the front-end cost will be €8.433 * 5 = €42.17.

So my total cost, per month, to backup these VMs is estimated to be €42.17 + €204.92 = €247.09.
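The arithmetic in this example can be sketched in a few lines of Python. This is only an illustration: the function and constant names are made up for this post, the prices are the ones quoted above, and it assumes that each charge is rounded up to the next cent (which is how the figures above appear to have been worked out). It only models the first two GRS storage tiers.

```python
from decimal import Decimal, ROUND_CEILING

CENT = Decimal("0.01")

# Prices quoted in the example above (North Europe, at the time of writing).
FRONT_END_PER_INSTANCE = Decimal("8.433")  # 50-500 GB protected instance
GRS_FIRST_TB_PER_GB = Decimal("0.0405")    # Block Blob GRS, first 1 TB
GRS_NEXT_49_TB_PER_GB = Decimal("0.0399")  # Block Blob GRS, next 49 TB


def round_up_to_cent(amount: Decimal) -> Decimal:
    """Round a euro amount up to the next cent (an assumption, see above)."""
    return amount.quantize(CENT, rounding=ROUND_CEILING)


def backup_cost(vm_count: int, storage_gb: int) -> dict:
    """Estimate the monthly front-end and back-end charges in euros."""
    front_end = round_up_to_cent(FRONT_END_PER_INSTANCE * vm_count)
    first_tier_gb = min(storage_gb, 1024)     # first 1 TB
    next_tier_gb = max(storage_gb - 1024, 0)  # anything above 1 TB
    storage = (round_up_to_cent(first_tier_gb * GRS_FIRST_TB_PER_GB)
               + round_up_to_cent(next_tier_gb * GRS_NEXT_49_TB_PER_GB))
    return {"front_end": front_end, "storage": storage,
            "total": front_end + storage}


estimate = backup_cost(vm_count=5, storage_gb=5 * 1024)
print(estimate["front_end"])  # 42.17
print(estimate["storage"])    # 204.92
print(estimate["total"])      # 247.09
```

Change `vm_count` and `storage_gb` to price a different estate, but remember that the front-end rate depends on the size bracket of each VM.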

What Are Azure Virtual Networks

When I started learning how to use Azure IaaS, I probably spent most of my time learning about Azure virtual networking. I found VMs to be easy; it’s just self-service virtualization and it’s based on Hyper-V so it was an easy evolution for me. But the networking required a bit of learning, especially because there are lots of options, lots of it is driven by PowerShell, and it is constantly evolving.

What are Virtual Networks?

As I’ve said many times before, I used to work in the hosting business, back in a time when the VLAN was the dominant way to deploy customers. If sales signed up a customer, that customer got one or more VLANs, and the network guys panicked. More VLANs were being added, more firewall rules had to be deployed, and NAT had to be configured. We were looking at hours-to-days of waiting before I could drag a few templates from the VMM or vCenter library to have the customer up and running. In Azure, you do not call up Microsoft and say “hey, I need 2 VLANs, please”. Instead, you run some PowerShell or walk through a wizard and deploy your own isolated network address space.

A virtual network is kind of like a LAN. It is an isolated address space that can be divided into automatically routed subnets. You can create virtual networks from each of the following ranges:

- 192.168.0.0

- 172.16.0.0

- 10.0.0.0

We use these virtual networks and subnets to connect virtual machines within a single region. Each virtual network is isolated, so you might create 2 vNETs and they will be completely secure from each other unless you take steps to connect them.

The above diagram shows a deployment of a virtual network with 2 subnets, modelling the concept of a DMZ and back-end subnet. By default, there are no firewall rules or blocks between subnets in the same virtual network. However, you can deploy a policy-based set of rules called network security groups (NSGs) to isolate virtual machines or subnets.

Virtual Network and Subnet Addresses

As you can see, they are private ranges. You can get up to 5 public IP addresses for free (if you use them) to implement NAT-like “end points” with virtual machines, where you specify that if any traffic comes in on a port, that traffic is sent to a VM or set of VMs (external load balancing, which is free, as is internal load balancing). These public IP addresses are usually provided by a cloud service, and are called VIPs (virtual IP addresses). Note that VIPs are dynamic by default, but you can reserve them for free (as long as the associated service is active).

You can carve up your address space to create subnets. I recommend two practices with my customers:

- KISS: Keep it simple, stupid. I might deploy a virtual network with an address of 10.0.0.0/16. I can create plenty of subnets with a /24 address. So subnet 1 might be 10.0.0.0/24 and subnet 2 might be 10.0.1.0/24. If you are subnetting-disabled like me, this is easy to understand, you simply increase the third octet by a decimal 1. Ignore this rule (a) if you do understand subnetting and (b) you need more-smaller subnets or fewer-larger subnets.

- Plan for expansion and connectivity: If a customer is using 192.168.0.0/24 on premises then don’t deploy a virtual network of 192.168.0.0, even if you don’t plan on connecting them at the moment. Things change, and a customer’s plans with Azure will evolve. Treat Azure virtual networks like branch office networks, and plan for connectivity and routing.
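If you are subnetting-disabled like me, Python’s standard `ipaddress` module can do the sums for you. The sketch below carves the 10.0.0.0/16 example into /24 subnets and checks whether a proposed virtual network clashes with an on-premises range. The `clashes` helper is a made-up name, and the /16 prefix on the 192.168.0.0 virtual network is an assumption for illustration.

```python
import ipaddress

vnet = ipaddress.ip_network("10.0.0.0/16")

# KISS: carve the /16 into /24 subnets; the third octet goes up by 1 each time.
subnets = list(vnet.subnets(new_prefix=24))
print(subnets[0], subnets[1])  # 10.0.0.0/24 10.0.1.0/24
print(len(subnets))            # 256


def clashes(vnet_range: str, on_premises_range: str) -> bool:
    """Return True if the two address ranges overlap."""
    return ipaddress.ip_network(vnet_range).overlaps(
        ipaddress.ip_network(on_premises_range))


# Plan for connectivity: avoid ranges that the customer already uses on-premises.
print(clashes("192.168.0.0/16", "192.168.0.0/24"))  # True
print(clashes("10.0.0.0/16", "192.168.0.0/24"))     # False
```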

Virtual Network Connectivity

As I said before, a virtual network is isolated. You can create endpoints to allow traffic into virtual machines from the Internet. However, you might want private communications between 2 vNETs or between a vNET and an on-premises network. This is made possible using a gateway.

Connecting an on-premises network via VPN to an Azure vNET using a gateway

A gateway is a virtual appliance that you deploy on your vNET, usually in a /29 subnet that supports just 3 IP addresses (default gateway to route, and 2 others); a vNET can have one gateway. The gateway is actually a cluster of 2 virtual machines that are managed by Azure – you simply configure gateway settings and you cannot even see these VMs. The purpose of the gateway is to enable private and secure network connections with routing:

- Point-to-Site VPN: This enables users to VPN into an Azure vNET. Really, this is intended as a backdoor for administrators. End users should use the software layer (Windows Server VPN/DirectAccess or VPN services from a virtual appliance).

- Website-to-vNET VPN: An Azure website can VPN into a vNET, allowing that website to talk to services hosted on a VM, e.g. MySQL for WordPress.

- vNET-to-vNET VPN: Connect two vNETs together even if they are in different regions or subscriptions, enabling VMs in different vNETs to talk to each other.

- Site-to-site VPN: Connect a customer site to an Azure vNET, enabling on-premises machines to talk freely with in-Azure VMs. For example, DCs on the customer site could replicate with DC VMs from the same domain that are running in Azure over this encrypted connection over the Internet.

- ExpressRoute: Get an SLA-driven private WAN connection into your virtual networks, using an MPLS WAN or hop via a POP. This service is only supported by a small number of operators, and some of these operators are poor at communicating to their own staff that this service is available – BT in UK/Ireland is particularly guilty of this.

Connecting multiple sites and Azure vNETs using ExpressRoute

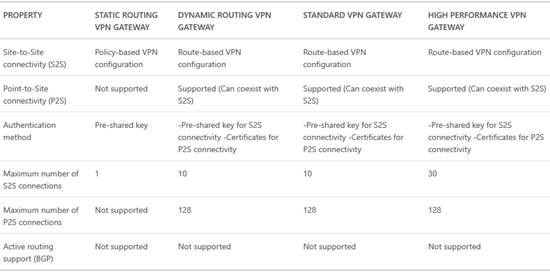

There are 3 models of gateway that you can deploy:

The basic gateway breaks down into two types:

- Static: Also known as a policy-based VPN, this is good for simple deployments, e.g. a 1:1 site-to-site VPN connection, and pretty much nothing else. This type has the most support from 3rd party firewall appliances.

- Dynamic: Also known as a route-based VPN, dynamic routing provides the most compatibility with connection features (site-to-site, point-to-site, vNET-to-vNET, and website-to-vNET)

Long story short, we always want dynamic routing, but only a small number of on-premises appliances support it.

You can find feature support across the tiers and types of gateway below:

Remember how I said a gateway is a pair of VMs? The basic tier is a VM with 1 vCPU. This limits how many interrupts the VM can handle and this restricts inbound bandwidth to around 80 Mbps (100 is listed but 80 is what is achievable). The next tier up, Standard, uses VMs with 2 x vCPUs and this doubles the interrupt handling capability, increasing the inbound bandwidth to 200 Mbps – thank you Hyper-V vRSS.

Network Security Groups & Forced Tunneling

These are two features that are almost related because they are likely to be used together. In the above diagram, NSGs are used to restrict network communications. Forced Tunneling could also be used:

- Allow the DMZ VMs to communicate directly with the Internet, according to security policies. Outbound traffic will go directly to the Internet via the Azure fabric.

- Force back-end VMs to route to the Internet via a site-to-site network connection, ensuring that all traffic from these machines is more closely controlled.

Using Forced Tunneling to manage outbound traffic from Azure subnets

IP Addressing

I’ve already talked about how VIPs are used to enable the public Internet to access your vNETs, subject to endpoint rules that you create. What about internal addresses? The Azure vNET will supply the IPv4 configurations to your VMs. From the guest OS point of view, this is DHCP … AND YOU SHOULD LEAVE IT THAT WAY.

The default gateway of a vNET is the first IP address in the range – .1 if you follow my KISS rule. The first VM to come online will be assigned the 4th address (KISS: .4) and then the fifth, etc. VMs will not get static addresses. If you shutdown (de-allocate) a VM then it is not guaranteed the same IP if you reboot it – however you can reserve an IP; I typically do this with DCs.
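To see which addresses you should expect, here is a small sketch using Python’s `ipaddress` module. It assumes a KISS-style 10.0.0.0/24 subnet and simply encodes the behaviour described above (gateway on the first address, VMs starting at the fourth); it does not query Azure.

```python
import ipaddress

subnet = ipaddress.ip_network("10.0.0.0/24")
hosts = list(subnet.hosts())   # 10.0.0.1 through 10.0.0.254

default_gateway = hosts[0]     # first address in the range
vm_addresses = hosts[3:]       # VMs are handed out from the 4th address on

print(default_gateway)         # 10.0.0.1
print(vm_addresses[0])         # 10.0.0.4
print(vm_addresses[1])         # 10.0.0.5
```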

Name services can also be deployed, e.g. DNS on a DC. You can pre-define the DNS part of the IPv4 stack for VMs in the properties of a vNET.

Multiple NICs in a VM

I dealt with this topic a little while ago in a post about finding specs for Azure VMs. Some VM specs support more than 1 vNIC. From what I can make out, this is intended for vendors who are creating virtual appliances that need to span more than one vNET; consider a firewall that connects the external world and VMs in several vNETs – you don’t want any routing other than the firewall appliance so that appliance needs to be able to communicate directly with each vNET.

Connecting a virtual appliance to multiple subnets in an Azure vNET

Pricing

Some good news: vNETs are free. You can have up to 50 vNETs in a single subscription, with up to 2048 VMs per vNET, and up to 500,000 concurrent TCP connections per VM.

NSGs and Forced Tunneling are both free. You can have up to 100 NSGs with 200 rules each per subscription.

You can have up to 50 reserved VIPs, 5 of which are free (including reservation) if they are being used. The pricing for additional or unused VIPs is found here. Private IP addresses are free.

Pricing for a gateway is found here – that covers the cost of connecting a VPN, but you also need to account for outbound data transfers (egress data). Note that you’ll need to work with an ExpressRoute partner to figure out the pricing of that solution – here’s the Microsoft element – I know that Microsoft wants to simplify this pricing, as announced at the recent AzureCon online event.

Data Transfers

This is one of the most misunderstood elements of Azure. There are two types of data transfer:

- Inbound (ingress)

- Outbound (egress)

Let’s keep this simple:

ExpressRoute is charged based on bandwidth (no data transfer charges across this private connection) or metered data (contact your ISP).

Everything else is subject to ingress/egress rules. Azure does not charge for inbound data transfers. And now things get complicated.

Despite many myths, outbound data transfer is charged for, but it depends on the service. If you are using Azure Backup, there is no network data transfer charge. If you have VMs sending data to the Internet or outbound via VPN, then that is subject to charge.

Example

Pricing is based on North Europe at the time of writing.

A customer is deploying a web farm in Azure. They want a virtual network that will have 3 subnets: web, application, and database. They need to offer online web services via 2 always active VIPs, and 1 usually de-allocated VIP. There will be 10 load-balanced web servers (external load balancing) and 5 load balanced application servers (internal load balancing). A site-to-site VPN connection is required from on-premises, the firewall is a WatchGuard Firebox, with a 50 Mbps connection. Each subnet must have policy-based isolation. Web servers can talk directly to the Internet, but app and database servers must always route via the VPN to anything other than the web servers. The web servers are expected to send 1 TB of data to the Internet and receive 400 GB of data.

OK … let’s break this down:

- Virtual network & subnets: free.

- VIPs: We get up to 5 free if they are used, even if they are reserved. The de-allocated VIP will cost around €3/month.

- External load balancing (web servers): Free

- Internal load balancing (app servers): Free

- Gateway (VPN): The WatchGuard doesn’t support dynamic gateways so a static gateway is required. The static basic gateway supports up to 80 Mbps so it’s fast enough. This will cost around €23/month.

- NSG: Free

- Forced Tunneling: Free

- 1024 GB outbound data transfer: First 5 GB is free, and remaining 1019 charged at €0.0734 per GB, which is around €75/month

- 400 GB inbound data: Free

So that works out at: €3 + €23 + €75 = €101/month.
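The same breakdown can be sketched in Python. The prices are the approximate ones quoted in this example, and the names (`egress_cost`, `monthly`) are just for illustration.

```python
FREE_EGRESS_GB = 5       # first 5 GB of outbound data is free
EGRESS_PER_GB = 0.0734   # euros per GB after that (North Europe, as quoted above)


def egress_cost(outbound_gb: float) -> float:
    """Monthly outbound data transfer charge in euros."""
    return max(outbound_gb - FREE_EGRESS_GB, 0) * EGRESS_PER_GB


# Only the chargeable items are listed; everything else in the example is free.
monthly = {
    "de-allocated VIP": 3.0,
    "basic static gateway": 23.0,
    "outbound data (1024 GB)": egress_cost(1024),
}

total = sum(monthly.values())
print(f"{egress_cost(1024):.2f}")  # 74.79
print(round(total))                # 101
```

Inbound data does not appear in the dictionary because Azure does not charge for it.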

Understanding Azure Premium SSD Data Storage & Pricing

If you are deploying services that require fast data then you might need to use shared SSD storage for your data disks, and this is made possible using a Premium Storage Account with DS-Series or GS-Series virtual machines. Read on to learn more.

More Speed, Scottie!

A typical virtual machine will offer up to 300 IOPS (Basic A-Series) or 500 IOPS (Standard A-Series and up) per data disk. There are a few ways to improve data performance:

- More data disks: You can deploy a VM spec that supports more than 1 data disk. If each disk has 500 IOPS, then aggregating the disks multiplies the IOPS. If I store my data across 4 data disks then I have a raw potential 2000 IOPS.

- Disk caching: You can use a D-Series or G-Series to store a cache of frequently accessed data on the SSD-based temporary drive. SSD is a nice way to improve data performance.

- Memory caching: Some applications offer support for caching in RAM. A large memory type such as the G-Series offers up to 448 GB RAM to store data sets in RAM. Nothing is faster than RAM!
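The “more data disks” sum is simple enough to sketch. The function names below are made up, and the result is a raw potential figure only: it ignores any limit imposed by the VM spec or by how the disks are aggregated in the guest OS.

```python
import math


def raw_iops(disk_count: int, iops_per_disk: int = 500) -> int:
    """Raw potential IOPS when data is spread across several data disks."""
    return disk_count * iops_per_disk


def disks_needed(target_iops: int, iops_per_disk: int = 500) -> int:
    """Smallest number of data disks whose raw IOPS meets the target."""
    return math.ceil(target_iops / iops_per_disk)


print(raw_iops(4))                          # 2000
print(disks_needed(1800))                   # 4
print(disks_needed(1800, iops_per_disk=300))  # 6 (Basic A-Series disks)
```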

Shared SSD Storage

Although there is nothing faster than RAM there are a couple of gotchas:

- If you have a large data set then you might not have enough RAM to cache in.

- G-Series VMs are expensive – the cloud is all about more, smaller VMs.

If an SSD cache is not big enough either, then maybe shared SSD storage for data disks would offer a happy medium: lots of IOPS and low latency. It’s not as fast as RAM, but it’s still plenty fast! This is why Microsoft gave us the DS- and GS-Series virtual machines which use Premium Storage.

Premium Storage

Shared SSD-based storage is possible only with the DS- and GS-Series virtual machines – note that DS- and GS-Series VMs can use standard storage too. Each spec offers support for a different number of data disks. There are some things to note with Premium Storage:

- OS disk: By default, the OS disk is stored in the same premium storage account as the premium data disks if you just go next-next-next. It’s possible to create the OS disk in a standard storage account to save money – remember that data needs the speed, not the OS.

- Spanning storage accounts: You can exceed the limits (35 TB) of a single premium storage account by attaching data disks from multiple premium storage accounts.

- VM spec performance limitations: Each VM spec limits the amount of throughput that it supports to premium storage – some VMs will run slower than the potential of the data disks. Make sure that you choose a spec that supports enough throughput.

- Page blobs: Premium storage can only be used to store VM virtual hard disks.

- Resiliency: Premium Storage is LRS only. Consider snapshots or VM backups if you need more insurance.

- Region support: Only a subset of regions support shared SSD storage at this time: East US2, West US, West Europe, Southeast Asia, Japan East, Japan West, Australia East.

- Premium storage account: You must deploy a premium storage account (PowerShell or Preview Portal); you cannot use a standard storage account which is bound to HDD-based resources.

The maximum sizes and bandwidth of Azure premium storage

Premium Storage Data Disks

Standard storage data disks are actually quite simple compared to premium storage data disks. If you use the UI, then you can only create data disks of the following sizes and specifications:

The 3 premium storage disk size baselines

However, you can create a premium storage data disk of your own size, up to 1023 GB (the normal Azure VHD limit). Note that Azure will round up the size of the data disk to determine the performance profile based on the above table. So if I create a 50 GB premium storage VHD, it will have the same performance profile as a P10 (128 GB) VHD with 500 IOPS and 100 MB per second potential throughput (see VM spec performance limitations, above).
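The rounding-up rule can be sketched as a lookup. Only the P10 figures (128 GB, 500 IOPS, 100 MB per second) come from the text above; the P20 and P30 figures in this sketch are my assumptions based on the documentation at the time of writing, so check them against the current table before relying on them. The names `DiskTier` and `tier_for` are made up for illustration.

```python
from typing import NamedTuple


class DiskTier(NamedTuple):
    name: str
    size_gb: int
    iops: int
    throughput_mb_per_s: int


TIERS = [
    DiskTier("P10", 128, 500, 100),    # figures quoted in this post
    DiskTier("P20", 512, 2300, 150),   # assumed figures, verify before use
    DiskTier("P30", 1024, 5000, 200),  # assumed figures, verify before use
]


def tier_for(size_gb: int) -> DiskTier:
    """Round a custom disk size up to the tier that sets its performance."""
    if not 0 < size_gb <= 1023:
        raise ValueError("a data disk must be between 1 and 1023 GB")
    for tier in TIERS:  # ordered smallest first
        if size_gb <= tier.size_gb:
            return tier
    raise ValueError("no tier found")  # unreachable with the limits above


print(tier_for(50).name)   # P10
print(tier_for(50).iops)   # 500
print(tier_for(129).name)  # P20
```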

Pricing

You can find the pricing for premium storage on the same page as standard storage. Billing is based on the 3 models of data disk, P10, P20, and P30. As with performance, the size of your disk is rounded up to the next model, and you are charged based on the amount of storage actually consumed.

If you use snapshots then there is an additional billing rate.

Example

I have been asked to deploy an Azure DS-Series virtual machine in Western Europe with 100 GB of storage. I must be able to support up to 100 MB/second. The virtual machine only needs 1 vCPU and 3.5 GB RAM.

So, let’s start with the VM. 1 vCPU and 3.5 GB RAM steers me towards the DS1 virtual machine. If I check out that spec I find that the VM meets the CPU and RAM requirements. But check out the last column: the DS1 only supports a throughput of 32 MB/second which is well below the 100 MB/second which is required. I need to upgrade to a more expensive DS3 that has 4 vCPUs and 14 GB RAM, and supports up to 128 MB/second.
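That selection process, where throughput rather than CPU or RAM decides the spec, can be sketched like this. The DS1 and DS3 figures are the ones mentioned above; the DS2 row is an assumption that I added to fill the gap, and the names `VmSpec` and `smallest_spec` are made up for illustration.

```python
from typing import NamedTuple, Optional


class VmSpec(NamedTuple):
    name: str
    vcpus: int
    ram_gb: float
    disk_throughput_mb_per_s: int


# Ordered from smallest (cheapest) to largest.
SPECS = [
    VmSpec("DS1", 1, 3.5, 32),
    VmSpec("DS2", 2, 7, 64),    # assumed figures, not taken from this post
    VmSpec("DS3", 4, 14, 128),
]


def smallest_spec(vcpus: int, ram_gb: float,
                  throughput_mb_per_s: int) -> Optional[VmSpec]:
    """Return the first spec that meets every requirement, or None."""
    for spec in SPECS:
        if (spec.vcpus >= vcpus and spec.ram_gb >= ram_gb
                and spec.disk_throughput_mb_per_s >= throughput_mb_per_s):
            return spec
    return None


print(smallest_spec(1, 3.5, 0).name)    # DS1 (CPU and RAM only)
print(smallest_spec(1, 3.5, 100).name)  # DS3 (throughput forces the upgrade)
```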

Note: I have searched high and low and cannot find a public price for DS- or GS-Series virtual machines. As far as I know, the only pricing is in the “Ibiza” preview portal, which is where I got pricing for virtual machines. There I could see that the DS3 will cost around €399/month, compared to around €352/month for the D3.

[EDIT] A comment from Samir Farhat (below) made me go back and dig. So, the pricing page does mention DS- and GS-Series virtual machines. GS-Series are the same price as G-Series. However, the page incorrectly says that DS-Series pricing is based on that of the D-Series. That might have been true once, but the D-Series was reduced in price and the DV2-Series was introduced. Now, the D-Series is cheaper than the DS-Series. The DS-Series is the same price as the DV2-Series. I’ve checked the pricing in the Azure Preview Portal to confirm.

If I use PowerShell I can create a 100 GB data disk in the premium storage account. Azure will round this disk up to the P10 rate to determine the pricing and the performance. My 100 GB disk will offer:

- 500 IOPS

- 100 MB/second (which was more than the DS1 or DS2 could offer)

The pricing will be €18.29 per month for the disk, which is the P10 rate. But don’t forget that there are other elements in the VM pricing such as OS disk, temporary disk, and more.

One could do storage account snapshots to “backup” the VM, but the last I heard it was disruptive to service and not supported. There’s also a steep per GB cost. Use Azure Backup for IaaS VMs and you can use much cheaper block blobs in standard storage to perform policy-based non-disruptive backups of the entire VM.

Understanding Azure Standard Storage and Pricing

Imagine that you’re brand new to Azure. You’ve been asked to price up a solution with some virtual machines. You use the best pricing tool for Azure and land at a page that has a bewildering collection of 12 items. You read through them, and are left none the wiser. I’m going to try to cut through a lot of stuff to help you select the right storage for IaaS solutions such as VMs, backup, and DR.

There are a few things people expect when I present on storage in Azure. They expect LUNs with predefined sizes, they expect to see RAID, and when you talk about duplicate copies, they expect to see each copy. Sorry – it’s actually all much simpler than that – that’s a good thing!

Note that I will cover SSD-based Premium Storage in another post.

Terminology

You do not create LUNs in Azure; storage in Azure comes in units called a storage account. A storage account is an address point in the Azure cloud with 2 secure access keys (a primary key and an alternate secondary key to enable resetting the primary without loss of service).

When you create a storage account you create a unique URL. This could be used publicly … only if you know the very long secret access keys. You do not set a size; you simply store what you need and pay for what you store with up to 500 TB per storage account, and up to 100 storage accounts per subscription (by default). You also set a resiliency level to provide you with some level of protection against physical system failure.

Resiliency Levels

There are 4 resiliency levels, summarized nicely here:

- Locally Redundant Storage (LRS): 3 synchronously replicated copies are stored in a single facility in your region of choice. There is no facility fault tolerance. This is the cheapest resiliency level.

- Geo-Redundant Storage (GRS): 3 synchronously replicated copies are stored in a single facility in your region of choice. 3 asynchronously replicated (no degradation in performance) copies are stored in the neighbouring region, offering facility and region fault tolerance. This is the most expensive resiliency level.

- Read-Access Geo-Redundant Storage (RA-GRS): 3 synchronously replicated copies are stored in a single facility in your region of choice. 3 read-only asynchronously replicated (no degradation in performance) copies are stored in the neighbouring region, offering facility and region fault tolerance, but with read-only access in that other region.

- Zone Redundant Storage (ZRS): Three copies of your data are stored across 2 to 3 facilities in one or two regions.

Note that we cannot use ZRS for IaaS (VMs, backup, DR). Typically we use LRS or GRS for VMs or backup storage. Azure Site Recovery (ASR) currently requires you to use GRS. You can switch between LRS, GRS and RA-GRS, but not from/to ZRS.

You do not see 3 or 6 copies of your data; this is abstracted from your view of the Azure fabric and you just see your storage account.

Here are the “neighbouring site” pairings:

Azure Storage Services

Once you’ve figured out the resiliency levels, the next step in pricing is determining which storage service you will be using. There are four services:

- Blob storage: In the IaaS world, we use this for Azure Backup. Files you upload are created as blobs. You can also use it to store documents, videos, pictures, and other unstructured text or binary data.

- File storage: This is a newly available service that allows you to use a shared folder (no server required) to share data between applications using SMB 3.0. This is not to be used for user file sharing – use a VM or O365.

- Page Blobs & disks: In the IaaS world this is where we store VM virtual hard disks (VHD) for running or replicated (ASR DR) VMs.

- Tables & Queues: This offers NoSQL storage for unstructured and semi-structured data—ideal for web applications, address books, and other user data. Read that as .. for the devs.

This can be confusing. Do you need to create a blob storage account and a file storage account? What if you select the wrong one? It’s actually rather simple. When you upload a file to Azure it’s placed into blob storage in your storage account. When you create a VM, the disks are put into page blobs & disks automatically. If you start using file storage to share data between services via SMB 3.0, then that’s used automatically. And you can use a single storage account to use all 4 services if you want to – Azure just figures it out and bills you appropriately.

Storage Transactions

I am confused at the time of writing this post. Up until now, transactions (an indecipherable term) were a micro-payment billed at some tiny cost per 100,000. I had no idea what they were, but I know from my labs that the costs were insignificant unless you have a huge storage requirement. In fact, in my presentations I normally said:

The cost of estimating the cost of storage transactions is probably higher than the actual cost of the storage transactions.

And when writing this post, I found that storage transactions were no longer mentioned on the Azure storage pricing web page. Hmm! It would be great if that cost was folded into the price per GB – you can actually only do so much activity anyway because of how rack stamps are designed and performance is price-banded.

I’ve been told that people are still being billed, but no rate is publicly listed on the official site. I’ll update when I find out more.

Examples

Let’s say that I need to deploy a bunch of test Windows Server virtual machines that the business isn’t worried about losing. My goal is to keep costs down. I need 1000 GB of storage, accounting for the 127 GB C: drive, and any additional data disks. I know that this will use page blobs & disks, and I’m going to use LRS for this deployment. If I select North Europe as my region then the cost per GB is €0.0422, so the monthly cost will be around €42.20 – I say around because there will be some other small files maintained on storage.

I have a scenario where I need to replicate 5 TB of vSphere virtual machines to Azure using ASR. ASR requires GRS storage and I will be using page blobs & disks. The costs will be €0.0802/GB for the first 1024 GB and €0.0675/GB for the next 4096 GB. That’s €82.1248 + €276.48 = around €359 per month.

And what if I use 100 GB of storage for Azure Backup (DPM or direct)? That’s going to be using blob storage, of either LRS or GRS. I’ll opt for GRS, which will cost €0.0405/GB, so I’ll pay a teeny €4.05 per month for backup storage (Azure Backup has an additional front-end per-instance charge).
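The arithmetic in these three examples can be reproduced with a few lines of Python. This is only a rough sketch: the rates and band sizes are the ones quoted above (Euro, North Europe, at the time of writing), and the function name `tiered_monthly_cost` and the band layout are my own illustration, not anything Azure provides. Where only a single rate is quoted, the sketch treats it as a flat rate.

```python
def tiered_monthly_cost(gb, bands):
    """Price `gb` of storage against a list of (band_size_gb, eur_per_gb) bands.

    A band size of None means "everything that is left". The bands are
    consumed in order, the same way the ASR example above is worked out.
    """
    remaining = gb
    total = 0.0
    for band_size, rate in bands:
        if remaining <= 0:
            break
        used = remaining if band_size is None else min(remaining, band_size)
        total += used * rate
        remaining -= used
    if remaining > 0:
        # The post does not quote a rate beyond these bands, so do not guess one.
        raise ValueError("storage exceeds the bands that were supplied")
    return total


# Rates copied from the examples above; flat rates are a simplification.
LRS_PAGE_BLOBS = [(None, 0.0422)]
GRS_PAGE_BLOBS = [(1024, 0.0802), (4096, 0.0675)]
GRS_BLOCK_BLOBS = [(None, 0.0405)]

test_vms = tiered_monthly_cost(1000, LRS_PAGE_BLOBS)
asr_replicas = tiered_monthly_cost(5 * 1024, GRS_PAGE_BLOBS)
backup = tiered_monthly_cost(100, GRS_BLOCK_BLOBS)

print(f"Test VMs on LRS:   EUR {test_vms:.2f}/month")
print(f"ASR replicas, GRS: EUR {asr_replicas:.2f}/month")
print(f"Azure Backup, GRS: EUR {backup:.2f}/month")
```

Storage transactions and the Azure Backup per-instance charge are deliberately left out, so treat the results as the storage element of the bill only.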

Azure Virtual Machine Specs

This post is a part of a series:

- Understanding Microsoft’s Explanation of Azure VM Specs

- The Different Azure Virtual Machine Types

- Picking an Azure Virtual Machine Tier

- Azure Virtual Machine Specs (this post)

Any prices shown are Euro for North Europe and were correct at the time of writing.

Pick a VM Type

Determine some of the hardware features that you require from the VM. You’re thinking about:

- Is it a normal VM or a machine with fast processors?

- Do you need fast paging or disk caching?

- Must data be on really fast shared storage?

- Do you need lots of RAM?

- Do you need Infiniband networking?

- Is Xeon enough, or do you need GPU computational power?

A-Series: Pick a Tier

If you opt for an A-Series VM then you need to pick a tier. Here you’re considering fabric-provided features such as:

- Load balancing

- Data disk IOPS

- Auto-scaling

CPU and RAM

Specifying an Azure virtual machine is not much different to specifying a Hyper-V/vSphere virtual machine or a physical server. You need to know a few basic bits of information:

- How many virtual CPUs do I require?

- How much RAM do I require?

- How much disk space is needed for data?

With on-premises systems you might have asked for a machine with 4 cores, 16 GB RAM and 200 GB disk. You cannot do that in Azure. Azure, like many self-service clouds, implements the concept of a template. You can only deploy VMs using these templates, which have an associated billing rate. Those templates limit the hardware spec of the machine. So let’s say we need a 4 core machine with 16 GB RAM for a normal workload (A-Series) with load balancing (Standard tier). If we peruse the available specs then we can see the following Standard A-Series VMs:

There is no 16 GB VM. You can’t select a 14 GB RAM A5 and increase the RAM. Instead, your choice is a Standard A4 with 14 GB RAM, a Standard A5 with 14 GB RAM, or Standard A6 with 28 GB RAM. You need 4 cores, so that reduces the options to the A4 (8 cores) or the A6 (4 cores). The A4 costs €0.6072/hour to run, and the A6 costs €0.506/hour to run. So, the VM with the higher model number (A6) offers the 16 GB RAM (actually 28 GB) and 4 cores, and it does so at the lower price.

The above example teaches you to look beyond the apparent boundary. There’s more … Look at the pricing of D-Series VMs. There are a few options there that might be applicable … note that these VMs run on higher spec host CPUs (Intel Xeon) and have SSD-based temporary drives and offer “60% faster processors than the A-Series”. The D12 has 4 cores and 28 GB RAM and costs €0.506/hour – the same as the A6! But if you were flexible with RAM, you could have the D3 (4 cores, 14 GB RAM) for €0.4352/hour, saving around €53 per month.
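The selection process above is really just a filter followed by a price comparison, so here is a minimal Python sketch of it. The `VmSize` class, the `cheapest` helper, and the 744-hour month are my own assumptions for illustration; the specs and hourly rates are only the ones quoted in this post, so the list is nowhere near the full Azure catalogue.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VmSize:
    name: str
    cores: int
    ram_gb: int
    eur_per_hour: float


# Only the sizes whose spec and price are both quoted in this post.
SIZES = [
    VmSize("Standard A4", 8, 14, 0.6072),
    VmSize("Standard A6", 4, 28, 0.506),
    VmSize("D3", 4, 14, 0.4352),
    VmSize("D12", 4, 28, 0.506),
]

HOURS_PER_MONTH = 744  # assumption: a 31-day month, running 24 x 7


def cheapest(sizes, min_cores, min_ram_gb):
    """Return the lowest-priced size that meets both minimums, or None."""
    candidates = [
        s for s in sizes if s.cores >= min_cores and s.ram_gb >= min_ram_gb
    ]
    return min(candidates, key=lambda s: s.eur_per_hour, default=None)


def monthly_saving(expensive, cheap, hours=HOURS_PER_MONTH):
    """Difference in running cost between two sizes over one month."""
    return (expensive.eur_per_hour - cheap.eur_per_hour) * hours


strict = cheapest(SIZES, min_cores=4, min_ram_gb=16)    # must have 16 GB or more
flexible = cheapest(SIZES, min_cores=4, min_ram_gb=14)  # can live with 14 GB

print(strict.name, strict.eur_per_hour)
print(flexible.name, flexible.eur_per_hour)
print(f"Saving: EUR {monthly_saving(strict, flexible):.2f}/month")
```

When two sizes cost the same, as the A6 and D12 do here, `min` simply returns the first one in the list, so the price alone will not tell you that the D12 has the faster host CPU and the SSD temporary drive.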

After that, you now add disks. Windows VMs from the Marketplace (template library) come with a 127 GB C: drive and you add multiple data disks (up to 1 TB each) to add data storage capacity and IOPS.

Born-in-the-Cloud

On more than one occasion I’ve been asked to price up machines with 32 GB or 64 GB RAM in Azure. As you’ve now learned, no such thing exists. And at the costs you’ve seen, it would be hard to argue that the cloud is competitive with on-premises solutions where memory costs have been falling over the years – disk is usually the big bottleneck now, in my experience, because people are still hung up on ridiculously priced SANs.

Whether you’re in Azure, AWS, or Google, you should learn that the correct way forward is lots of smaller VMs working in unison. You don’t spec up 2 big load balanced VMs; instead you deploy a bunch of load balanced Standard A-Series or D/DS/DV2 VMs with auto-scaling turned on – this powers up enough VMs to maintain HA and to service workloads at an acceptable rate, and powers down VMs where possible to save money (you pay for what is running).

Other Considerations

Keep in mind that the following are also controlled by the VM spec:

- Quantity of data disks

- Maximum number of NICs (should normally only affect virtual network appliances)

Modifying Specs

We’ll keep things simple: you can change the spec of a VM within its “family” (what the host is capable of). So you can move from a Basic A1 to a Standard A7 with a few clicks and a VM reboot. But moving to a D-Series VM is trickier – you need to delete the VM while keeping the disks, and then create a new VM that is attached to the existing disks.

EDIT:

Make sure you read this detailed post by Samir Farhat on resizing Azure VMs.

The Different Azure Virtual Machine Types

This post is a part of a series:

- Understanding Microsoft’s Explanation of Azure VM Specs

- The Different Azure Virtual Machine Types (this post)

- Picking an Azure Virtual Machine Tier

- Azure Virtual Machine Specs

“Just give me a normal virtual machine” … AAAARGGHHH!

Deploying a type of virtual machine is like selecting a type of server. It is tuned for certain types of performance, so you need to understand your workload before you deploy a virtual machine. Here’s a breakdown of your options:

A-Series

The A-Series virtual machines are, for the most part, hosted on servers with AMD Opteron 4171 HE 2.1 GHz CPUs. There are two tiers of A-Series virtual machine, Basic and Standard. These are what I would call “normal” machines, intended for your everyday workload. They are also perfect for scale-out jobs, where the emphasis is on lots of small and affordable machines.

A-Series Compute Intensive

These are a set of VMs that run on hosts with Intel Xeon E5-2670 2.6 GHz CPUs. The VMs offer more RAM (up to 112 GB) than the normal A-Series VMs. These machines are good for CPU intensive workloads like HPC, simulations, or video encoding.

A-Series Network Optimized

These VMs are similar to the A-Series Compute Intensive machines, except there is an additional 40 Gbps Infiniband NIC that offers low latency and low CPU impact RDMA networking. These machines are ideal for the same scenarios as the Compute Intensive machines, but where RDMA networking is also required.

D-Series

The D-Series machines are based on hosts that offer Intel Xeon E5-2660 2.2 GHz CPUs (60% faster processors than the normal A-series). The big feature of these VMs is that the D: drive, the temporary & paging drive, is stored on an SSD drive that is local to the host. Data disks are still stored on standard shared HDD storage; the data disks still run at the same 500 IOPS per disk as a Standard tier A-Series VM.

D-Series VMs offer really fast temporary storage. So if you need a fast disk-based cache or paging file then this is the machine for you.

Dv2-Series

This is an improvement on the existing D-Series virtual machines, based on hosts with customised Intel Xeon E5-2673 v3 2.4 GHz CPUs, that can reach 3.2 GHz using Intel Turbo Boost Technology 2.0. Microsoft claims speeds are 35% faster than the D-Series VMs.

DS-Series

The DS-Series is a modification of the D-Series VMs. The temporary drive continues to be on local SSD storage, but data disks are stored on shared SSD Premium Storage. This offers high throughput, low latency, and high IOPS (at a cost) for data storage and access.

G-Series

These are the Goliath virtual machines, offering huge amounts of memory per virtual CPU. The biggest machine currently offers 448 GB RAM. The hosts are based on a 2.0 GHz Intel Xeon E5-2698B v3 CPU. If you need a lot of memory, maybe for caching a database in RAM, then these very expensive VMs are your choice.

GS-Series

The GS-Series/G-Series relationship is similar to the D/DS one. The GS-Series takes the G series and replaces standard shared storage with shared SSD Premium Storage.

N-Series

These VMs are not available at the time of writing – to be launched in preview “within the next few months”. The N-Series VMs are based on hosts with NVIDIA GPU (K80 and M60 will be supported) capabilities, for compute and graphics-intensive workloads. The hosts offer NVIDIA Tesla Accelerated Computing Platform as well as NVIDIA GRID 2.0 technology. N-Series VMs will also feature Infiniband RDMA networking (like Network Intensive A-Series VMs).

Examples

I need to run a few machines that will be domain controllers, file servers and database servers for a small/medium enterprise. The ideal machines are the A-Series, and I’ll select Basic or Standard tier VMs depending on Azure feature requirements.

I’m going to deploy a database server that requires a fast disk-based cache. The database will only require 2000 IOPS. In this case, I’ll select a D- or a DV2-Series VM, depending on CPU requirements. The SSD-based temporary drive is great for non-persistent caching, and I can deploy 4 or more data disks (4 x 500 IOPS) to get the required 2000 IOPS for the database files.
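The “4 x 500 IOPS” sum generalises to a ceiling division. A tiny Python sketch, assuming the 500 IOPS per standard data disk figure quoted earlier in this post (the function name is mine):

```python
import math

IOPS_PER_STANDARD_DISK = 500  # Standard tier data disk figure quoted in this post


def data_disks_needed(required_iops, iops_per_disk=IOPS_PER_STANDARD_DISK):
    """Smallest number of data disks whose combined IOPS meets the target."""
    if required_iops <= 0:
        return 0
    return math.ceil(required_iops / iops_per_disk)


print(data_disks_needed(2000))  # the database example above
print(data_disks_needed(2600))  # a target that is not a multiple of 500
```

Remember that the VM spec caps the quantity of data disks, so check the answer against the size you picked before you commit to it.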

An OLTP database is required. It needs super fast database queries that even SSD cannot keep up with. Well, I’m probably going to deploy SQL 2014 (using the “Hekaton” feature) in a G-Series VM, where there’s enough memory to store indexes and tables in RAM.

I need really fast storage for terabytes of data. Aggregating disks with 500 IOPS each won’t be enough because I need faster throughput and lower latency. I need a VM that can use shared SSD-based Premium Storage, so I can use either a DS-Series VM or a GS-Series VM, depending on my CPU-to-RAM requirements.

A university is building a VM-based HPC cluster to perform scaled-out computations on cancer research or whale song analysis. They need fast networking and extreme compute power. At the time of writing this article, the Network Intensive Standard A-Series VMs are suitable: Xeon processors for compute and Infiniband networking for CPU-efficient, low latency, and high throughput data transfers. In a few months, the N-Series VMs will be better suited thanks to the computational power of their GPUs.