Speakers: Elden Christensen, Hector Linares, Jose Barreto, and Brian Matthew (last two are in the front row at least)

4:12 SSDs in a 60-drive JBOD.

Elden kicks off. He owns Failover Clustering in Windows Server.

New Approach To Storage

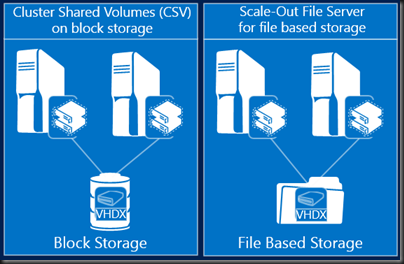

- File based storage: high performance SMB protocol for Hyper-V storage over Ethernet networks. In addition: the Scale-Out File Server (SOFS) to make SMB HA with transparent failover. SMB is the best way to do Hyper-V storage, even with a backend SAN.

- Storage Spaces: Cost-effective business critical storage

Enterprise Storage Management Scenarios with SC 2012 R2

Summary: FC SAN is not forgotten. We can now fully manage an FC SAN from System Center via SMI-S, including zoning. And the enhancements in WS2012 such as TRIM, UNMAP, and ODX offer great value.

Hector, Storage PM in VMM, comes up to demo.

Demo: SCVMM

Into the Fabric view of the VMM console. Fibre Channel Fabrics is added to Providers under Storage. He browses to VMs and Services and expands an already deployed 1 tier service with 2 VMs. Opens the Service Template in the designer. Goes into the machine tier template. There we see that FC is surfaced in the VM template. It can dynamically assign or statically assign FC WWNs. There is a concept of fabric classification, e.g. production, test, etc. That way, Intelligent Placement can find hosts with the right FC fabric and put VMs there automatically for you.

Opens a powered off VM in a service. 2 vHBAs. We can see the mapped Hyper-V virtual SAN, and the 4 WWNs (for seamless Live Migration). In Storage he clicks Add Fibre Channel Array. Opens a Create New Zone dialog. Can select storage array and FC fabric and the zoning is done. No need to open the SAN console. Can create a LUN, unmask it at the service tier …. in other words provision a LUN to 64 VMs (if you want) in a service tier with just a couple of mouse clicks … in the VMM console.

In the host properties, we see the physical HBAs. You can assign virtual SANs to the HBAs. Seems to offer more abstraction than the bare Hyper-V solution – but I’d need a €50K SAN and rack space to test

So instead of just adding vHBA support, they’ve given us end-to-end deployment and configuration.

Requirement: SMI-S provider for the FC SAN.

Demo: ODX

Without ODX, the BITS-based VM deployment from a template is only 3% done after 30 seconds. Using the same setup but with ODX, the entire VM is deployed and customized much more quickly: in just over 2 minutes the VM has started up.

Back to Elden

The Road Ahead

WS2012 R2 is cloud optimized … short time frame since last release so they went with a focused approach to make the most of the time:

- Private clouds

- Hosted clouds

- Cloud Service Providers

Focus on capex and opex costs. Storage and availability costs

IaaS Vision

- Dramatically lowering the costs and effort of delivering IaaS storage services

- Disaggregated compute and storage: independently manage and scale each layer. Easier maintenance and upgrade.

- Industry standard servers, networking and storage: inexpensive networks. inexpensive shared JBOD storage. Get rid of the fear of growth and investment.

SMB is the vision, not iSCSI/FC, although they got great investments in WS2012 and SC2012 R2.

Storage Management Pillars

Storage Management API (SM-API)

VMM + SOFS & Storage Spaces

- Capacity management: pool/volume/file share classification. File share ACL. VM workload deployment to file shares.

- SOFS deployment: bare metal deployment of file server and SOFS.

- Spaces provisioning

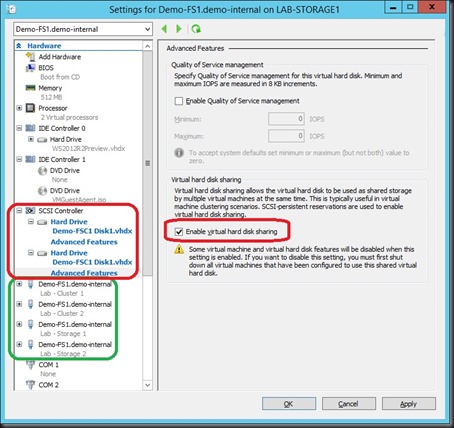

Guest Clustering With Shared VHDX

See yesterday’s post.

iSCSI Target

- Uses VHDX instead of VHD. Can import VHD files, but not create new ones. Can provision LUNs of up to 64 TB and dynamically resize them.

- SMI-S support built in for standards based management, VMM.

- Can now manage an iSCSI cluster using SCVMM

Back to Hector …

Demo: SCVMM

Me: You should realise by now that System Center and Windows Server are developed as a unit and work best together.

He creates a Physical Computer Profile. Can create a VM host (Hyper-V) or file server. The model is limited to that now, but later VMM could be extended to deploy other kinds of physical server in the data centre.

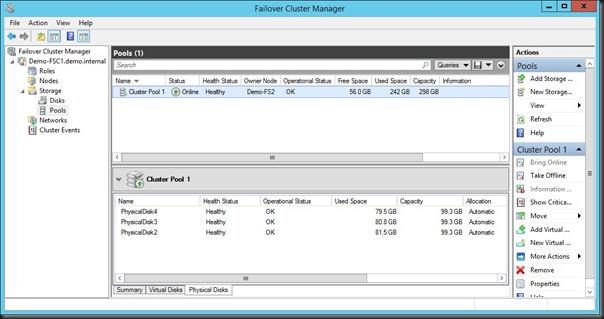

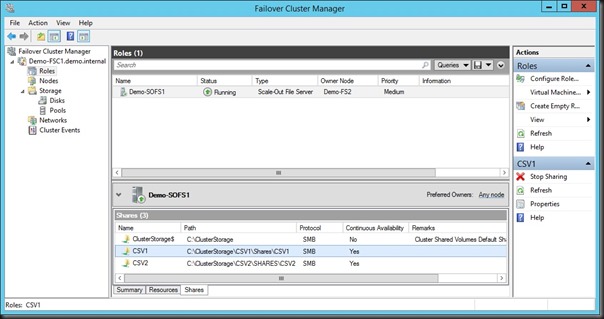

Hector deploys a clustered file server. You can use an existing machine (VMM enables the roles and file shares on the existing OS) OR provision a bare metal machine (OS, cluster, etc, all done by VMM). He provisions the entire server, VMM provisions the storage space/virtual disk/CSV, and then a file share on a selected Storage Pool with a classification for the specific file share.

Now he edits the properties of a Hyper-V cluster, selects the share, and VMM does all the ACL work.

Basically, a few mouse clicks in VMM and an entire SOFS is built, configured, shared, and connected. No logging into the SOFS nodes at all. Only need to touch them to rack, power, network, and set BMC IP/password.

SMB Direct

- 50% improvement for small IO workloads with SMB Direct (RDMA) in WS2012 R2.

- Increased performance for 8K IOPS

Optimized SOFS Rebalancing

- SOFS clients are now redirected to the “best” node for access

- Avoids unnecessary redirection

- Driven by ownership of CSV

- SMB connections are managed by share instead of per file server.

- Dynamically moves as CSV volume ownership changes … clustering balances CSV automatically.

- No admin action.
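The rebalancing behaviour in the list above can be pictured with a tiny model. This is only a sketch to show the idea of "connect to whoever owns the CSV behind the share"; the node names, share names, and functions are all hypothetical and are not a Windows or clustering API.

```python
# Illustrative model only: every name here is made up for the example.
csv_owner = {"CSV1": "FS1", "CSV2": "FS2"}        # CSV volume -> owning cluster node
share_volume = {"VMs1": "CSV1", "VMs2": "CSV2"}   # file share -> CSV volume behind it


def best_node(share):
    """Return the node a client should be connected to for this share."""
    return csv_owner[share_volume[share]]


def move_csv(volume, new_owner):
    """Model the cluster moving ownership of a CSV to another node."""
    csv_owner[volume] = new_owner


before = best_node("VMs1")
move_csv("CSV1", "FS2")      # the cluster rebalances CSV ownership
after = best_node("VMs1")    # the client connection follows, per share
print(before, "->", after)
```

Because the lookup is per share rather than per file server, one client can end up talking to different nodes for different shares.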

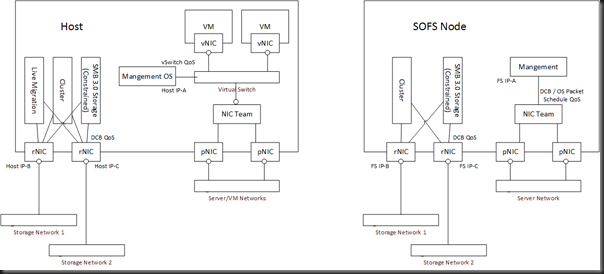

Hyper-V over SMB

Enables SMB Multichannel (more than 1 NIC) and Direct (RDMA – speed). Lots of bandwidth and low latency. Vacate a host really quickly. Don’t fear those 1 TB RAM VMs

SMB Bandwidth Management

We now have 3 QoS categories for SMB:

- Default – normal host storage

- VirtualMachine – VM accessing SMB storage

- LiveMigration – Host doing LM

Gives you granular control over converged networks/fabrics because 1 category of SMB might be more important than others.
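A rough way to think about the three categories: each one can carry its own bandwidth cap, so a Live Migration cannot starve VM storage traffic on a converged link. The sketch below is a toy model with assumed numbers (a 10 Gbps link and a 500 MB/s Live Migration cap); it is not how Windows implements the limit.

```python
# Toy model with assumed figures; not the Windows implementation.
LINK_BYTES_PER_SEC = 1_250_000_000   # assumed 10 Gbps converged link

# Per-category caps in bytes per second; None means uncapped.
limits = {
    "Default": None,
    "VirtualMachine": None,
    "LiveMigration": 500_000_000,    # assumed cap for the example
}


def granted(category, demand):
    """Bandwidth a category gets when it asks for `demand` bytes per second."""
    limit = limits[category]
    return demand if limit is None else min(demand, limit)


lm = granted("LiveMigration", 1_200_000_000)   # LM tries to use almost the whole link
vm_headroom = LINK_BYTES_PER_SEC - lm          # what is left for everything else
print(lm, vm_headroom)
```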

Storage QoS

Can set Maximum IOPS and Minimum IOPS alerts per VHDX. Cap IOPS per virtual hard disk, and get alerts when virtual hard disks aren’t getting enough bandwidth – could lead to auto LM to another better host.
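To make the maximum/minimum distinction concrete, here is a minimal sketch of the logic as I understand it from the session: the maximum is a hard cap, the minimum only raises an alert. The function and its parameters are hypothetical, and the rule that an alert fires only when the disk actually wanted at least the minimum is my assumption.

```python
def apply_qos(demanded_iops, available_iops, max_iops=None, min_iops=None):
    """Return (delivered_iops, alert) for one virtual hard disk.

    max_iops caps what the disk may consume; min_iops never reserves
    anything, it only flags a disk that is being starved.
    """
    delivered = min(demanded_iops, available_iops)
    if max_iops is not None:
        delivered = min(delivered, max_iops)
    # Assumption: an idle disk that asks for less than the minimum is not starved.
    alert = (
        min_iops is not None
        and demanded_iops >= min_iops
        and delivered < min_iops
    )
    return delivered, alert


print(apply_qos(5000, 10000, max_iops=1000))   # noisy neighbour gets capped
print(apply_qos(800, 300, min_iops=500))       # starved disk raises an alert
```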

Jose comes up …

Demo:

Has a 2 node SOFS. 1 client: a SQL server. Monitoring via Perfmon, and both the SOFS nodes are getting balanced n/w utilization caused by that 1 SQL server. Proof of connection balancing. Can also see that the CSVs are balanced by the cluster.

Jose adds a 3rd file server to the SOFS cluster. It’s just an Add operation of an existing server that is physically connected to the SOFS storage. VMM adds roles, etc, and adds the server. After a few minutes the cluster is extended. The CSVs are rebalanced across all 3 nodes, and the client traffic is rebalanced too.

That demo was being done entirely with Hyper-V VMs and shared VHDX on a laptop.

Another demo: Kicks off an 8K IO workload. Single client talking to single server (48 SSDs in single mirrored space) and 3 InfiniBand NICs per server. Averaging nearly 600,000 IOPS, sometimes getting over that. Now he enables RAM caching. Now he gets nearly 1,000,000 IOPS. CPU becomes his bottleneck.

Nice timing: question on 32K IOs. That’s the next demo. RDMA loves large IO. 500,000 IOPS, but now the throughput is 16.5 GIGABYTES (not Gbps) per second. That’s 4 DVDs per second. No cheating: real usable data, going to a real file system, not 5Ks to raw disk as in some demo cheats.
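A quick sanity check of those numbers. IOPS times IO size gives throughput; the only assumptions here are that "32K" means 32 KiB and that a DVD is a 4.7 GB single-layer disc.

```python
def throughput_gb_per_sec(iops, io_size_kib):
    """Throughput in decimal gigabytes per second for a given IOPS and IO size."""
    return iops * io_size_kib * 1024 / 1e9


large = throughput_gb_per_sec(500_000, 32)   # the 32K demo
small = throughput_gb_per_sec(600_000, 8)    # the earlier 8K demo, for comparison
dvds_per_sec = large / 4.7                   # assumed 4.7 GB single-layer DVD

print(f"32K: {large:.2f} GB/s, 8K: {small:.2f} GB/s, DVDs/s: {dvds_per_sec:.1f}")
```

So 500,000 IOPS at 32K works out to roughly 16.4 GB/s, in line with the figure on screen, and about 3.5 DVDs every second. The 8K run moves far fewer bytes for more IOPS, which is why large IO is where RDMA throughput shines.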

Back to Elden …

Data Deduplication

Some enhancements:

- Dedup open VHD/VHDX files. Not supported with data VHD/VHDX. Works great for volumes that only store OS disks, e.g. VDI.

- Faster read/write of optimized files … in fact, faster than CSV Block Cache!!!!!

- Support for SOFS with CSV

The Dedup filter redirects read requests to the chunk store. Hyper-V does unbuffered IO that bypasses the cache. But Dedup does cache. So Hyper-V reads of deduped files are cached in RAM, and that’s why Dedup can speed up the boot storm.
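Why that helps a boot storm: lots of VMs built from the same image all ask for the same chunks, so after the first VM only the cache gets hit. A minimal sketch of the idea, with made-up chunk IDs and VM names; it models the caching effect only, not the real Dedup filter.

```python
def boot_storm(vm_disks, chunk_store):
    """Count how many reads actually reach the chunk store on disk."""
    cache = {}          # chunks already read once and held in RAM
    disk_reads = 0
    for chunks in vm_disks.values():
        for chunk_id in chunks:
            if chunk_id not in cache:
                disk_reads += 1
                cache[chunk_id] = chunk_store[chunk_id]
    return disk_reads


# Hypothetical data: three VDI VMs whose OS disks dedupe to the same two chunks.
chunk_store = {"c1": b"os-kernel", "c2": b"os-drivers"}
vm_disks = {"vm1": ["c1", "c2"], "vm2": ["c1", "c2"], "vm3": ["c1", "c2"]}

requested = sum(len(chunks) for chunks in vm_disks.values())
disk_reads = boot_storm(vm_disks, chunk_store)
print(requested, "reads requested,", disk_reads, "hit the disk")
```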

Demo: Dedup

A PM I don’t know takes the stage. This demo will be how Dedup optimizes the boot storm scenario. Starts up VMs… one collection is optimized and the other not. Has a tool to monitor boot up status. The deduped VMs start up more quickly.

Reduced Mean Time To Recovery

- Mirrored spaces rebuild: parallelized recovery

- Increased throughput during rebuilds.

Storage Spaces

See yesterday’s notes. They heatmap the data and automatically (don’t listen to block storage salesman BS) promote hot data and demote cold data through the 2 tiers configured in the virtual disk (SSD and HDD in storage space).
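The heatmap idea in miniature: count accesses per chunk of data, then keep the hottest chunks on the SSD tier and everything else on HDD. This is just a sketch of the concept with hypothetical extent names and a fixed SSD slot count; the real tiering engine's granularity and schedule are not modelled here.

```python
from collections import Counter


def place_extents(heat, ssd_slots):
    """Split extents into (ssd, hdd) sets, hottest first.

    Ties are broken by extent name so the result is deterministic.
    """
    ranked = sorted(heat, key=lambda extent: (-heat[extent], extent))
    return set(ranked[:ssd_slots]), set(ranked[ssd_slots:])


# Hypothetical access log: one entry per IO against an extent.
heat = Counter(["a", "a", "a", "b", "c", "c", "d"])
ssd, hdd = place_extents(heat, ssd_slots=2)
print("SSD:", sorted(ssd), "HDD:", sorted(hdd))
```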

Write-Back Cache: absorbs write spikes using SSD tier.

Brian Matthew takes the stage

Demo: Storage Spaces

See notes from yesterday

Back to Elden …

Summary