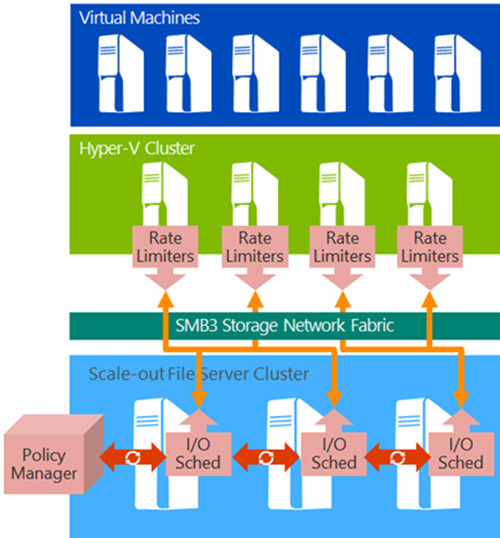

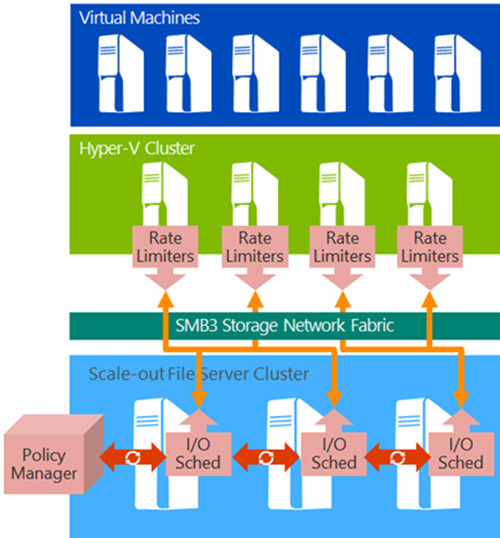

One of the bedrocks of virtualization or a cloud is the storage that the virtual machines (and services) are placed on. Guaranteeing storage performance is tricky; some niche storage manufacturers such as Tintrí (Irish for lightning) charge a premium for their products because they handle this via black-box intelligent management.

In the Microsoft cloud, we have started to move towards software-defined storage based on the Scale-Out File Server (SOFS) with SMB 3.0 connectivity. This is based on commodity hardware, and with WS2012 R2, we currently have a very basic form of storage performance management. We can set:

- Maximum IOPS per VHD/X: to cap storage performance

- Minimum IOPS per VHD/X: not enforced, purely informational
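To make the "maximum IOPS" idea concrete, here is a minimal sketch of a cap implemented as a token bucket. This is an illustration only and not how Hyper-V implements its limiter; the `IopsCap` class and `simulate` helper are hypothetical names, and time is passed in explicitly so the example is deterministic.

```python
class IopsCap:
    """Token-bucket limiter (hypothetical): at most `max_iops` I/Os per second."""

    def __init__(self, max_iops, now=0.0):
        self.max_iops = max_iops
        self.tokens = float(max_iops)  # start with one second of budget
        self.last = now

    def try_io(self, now):
        """Return True if one I/O may be issued at time `now` (in seconds)."""
        elapsed = now - self.last
        self.last = now
        # Refill in proportion to elapsed time, never beyond one second's worth.
        self.tokens = min(self.max_iops, self.tokens + elapsed * self.max_iops)
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False  # over the cap: the caller would have to queue or delay the I/O


def simulate(max_iops, attempts_per_second, seconds):
    """Count how many I/Os get through when a disk is hit at a steady rate."""
    cap = IopsCap(max_iops)
    issued = 0
    for i in range(attempts_per_second * seconds):
        now = i / attempts_per_second
        if cap.try_io(now):
            issued += 1
    return issued
```

A virtual disk that asks for less than its cap is never throttled, while one that asks for ten times its cap is held to roughly the cap plus the initial burst. Note that nothing here reserves a minimum, which matches the "informational only" behaviour described above.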

This all changes with vNext, where we get distributed storage QoS for SOFS deployments. No, you do not get this new feature with legacy storage system deployments.

A policy manager runs on the SOFS. Here you can set storage rules for:

- Tenants

- Virtual machines

- Virtual hard disks

Using a new protocol, MS-SQOS, the SOFS passes storage rule information back to the relevant hosts. Rate limiters on those hosts then enforce the rules according to the policies, which are set once, on the SOFS. No matter which host you move the VM to, the same rules apply.
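The "set once, enforced everywhere" model can be sketched in a few lines. This is a toy model under stated assumptions: it does not implement the MS-SQOS wire protocol, a plain method call stands in for the network exchange, and every name (`Policy`, `PolicyManager`, `Host`, `live_migrate`, the UNC path) is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    name: str
    min_iops: int
    max_iops: int


class PolicyManager:
    """Stands in for the policy manager on the SOFS: the one place rules live."""

    def __init__(self):
        self._policies = {}     # policy name -> Policy
        self._assignments = {}  # virtual hard disk path -> policy name

    def define(self, policy):
        self._policies[policy.name] = policy

    def assign(self, vhd, policy_name):
        self._assignments[vhd] = policy_name

    def rule_for(self, vhd):
        """Return the Policy assigned to a disk, or None if it has no rule."""
        return self._policies.get(self._assignments.get(vhd))


class Host:
    """Stands in for a Hyper-V host that enforces whatever the manager says."""

    def __init__(self, name, manager):
        self.name = name
        self.manager = manager
        self.limits = {}  # vhd -> Policy currently being enforced on this host

    def attach(self, vhd):
        # In the real system this information arrives from the SOFS;
        # here it is simply looked up.
        self.limits[vhd] = self.manager.rule_for(vhd)

    def detach(self, vhd):
        self.limits.pop(vhd, None)


def live_migrate(vhd, source, target):
    """Move a disk's VM between hosts; the rule follows because it lives centrally."""
    source.detach(vhd)
    target.attach(vhd)


manager = PolicyManager()
manager.define(Policy("Gold", min_iops=500, max_iops=5000))
manager.assign(r"\\sofs\share\vm01.vhdx", "Gold")

host1 = Host("host1", manager)
host2 = Host("host2", manager)
host1.attach(r"\\sofs\share\vm01.vhdx")
live_migrate(r"\\sofs\share\vm01.vhdx", host1, host2)
```

The design point is that hosts hold no policy definitions of their own, so there is nothing to reconfigure when a VM moves.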

The result is that you can:

- Guarantee performance: Important in a service-centric world

- Limit damage: Cap those bad boys that want everything to themselves

- Create a price banding system: Similar to Azure, you can set price bands where there are different storage performance capabilities

- Offer fairly balanced performance: Every machine gets a fair share of storage bandwidth
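One way to picture how guarantees, caps, and fair sharing could fit together is the allocation sketch below. It is an assumption about a reasonable policy, not a description of the algorithm Microsoft uses: every flow first gets its minimum, then the leftover capacity is split equally among flows that are still below their maximum. The `allocate` function and the flow names are hypothetical.

```python
def allocate(capacity, flows):
    """Split `capacity` IOPS across flows.

    flows: dict of name -> (min_iops, max_iops).
    Returns a dict of name -> allocated IOPS.
    """
    # Step 1: grant every flow its guaranteed minimum.
    alloc = {name: float(lo) for name, (lo, hi) in flows.items()}
    remaining = capacity - sum(alloc.values())
    if remaining < 0:
        raise ValueError("minimums exceed capacity; guarantees cannot be met")

    # Step 2: share what is left equally among flows still below their cap.
    active = {n for n, (lo, hi) in flows.items() if alloc[n] < hi}
    while remaining > 1e-9 and active:
        share = remaining / len(active)
        for n in sorted(active):
            grant = min(share, flows[n][1] - alloc[n])
            alloc[n] += grant
            remaining -= grant
        # Flows that hit their maximum drop out; their unused share is re-split.
        active = {n for n in active if alloc[n] < flows[n][1] - 1e-9}
    return alloc


shares = allocate(1000, {
    "bronze-vm": (100, 200),   # low price band: small guarantee, tight cap
    "gold-vm": (100, 1000),    # high price band: same guarantee, generous cap
    "scratch-vm": (0, 1000),   # no guarantee at all
})
```

In this example the capped machine stops at its maximum and the capacity it could not use flows to the other two, which is the "limit damage" and "fair share" behaviour from the list above expressed as arithmetic.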

At this point, all management is via PowerShell, but we’ll have to wait and see what System Center brings forth for the larger installs.