Here are my notes from the recording of Microsoft’s New Windows Server Containers, presented by Taylor Brown and Arno Mihm. IMO, this is an unusual tech because it is focused on DevOps – it spans both IT pro and dev worlds. FYI, it took me twice as long as normal to get through this video. This is new stuff and it is heavy going.

Objectives

- You will know enough about containers to be dangerous 🙂

- Learn where containers are the right fit

- Understand what Microsoft is doing with containers in Windows Server 2016.

Purpose of Containers

- We used to deploy 1 application per OS per physical server. VERY slow to deploy.

- Then we got more agility and cost efficiencies by running 1 application per VM, with many VMs per physical server. This is faster than physical deployment, but developers still wait on VMs to deploy.

Containers move towards a “many applications per server” model, where that server is either physical or virtual. This is the fastest way to deploy applications.

Container Ecosystem

An operating system virtualization layer is placed onto the OS (physical or virtual) of the machine that will run the containers. This lives between the user and kernel modes, creating boundaries in which you can run an application. Many of these applications can run side by side without impacting each other. Images, containing functionality, are run on top of the OS and create aggregations of functionality. An image repository enables image sharing and reuse.

When you create a container, a sandbox area is created to capture writes; the original image is read only. The Windows container sees Windows and thinks it’s regular Windows. A framework is installed into the container, and this write is only stored in the sandbox, not the original image. The sandbox contents can be preserved, turning the sandbox into a new read-only image, which can be shared in the repository. When you deploy this new image as a new container, it contains the framework and has the same view of Windows beneath, and the container has a new empty sandbox to redirect writes to.

You might install an application into this new container; the sandbox captures the associated writes. Once again, you can preserve the modified sandbox as an image in the repository.

What you get is layered images in a repository, which are possible to deploy independently from each other, but with the obvious pre-requisites. This creates very granular reuse of the individual layers, e.g. the framework image can be deployed over and over into new containers.
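The layering described above can be modelled in a few lines of Python. This is only a conceptual sketch, not how Windows or Docker implement it: the `Image` and `Container` classes, the file paths and the `commit` method name are all made up for illustration, and file deletions are not modelled.

```python
from typing import Dict, Optional


class Image:
    """A read-only layer that stores only its differences from its parent."""

    def __init__(self, name: str, files: Dict[str, str], parent: Optional["Image"] = None):
        self.name = name
        self._files = dict(files)  # private copy; never modified after creation
        self.parent = parent

    def read(self, path: str) -> Optional[str]:
        # Look in this layer first, then fall through to the layers beneath.
        if path in self._files:
            return self._files[path]
        return self.parent.read(path) if self.parent else None


class Container:
    """A running view of an image plus an empty sandbox that captures writes."""

    def __init__(self, image: Image):
        self.image = image
        self.sandbox: Dict[str, str] = {}

    def write(self, path: str, content: str) -> None:
        self.sandbox[path] = content  # the image underneath is never touched

    def read(self, path: str) -> Optional[str]:
        if path in self.sandbox:
            return self.sandbox[path]
        return self.image.read(path)

    def commit(self, name: str) -> Image:
        # Preserve the sandbox as a new read-only layer on top of the image.
        return Image(name, self.sandbox, parent=self.image)


# Hypothetical file names, purely for illustration.
base = Image("windowsservercore", {"C:/Windows/system.ini": "os"})

c1 = Container(base)
c1.write("C:/framework/runtime.dll", "framework v1")
framework = c1.commit("framework")  # sandbox becomes a reusable image

c2 = Container(framework)
c2.write("C:/app/app.exe", "my app")
app = c2.commit("app")

fresh = Container(framework)  # new container: same layers, empty sandbox
```

Reading through `app` finds the application, the framework and the OS files, while `base` and `framework` stay exactly as they were, which is what makes the framework layer reusable across many containers.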

Demo:

A VM is running Docker, the tool for managing containers. A Windows machine has the Docker management utility installed. There is a command-line UI.

docker images < lists the images in the repository.

There is an image called windowsservercore. He runs:

docker run --rm -it windowsservercore cmd

Note:

- --rm (two hyphens): Remove the sandbox afterwards

- -it: give me an interactive console

- cmd: the program he wants the container to run

A container with a new view of Windows starts up a few seconds later and a command prompt (the desired program) appears. This is much faster than deploying a Windows guest OS VM on any hypervisor. He starts a second one. On the first, he deletes files from C: and deletes HKLM from the registry, and the host machine and second container are unaffected – all changes are written to the sandbox of the first container. Closing the command prompt of the first container erases all traces of it (--rm).

Development Process Using Containers

The image repository can be local to a machine (local repository) or shared to the company (central repository).

First step: what application framework is required for the project … .NET, Node.js, PHP, etc.? Go to the repository and pull that image over; any dependencies are described in the image and are deployed automatically to the new container. So if I deploy .NET, a Windows Server image will be deployed automatically as a dependency.
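As a rough illustration of that automatic dependency deployment, here is a minimal Python sketch. The repository contents, the `dotnet` image name and the `pull` helper are hypothetical stand-ins, not the real Docker API; the point is only that asking for one image brings along every image beneath it, and that layers already present are not fetched again.

```python
from typing import Dict, List, Optional

# Hypothetical central repository: image name -> the image it depends on
# (None marks a base OS image with no dependency).
CENTRAL_REPO: Dict[str, Optional[str]] = {
    "windowsservercore": None,
    "dotnet": "windowsservercore",
    "demoapp": "dotnet",
}


def pull(name: str, repo: Dict[str, Optional[str]], local: List[str]) -> List[str]:
    """Return the images fetched, base layer first, skipping ones already local."""
    chain: List[str] = []
    current: Optional[str] = name
    while current is not None:
        if current not in repo:
            raise KeyError(f"image not found in repository: {current}")
        chain.append(current)
        current = repo[current]
    # Dependencies must land before the images that build on them.
    fetched = [img for img in reversed(chain) if img not in local]
    local.extend(fetched)
    return fetched


local_repo: List[str] = []
first = pull("dotnet", CENTRAL_REPO, local_repo)
second = pull("demoapp", CENTRAL_REPO, local_repo)
print(first)   # ['windowsservercore', 'dotnet']
print(second)  # ['demoapp']
```

The second pull only fetches the application layer because the OS and framework layers are already in the local repository.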

The coding process is the same as usual for the devs, with the same tools as before. The finished program is installed into the container, and a new “immutable image” is created from it. You can allow selected people or anyone to use this image in their containers, and the application is now very easy and quick to deploy; deploying the application image to a container automatically deploys the dependencies, e.g. the runtime and the OS image. Remember – future containers can be deployed with --rm, making it easy to remove and reset – great for stateless deployments such as unit testing. Every deployment of this application will be identical – great for distributed testing or operations deployment.

You can run versions of images, meaning that it’s easy to roll back a service to a previous version if there’s an issue.

Demo:

There is a simple “hello world” program installed in a container. There is a Dockerfile, which is a text file with a set of directions for building a new container image.

The prereqs are listed with FROM; here you see the previously mentioned windowsservercore image.

WORKDIR sets the baseline path in the OS for installing the program, in this case, the root of C:.

Then commands are run to install the software, and then run (what will run by default when the resulting container starts) the software. As you can see, this is a pretty simple example.

He then runs:

docker build -t demoapp:1 < which creates an image called demoapp with a version of 1. -t tags the image.

Running docker images shows the new image in the repository. Executing the below will deploy the required windowsservercore image and the version 1 demoapp image, and execute demoapp.exe – no need to specify the command because the Dockerfile specified a default executable.

docker run --rm -it demoapp:1

He goes back to the demoapp source code, compiles it and installs it into a container. He rebuilds it as version 2:

docker build -t demoapp:2

And then he runs version 2 of the app:

docker run --rm -it demoapp:2

And it fails – that’s because he deliberately put a bug in the code – a missing dependent DLL from Visual Studio. It’s easy to blow the version 2 container away (--rm) and deploy version 1 in a few seconds.
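To make the rollback idea concrete, here is a small Python sketch. It assumes a made-up `Service` helper and a `health` table standing in for "does the container start?"; none of this is a Docker feature, it just shows why keeping older tagged images around makes falling back trivial.

```python
from typing import Dict, List


class Service:
    """Tracks which tagged image version a service is running (hypothetical helper)."""

    def __init__(self, image: str):
        self.image = image
        self.history: List[int] = []  # versions that deployed successfully

    def deploy(self, version: int, healthy: Dict[int, bool]) -> str:
        """Try the requested version; fall back to the last good one if it fails."""
        if healthy.get(version, False):
            self.history.append(version)
            return f"{self.image}:{version}"
        if not self.history:
            raise RuntimeError(f"{self.image}:{version} failed and there is no earlier version")
        # The failed container is discarded; the older image is still in the repository.
        return f"{self.image}:{self.history[-1]}"


# Stand-in for the demo: version 2 is missing a DLL, so it does not start.
health = {1: True, 2: False}
svc = Service("demoapp")
running_v1 = svc.deploy(1, health)
after_v2 = svc.deploy(2, health)
print(running_v1)  # demoapp:1
print(after_v2)    # demoapp:1
```

Because images are immutable, the version 1 tag still points at exactly what was tested before, so the fallback is a redeploy rather than a repair.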

What Containers Offer

- Very fast code iteration: You’re using the same code in dev/test, unit test, pilot and production.

- There are container resource controls that we are used to: CPU, bandwidth, IOPS, etc. This enables co-hosting of applications in a single OS with predictable levels of performance (SLAs).

- Rapid deployment: layering of containers for automated dependency deployment, and the sheer speed of containers means applications will go from dev to production very quickly, and rollback is also near instant. Infrastructure no longer slows down deployment or change.

- Defined state separation: Each layer is immutable and isolated from the layers above and below it in the container. Each layer is just differences.

- Immutability: You get predictable functionality and behaviour from each layer for every deployment.

Things that Containers are Ideal For

- Distributed compute

- Databases: The database service can be in a container, with the data outside the container.

- Web

- Scale-out

- Tasks

Note that you’ll have to store data in and access it from somewhere that is persistent.

Container Operating System Environments

- Nano Server: Highly optimized, and for born-in-the-cloud applications.

- Server Core: Highly compatible, and for traditional applications.

Microsoft-Provided Runtimes

Two will be provided by Microsoft:

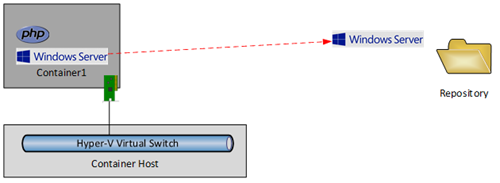

- Windows Server Container: Hosting, highly automated, secure, scalable & elastic, efficient, trusted multi-tenancy. This uses a shared-kernel model – the containers run on the same machine OS.

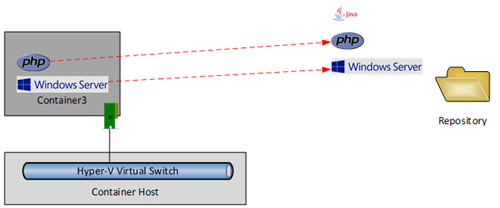

- Hyper-V Container: Shared hosting, regulate workloads, highly automated, secure, scalable and elastic, efficient, public multi-tenancy. Containers are placed into a “Hyper-V partition wrap”, meaning that there is no sharing of the machine OS.

Both runtimes use the same image formats. Choosing one or the other is a deployment-time decision, with one flag making the difference.

Here’s how you can run both kinds of containers on a physical machine:

And you can run both kinds of containers in a virtual machine. Hyper-V containers can be run in a virtual machine that is running the Hyper-V role. The physical host must be running virtualization that supports virtualization of the VT instruction sets (ah, now things get interesting, eh?). The virtual machine is a Hyper-V host … hmm …

Choosing the Right Tools

You can run containers in:

- Azure

- On-premises

- With a service provider

The container technologies can be:

- Windows Server Containers

- Linux: You can do this right now in Azure

Management tools:

- PowerShell support will be coming

- Docker

- Others

I think I read previously that System Center would add support. Visual Studio was demonstrated at Build recently. And lots of dev languages and runtimes are supported. Coders don’t have to write with new SDKs; what’s more important is that Azure Service Fabric will allow you to upload your code and it will handle the containers.

Virtual machines are not going away. They will be one deployment option. Sometimes containers are the right choice, and sometimes VMs are. Note: you don’t join containers to AD. It’s a bit of a weird thing to do, because the containers are exact clones with duplicate SIDs. So you need to use a different form of authentication for services.

When can You Play With Containers?

- Preview of Windows Server Containers: coming this summer

- Preview of Hyper-V Containers: planned for this year

Containers will be in the final RTM of WS2016. You will be able to learn more on the Windows Server Containers site when content is added.

Demos

Taylor Brown, who ran all the demos, finished up the session with a series of demos.

docker history <name of image> < how was the image built – looks like the Dockerfile contents in reverse order. Note that passwords that are used in this file to install software appear to be legible in the image.

He tries to run a GUI tool from a container console – no joy. Instead, you can remote desktop into the container (get the IP of the container instance) and then run the tool in the Remote Desktop session. The tool run is Process Explorer.

If you run a system tool in the container, e.g. Process Explorer, then you only see things within the container. If you run a tool on the machine, then you have a global view of all processes.

If you run Task Manager, go to Details and add the session column, you can see which processes are owned by the host machine and which are owned by containers. Session 0 is the machine.

He runs docker run -it windowsservercore cmd < does not put in --rm, which means we want to keep the sandbox when the container is closed. Typing exit in the container’s CMD will end the container but the sandbox is kept.

Running docker ps -a shows the container ID and when the container was created/exited.

Running docker commit with the container ID and a name converts the sandbox into an image … all changes to the container are stored in the new image.

Other notes:

The IP of the container is injected in, and is not the result of a setup. A directory can be mapped into a container. This is how things like databases are split into stateless and stateful; the container runs the services and the database/config files are injected into the container. Maybe SMB 3.0 databases would be good here?

Questions

- How big are containers on the disk? The images are in the repository. There is no local copy – they are referred to over the network. The footprint of the container on the machine is the running state (memory, CPU, network, and sandbox), the size of which is dictated by your application.

- There is no plan to build HA tech into containers. Build HA into the application. Containers are stateless. Or you can deploy containers in HA VMs via Hyper-V.

- Is a full OS running in the container? They have a view of a full OS. The image of Core that Microsoft will ship is almost a full image of Windows … but remember that the image is referenced from the repository, not copied.

- Is this Server App-V? No. Conceptually at a really really high level they are similar, but Containers offer a much greater level of isolation and the cross-platform/cloud/runtime support is much greater too.

- Each container can have its own IP and MAC address. It can use the Hyper-V virtual switch. NATing will also be possible as an alternative at the virtual switch. Lots of other virtualization features are available too.

- Behind the scenes, the image is an exploded set of files in the repository. No container can peek into the directory of another container.

- Microsoft are still looking at which of their own products will be supported in Containers. High priority examples are SQL and IIS.

- Memory scale: It depends on the services/applications running in the containers. There is some kind of memory de-duplication here too, reusing the common memory set across containers, and further optimizations will be introduced over time.

- There is work being done to make sure you pull down the right OS image for the OS on your machine.

- If you reboot a container host what happens? Container orchestration tools stop the containers on the host, and create new instances on other hosts. The application layer needs to deal with this. The containers stop/disappear from the original host during the patching/reboot – remember, they are stateless.

- SMB 3.0 is mentioned as a way to present stateful data to stateless containers.

- Microsoft is working with Docker and 3 containerization orchestration vendors: Docker Swarm, Kubernetes and Mesosphere.

- Coding: The bottom edge of Docker Engine has Linux drivers for compute, storage, and network. Microsoft is contributing Windows drivers. The upper levels of Docker Engine are common. The goal is to have common tooling to manage Windows Containers and Linux containers.

- Can you do some kind of IPC between containers? Networking is the main way to share data, instead of IPC.
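The host-reboot answer above can be pictured with a short Python sketch. The `drain_host` function, the host names and the "least loaded first" rule are my own assumptions for illustration; real orchestrators such as the ones named above make their own placement decisions.

```python
from typing import Dict, List


def drain_host(placement: Dict[str, List[str]], host: str) -> Dict[str, List[str]]:
    """Recreate every container from `host` on the remaining hosts, least loaded first."""
    others = {h: list(c) for h, c in placement.items() if h != host}
    if not others:
        raise RuntimeError("no other host available to take the containers")
    for container in placement.get(host, []):
        # Pick the host with the fewest containers; ties are broken by name.
        target = min(others, key=lambda h: (len(others[h]), h))
        others[target].append(container)  # a new instance; no state is carried over
    others[host] = []  # the drained host is now free to patch and reboot
    return others


# Hypothetical hosts and stateless web containers.
before = {"host-a": ["web-1", "web-2"], "host-b": ["web-3"], "host-c": []}
after = drain_host(before, "host-a")
print(after)
```

This only works because the containers are stateless: a new instance elsewhere is as good as the old one, provided the application layer can cope with the brief disappearance.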

Lesson: run your applications in normal VMs if:

- They are stateful and that state cannot be separated

- You cannot handle HA at the application layer

Personal Opinion

Containers are quite interesting, especially for a nerd like me that likes to understand how new techs like this work under the covers. Containers fit perfectly into the “treat them like cattle” model and therefore, in my opinion, have a small market of very large deployments of stateless applications. I could be wrong, but I don’t see Containers fitting into more normal situations. I expect Containers to power lots of public cloud task-based stuff. I can see large customers using it in the cloud, public or private. But it’s not a tech for SMEs or legacy apps. That’s why Hyper-V is important.

But … nested virtualization, not that it was specifically mentioned, oh that would be very interesting 🙂

I wonder how containers will be licensed and revealed via SKUs?