Speakers:

- Erin Chapple, General Manager Windows Server

- Chris Van Wesep, Director Product Marketing

Erin Chapple starts things off. Today they’ll talk about what’s new in Windows Server, what the future holds, and the hybrid/migration opportunities.

WS2016 Looking Back

Most cloud-ready OS:

- Built-in security: Protection of identity (Credential Guard), secure the virtualization platform (shielded VMs, vTPM), and built-in layers of security (VSM, etc)

- Azure-inspired infrastructure: Storage Spaces Direct, Network Controller, learnings from hyper-scale, affordable.

- Hybrid application platform: Support for containers, built-for-purpose OS, Azure Hybrid Benefit for SA/Azure transition

Some customer case studies come up. Rackspace used Shielded VMs, Nano Server for applications (woops!) for hosting. A “large investigative government agency” needed to preserve lots of seized data (PB+ per case). They used Storage Spaces Direct (S2D) on 8-node clusters, with data in VMs to isolate one investigation from another. biBERK used containers to deploy 22 apps on WS2016 Containers with Docker in less than 1 week.

The key for software-defined is the hardware. They leverage offloads so much that hardware must be more reliable. There is a Windows Server Software Defined Program (WSSD) and the site with all the info is http://docs.microsoft.com/en-us/windows-server/sddc.

Supporting You Wherever You Are

WS2016 is the basis of on-premises, Azure, and Azure Stack (hybrid). 80% of enterprises see themselves operating in a hybrid mode for the foreseeable future. 55% have a hybrid strategy in place as of a year ago. 87% are planning to integrate on-premises datacentres with public cloud.

Hybrid is not about a network connection. It’s about consistency right down to the API level: unified development, VMs, storage, data, identity, and much more.

Will Gries – Azure File Sync

This is a new hybrid service that is a part of Azure Files. Centralize storage in Azure Files, but without giving up the file server. You effectively cache data locally on file servers for fast local performance. The cloud enables sync between sites, centralized backup, and easy DR.

He starts a demo. The file sync agent is installed on a WS2016 file server, which is syncing to Azure. He proves this by changing & deleting things on Azure and it syncs to the cloud. It’s all near real time, using change notifications on the file server to ensure that sync happens very quickly. Cloud Tiering enables the “cache” feature. The greyed files with an O attribute have a size on disk of 0 bytes because they are stored in Azure. If he opens such a file, it’s recalled from Azure Files seamlessly. Files that support partial reads/writes can stream from Azure – he opens a video and we can see in the UI that it is streaming from Azure. In file properties, we can see that only the blocks needed by the stream have been downloaded.
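To make the tiering idea concrete, here is a toy model of a tiered file that starts with zero bytes on disk and pulls down only the blocks a read touches. It is a sketch of the concept as described in the demo, not the Azure File Sync implementation: the `TieredFile` class, the `BLOCK` size, and every method name are hypothetical.

```python
# Toy model of cloud tiering. NOT the Azure File Sync implementation:
# the class, the block size, and the method names are all hypothetical.
BLOCK = 4  # illustrative block size in bytes (tiny on purpose)


class TieredFile:
    def __init__(self, cloud_bytes: bytes):
        self.cloud = cloud_bytes   # full copy held "in Azure Files"
        self.local_blocks = {}     # block index -> bytes cached on the file server

    def size_on_disk(self) -> int:
        """Bytes actually held locally; 0 for a fully tiered file."""
        return sum(len(b) for b in self.local_blocks.values())

    def read(self, offset: int, length: int) -> bytes:
        """Return the requested range, recalling only the blocks it touches."""
        if length <= 0:
            return b""
        first = offset // BLOCK
        last = (offset + length - 1) // BLOCK
        for i in range(first, last + 1):
            if i not in self.local_blocks:
                # "Recall" this block from the cloud copy.
                self.local_blocks[i] = self.cloud[i * BLOCK:(i + 1) * BLOCK]
        data = b"".join(self.local_blocks[i] for i in range(first, last + 1))
        start = offset - first * BLOCK
        return data[start:start + length]


video = TieredFile(b"abcdefghijklmnop")
print(video.size_on_disk())   # nothing cached yet
print(video.read(5, 3))       # touches a single block
print(video.size_on_disk())   # only that block is now local
```

The point of the sketch is only the behaviour: a partial read grows the local footprint by the blocks it needs, rather than by the whole file.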

Back to Erin.

Windows Server Cadence

Industry is moving incredibly fast. Industries in that fast lane need server improvements faster. There will be two channels of Windows Server:

- Semi-annual channel. An opt-in for SA or Azure customers, releasing every spring/autumn. Each release is supported for 18 months, so you can choose to skip every second release. Build = approx year/month, e.g. 1709 will be released in month 10 of 2017.

- Long-term Servicing Channel: For everyone outside of SA/Azure or not wanting to upgrade every 6-12 months. Typical 5+5 years support program, and available in all channels. Name = Windows Server + Year.
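The naming and support arithmetic for the semi-annual channel can be sketched in a few lines. This assumes the four-digit name is YYMM in the 2000s and works at month granularity only; the function names are made up for illustration, and actual end-of-support dates come from Microsoft, not from this calculation.

```python
from datetime import date


def parse_release(version: str) -> date:
    """Turn a name like '1709' into its nominal year/month (assumes YYMM, 20xx)."""
    if len(version) != 4 or not version.isdigit():
        raise ValueError(f"expected four digits, got {version!r}")
    year, month = 2000 + int(version[:2]), int(version[2:])
    if not 1 <= month <= 12:
        raise ValueError(f"invalid month in {version!r}")
    return date(year, month, 1)


def add_months(start: date, months: int) -> date:
    """Add whole months to a date, returning the first day of the resulting month."""
    total = start.year * 12 + (start.month - 1) + months
    return date(total // 12, total % 12 + 1, 1)


nominal = parse_release("1709")      # nominal year/month from the name
released = date(2017, 10, 1)         # the notes say month 10 of 2017
print(nominal.strftime("%Y-%m"))
print(add_months(released, 18).strftime("%Y-%m"))  # approximate end of the 18-month window
```

Counting from the release month rather than the nominal month matters here, because the name is only an approximation of when the build ships.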

Many companies will use a mix of both channels, selecting the channel based on demands of an application/service.

Windows Server Insiders will give you a sneak peek of semi-annual channel releases.

The date of the next LTSC release is not announced, but it’s going to be after 2018.

Introducing Server Core to Semi-Annual Channel

Server Core is replacing Nano Server for infrastructure and VM roles. Nano Server adoption was very low in these areas. In 1709, Nano Server is completely focused on containers. It is much smaller for containers by stripping out the infrastructure pieces. Server Core should be a “soft landing” for moving applications from Nano Server. Server Core is the MS recommended choice for infrastructure roles.

Note by me: I will continue to recommend full installations for infrastructure roles. The full GUI is not in the semi-annual channel. So if you want rapid upgrades, you better learn some PowerShell to troubleshoot your networking and drivers/firmware.

What’s New in 1709

Hybrid Application platform and Modern Management

Jeff Woolsey

Jeff tells us that containers are the same journey that we went through with virtualization. Containers will happen, but they won’t kill virtualization – they work together. We’re at the beginning of the next 10 year journey with containers. Jeff says that cloud admins, hybrid admins, IT pros, must learn containerization.

Hybrid Application Platform

- Nano Server just wasn’t right for virtualization: drivers, installation, patching, etc. So they switched the focus entirely to containers to make it faster to deploy/update, and to get higher levels of density & performance.

- .NET Core 2.0 and SMB support was added for containers … allows containers to store data on SMB 3.0 storage.

- Linux containers with Hyper-V Isolation provide a cross-platform way to run all kinds of containers securely (each container runs a real Linux kernel in a Hyper-V child partition), along with Windows Subsystem for Linux. When Win10 added WSL, Microsoft wasn’t planning to do it for Windows Server. With Linux Containers, the case for Bash management on the host made this a viable option.

Telemetry shows that most people using Windows Server containers are choosing the Hyper-V model for security.

All of this is wrapped up in Modern Management.

Demo: Enabling Cloud Apps with Nano Server & Containers

This is the next generation P2V … moving applications (Docker Convert) from VMs to containers. In the demo, Jeff uses Docker to deploy a Hyper-V container in a container. It runs SQL Server & IIS. The Docker tools on GitHub converted the app to an image in less than 1 hour. Now the image is a container image which is easy to deploy. When running in a container, it uses a fraction of the resources that were used by VMs.

Next he deploys a Linux container image with Tomcat Server, on the same Windows Server host as the Windows container.

Nano Server

The base image for WS2016 Nano Server was 383 MB. In 1709 it is 78 MB. With .NET it went from 413 MB to 107 MB. Those are the compressed numbers.

Uncompressed: the base image went from 1.05 GB to 195 MB, and with .NET it went from 1.15 GB to 262 MB.
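To put those numbers in proportion, a quick calculation of the percentage reduction for each image. The sizes are the ones quoted in the session; converting GB to MB at 1024 MB per GB is my assumption, and the helper name is arbitrary.

```python
def reduction_pct(before_mb: float, after_mb: float) -> float:
    """Percentage by which the image shrank."""
    return (before_mb - after_mb) / before_mb * 100


MB_PER_GB = 1024  # assumption: the session did not say which convention was used

# (before, after) in MB, as quoted in the session
sizes = {
    "base, compressed": (383, 78),
    ".NET, compressed": (413, 107),
    "base, uncompressed": (1.05 * MB_PER_GB, 195),
    ".NET, uncompressed": (1.15 * MB_PER_GB, 262),
}

for label, (before, after) in sizes.items():
    print(f"{label}: {before:.0f} MB -> {after:.0f} MB "
          f"({reduction_pct(before, after):.1f}% smaller)")
```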

Management Re-Imagined

- This is the next generation of “in-box” tooling.

- Simplified, integrated and secure.

- Extensible

Required for Server Core in the real world. The UI is HTML5 and touch friendly. It has to manage the h/w, the local VMs, and VMs in Azure.

Today we use Task Manager, MMC-based tools like Hyper-V Manager, Perfmon, Device Manager, etc, CMD.EXE, PowerShell, Server Manager, etc. Jeff mentions lots more tools.

Project Honolulu

An HTML5-based touch-friendly UI. It’s running on Jeff’s laptop against 4 servers under his desk back in the office. He opens the Overview (Task Manager info). Computer name and domain join are there. Environment variables, RDP are here. Restart/shutdown are here.

Roles and Features is next. No more need for Server Manager (yay!). Roles & features easily installed remotely. Events shows all the event viewer info. Note that filtering UI is much better here than in the MMC. Files allows you to browse and edit the file system on a managed server. Virtual machines allows Hyper-V VM management.

The system is agentless. Honolulu is a 30 MB MSI download to a management node which you browse to. It even works on Safari on Mac.

Honolulu will be a free download when it goes GA.

Back to Erin

What’s Next For Project Honolulu

A peek into the pipeline … things they are exploring and experimenting with.

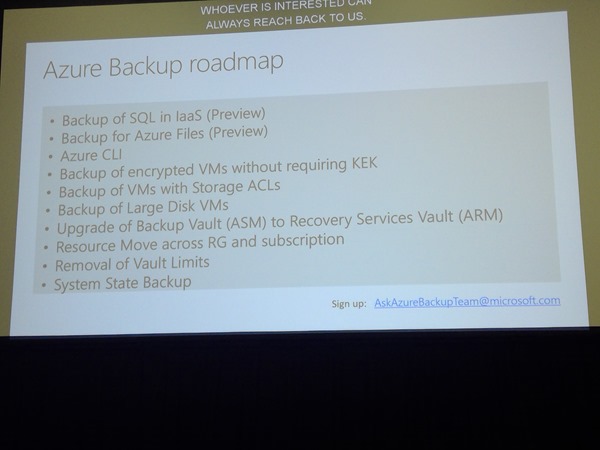

Azure Backup in Honolulu – a wizard to set up the Azure bits and start backing up items/system state. They show some mockups of it all being driven from Honolulu instead of the Azure Portal.

The Azure Connection

Chris comes on stage to talk about Hybrid scenarios.

He starts off by talking about Software Assurance. Highlighted features:

- Required for Semi-Annual releases

- Hybrid Use Benefit to move to Azure – up to 40% savings on the cost of Windows Server Azure VMs

Premium Assurance add-on adds 6 years of support to the normal 5+5 model (16 years total) for applications that cannot stay up to date, but can continue to get security updates.

If you watch this session, please note that Chris over-simplifies (a lot) the Hybrid Use benefit. It’s actually quite complex, regarding moving & co-using licenses and core counts.

End of Support

W2008/R2 end of support is Jan 2020 – 1/3 of servers fall into this space. SQL 2008/R2 end of support is July 2019. For larger companies, they should look at cloud and/or containerization, or even re-development in serverless cloud.

Questions

- Honolulu can manage all the way back to WS2012

- Not every app can/should be containerized – key thing is that you need remote management because containers don’t have a GUI.

- Where is Honolulu installed? It can be on a PC, on the managed server, or on a central dedicated management server. Honolulu uses WMI and PowerShell to talk to the managed servers.