In this post, I’m going to discuss how DevSecOps can solve an age-old problem that still hurts us in The Cloud: the ongoing friction between devs and ops, and how the adoption of the cloud is making this worse.

Us Versus Them

Let me say this first: when I worked as a sys admin, I was a “b*st*rd operator from hell”. I locked things down as tight as I could for security and to control supportability. And as you can imagine, I had lots of fans in the development teams – not!

Ops and devs have traditionally disliked each other. Ops build the servers perfectly. Devs write awesome code. But when something goes wrong:

- Their servers are too slow

- Their architecture/code is rubbish

Along Came a Cloud

The cloud was meant to change things. And in some ways, it did. In the early days, when AWS was “the cloud”, devs got a credit card from somewhere and started building. The rush of freedom and bottomless resources oxygenated their creativity and they built and deployed like they were locked in a Lego shop for the weekend.

Eventually, the sober-minded Ops, Security, and Compliance folks observed what was happening and decided to pull the reins back. A “landing zone” was built in The Cloud (now Azure and others are in play) and governance was put in place.

What was delivered in that landing zone? A representation of the on-premises data center that the devs were trying to escape from. Now they are told to work in this locked-down environment and the devs are suddenly slowed down and restricted. Change control, support tickets, and a default answer from Ops of “no” mean that agility and innovation die.

But here’s the thing – the technology was a restricting factor when working on-premises: physical hardware and 100% IaaS mean that Ops need to deliver every part of the platform. In the cloud, technology wasn’t the cause of the issue. The Cloud started with self-service, all-you-can-eat capacity, and agility. And then traditional lockdowns were put in place.

Business Dissatisfaction

A good salesperson might have said that there can be cost optimisations but cost savings should not be a primary motivation to go with the cloud. Real rewards come from agility, which leads to innovation. The ability to build fast, see if it works, develop it if it does, dump it if it doesn’t, and not commit huge budgets to failed efforts is huge to a business. When Ops locks down The Cloud, some of the best features of The Cloud are lost. And then the business is unhappy – there were costly migration projects, actual IT spend might have increased, and they didn’t get what they wanted – IT failed again.

By the way, this is something we (me and my colleagues at work) have started to see as a trend with mid-large organisations that have made the move to Azure. The technology isn’t failing them – people and processes are.

People & Processes

Technology has a role to play but we can probably guesstimate that it’s about 20% of the solution. People and processes must evolve to use The Cloud effectively. But those things are overlooked.

Microsoft’s Cloud Adoption Framework (CAF) recognises this – the first half of the CAF is all about the soft side of things.

The CAF starts out by analysing what the business wants from The Cloud. You cannot shape anything IT-wise without instruction from above. What does the business want? Do you know who you should not ask? The IT Manager – they want what IT wants. To complete the strategy definition, you need to get to the owners/C-level folks in the business – getting time with them is hard! Once you have a vision from the business you can start looking at how to organise the people and set up the processes.

Organisational Failure

Think about the structure of IT. There is an Ops team/department with a lead. That group of people has pillars of expertise in a mid-large organisation:

- The Windows team

- Linux

- Networking

- SAN

- And so on

Even those people don’t work well in collaboration. There is also a Dev department that is made up of many teams (workloads) that may even have their own pillars of expertise – some/many of those are externals. There is no alignment or collaboration between all the parties involved in building, running, and continuously improving a workload.

DevOps

DevOps is a methodology that brings Ops and Devs together in actual or virtual teams for each workload. For example, let’s say that a workload requires the following skills from many teams/departments:

- .NET developers

- Application architect

- Infrastructure architect

- Azure operators

That might be skills from 4 teams. But in DevOps, the workload defines a virtual or actual team of people that will work on that application and its underlying infrastructure together. The application and infrastructure architects will design together. The devs and ops will work together to produce the code that will create the underlying platform (PaaS and/or IaaS), which will be continuously developed/improved/deployed using GitHub Actions or Azure DevOps pipelines.

Agile methodologies will be brought in to plan the work:

- Work through epics, user stories, features and tasks (backlog)

- That are scheduled to sprints (kanban board)

- And are assigned to/pulled by members of the DevOps team (resource planning)

What has been accomplished? Now a team works together. They have a single vision through a united team. They share a plan and communicate through daily standup meetings and modern tooling such as Teams. By working as one, they can produce code fast. And that means they can fail fast:

- Produce a minimum viable product

- Test if it works

- If it does, improve on it in sprints

- If it doesn’t, tear it down quickly with minimal money lost

DevSecOps

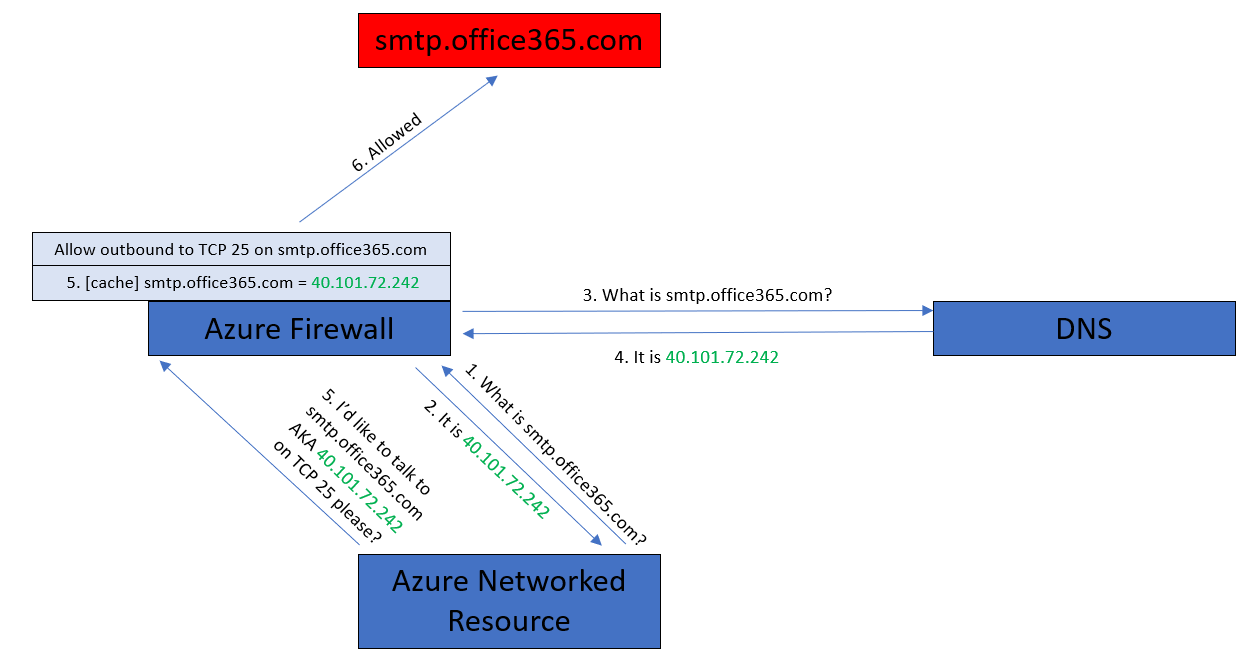

In The Cloud, modern workloads are presented to clients over the Internet using TLS. The edge means that there is a security role. And in a good design, micro-segmentation is required, which means an expanded security role. And considering the nature of threats today, the security role should have some developer skills to analyse code and runtimes for security vulnerabilities.

If we don’t change how the security role is done then it can undo everything that DevOps accomplishes – all of a sudden a default “no” appears, halting all the progress towards agility and innovation.

DevSecOps adds the security role to DevOps. Now security personnel are a part of the workload’s team. They will be a part of the design process. They will be the ones that implement firewall rules in code and/or review them in the pull request. Elements of security are moved from a central location out to the repos for the workloads – the result is that the what and who don’t change; all that changes is the where.
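To make the pull request idea a little more concrete, here is a minimal sketch in Python of the kind of automated check that could run against proposed firewall rules before a human reviews them. Everything in it is an assumption for illustration: the `FirewallRule` shape, the `PUBLIC_PORTS` policy, and the `find_risky_rules` helper are hypothetical and are not an Azure API or a real rule format.

```python
from dataclasses import dataclass
from ipaddress import ip_network


@dataclass(frozen=True)
class FirewallRule:
    """Hypothetical, simplified rule shape; real firewall rules have more fields."""
    name: str
    source: str   # CIDR range, e.g. "10.0.1.0/24"
    port: int
    action: str   # "allow" or "deny"


# Assumed policy for this sketch: only these ports may be open to any source.
PUBLIC_PORTS = {443}


def find_risky_rules(rules):
    """Return a finding for each allow rule that opens a non-public port to any address."""
    findings = []
    for rule in rules:
        # A prefix length of 0 (e.g. 0.0.0.0/0) means "any source address".
        open_to_all = ip_network(rule.source).prefixlen == 0
        if rule.action == "allow" and open_to_all and rule.port not in PUBLIC_PORTS:
            findings.append(f"{rule.name}: port {rule.port} is open to {rule.source}")
    return findings


# Example rules as they might appear in a pull request (made-up values).
proposed = [
    FirewallRule("web-in", "0.0.0.0/0", 443, "allow"),
    FirewallRule("ssh-in", "0.0.0.0/0", 22, "allow"),
    FirewallRule("sql-in", "10.0.1.0/24", 1433, "allow"),
]
findings = find_risky_rules(proposed)
```

With these made-up rules, only `ssh-in` would be flagged. The point is not the check itself but where it lives: in the workload's repo, run by the pipeline, with the security person on the team owning the policy.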

Influence

Introducing the sort of changes that DevSecOps will require is not going to be easy or quick. We can do the tech pieces in Azure pretty easily, actually, but the people might resist and the processes won’t exist in the organisation. Introducing change will be hard and it will be resisted. That’s why the process must be led from the C-level.

Got Something To Add?

What do you think? Please comment below.