This post shows how to use an Azure Linux virtual machine to implement SNAT on an ExpressRoute circuit to a remote location.

Scenario

You must have a low-latency connection to a remote location. That remote location is a partner. That partner uses IP ranges. That partner has many organisations, such as yours, that connect in. All of those organisations could have address overlap, which prevents the use of site-to-site networking without using SNAT. Your solution will make outbound connections to the partner’s services over the ExpressRoute circuit. The partner will use a firewall to restrict traffic. You must also use a firewall to protect your network – you will use Azure Firewall.

The scenario requires:

- You use a partner-assigned address space

- All traffic leaving your site and going to the partner network must use a source IP address from the partner-assigned address space (SNAT)

Normally, you would accomplish this using your firewall appliance. However, Azure Firewall does not offer SNAT for private IP connections.

You might think “I’ve read that Virtual Network Gateway can do NAT rules”. Yes, the VPN Gateway can do NAT rules but the ExpressRoute Gateway does not have that feature.

The solution in this post will use a Linux virtual machine to implement SNAT.

The Architecture

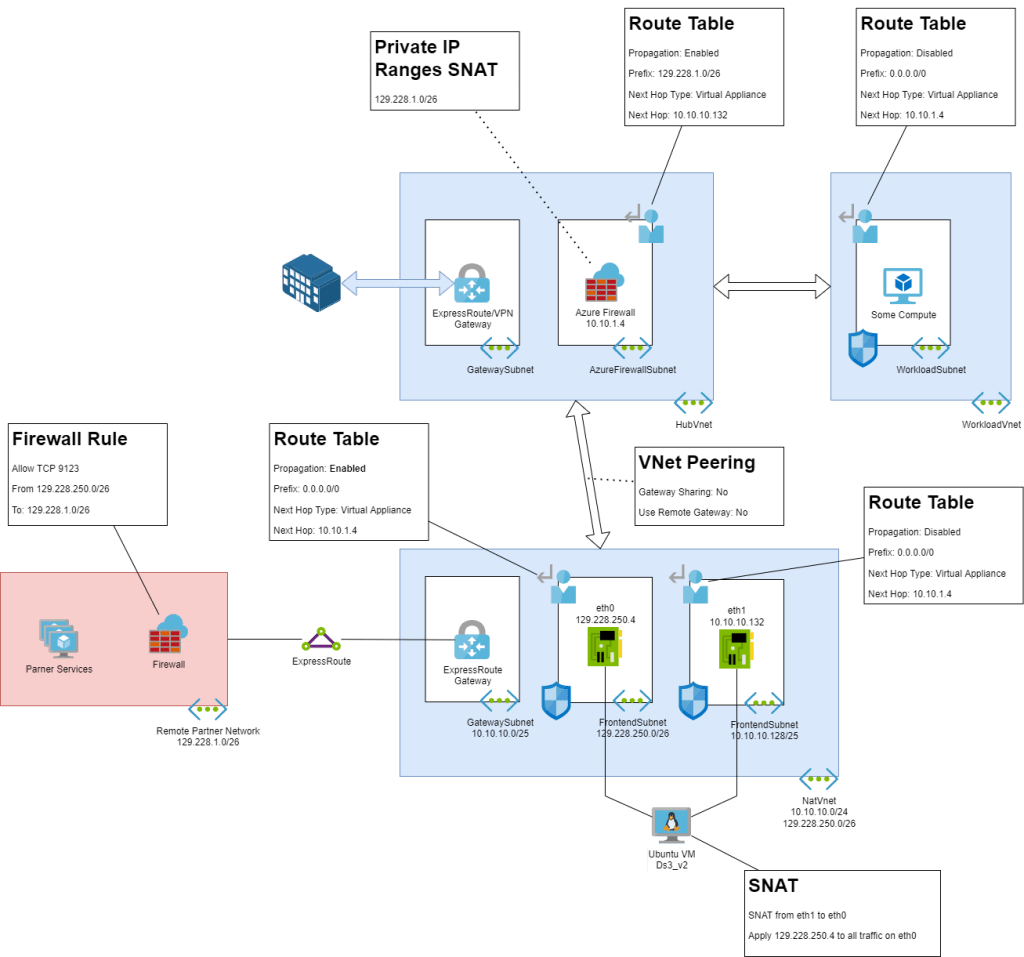

Here is an image of the architecture:

A feature of this design is that the workload that will use the partner service is separated from the NAT appliance and the ExpressRoute circuit. This is because:

- It allows flexibility with the workload to change location, design, platform, etc.

- The partner connection is isolated and must route through a firewall appliance in the hub, ideally with advanced security features enabled.

The Workload

Let’s start with the description of the workload. The workload, some kind of compute capable of egress traffic on a VNet, is deployed in a spoke virtual network. The virtual network is a part of a hub-and-spoke architecture – it is peered to a hub. The workload has a route table that forces all egress traffic (0.0.0.0/0) to use the Azure Firewall in the hub as the next hop.

The Hub

The hub features an AzureFirewallSubnet with the Azure Firewall. There is a route table assigned to the subnet. Route propagation is enabled – to allow routes to propagate from site-to-site networking that is used by the organisation. The purpose of this route table is to add specific routes, such as in this scenario, where we want to force traffic to the partner address space (129.228.1.0/26) to travel via the backend interface of the NAT appliance.
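The two user-defined routes described so far (the spoke's default route and the hub's partner route) could be created with the Azure CLI along the following lines. This is a minimal sketch, not the exact commands used for this post: the resource group, the route table and route names, and both next-hop addresses (10.0.1.4 for the firewall, 10.10.2.4 for the NAT backend NIC) are placeholders. The helper prints each command by default; set DRY_RUN=0 to run it for real.

```shell
#!/bin/sh
# Sketch only: every resource name and next-hop IP below is a placeholder.

# Print the command unless DRY_RUN=0 is set, in which case run it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# add_nva_route <resource-group> <route-table> <route-name> <prefix> <next-hop-ip>
add_nva_route() {
  run az network route-table route create \
    --resource-group "$1" \
    --route-table-name "$2" \
    --name "$3" \
    --address-prefix "$4" \
    --next-hop-type VirtualAppliance \
    --next-hop-ip-address "$5"
}

# Spoke: send all egress to the hub firewall (placeholder IP 10.0.1.4).
add_nva_route rg-network rt-workload default-via-firewall 0.0.0.0/0 10.0.1.4

# Hub (AzureFirewallSubnet): send partner traffic to the NAT backend NIC
# (placeholder IP 10.10.2.4).
add_nva_route rg-network rt-azurefirewall partner-via-nat 129.228.1.0/26 10.10.2.4
```

Reviewing the printed commands before running them is a cheap way to catch a wrong prefix or next hop.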

The partner address space (129.228.1.0/26) should be added as an additional private IP address (SNAT) range on the Azure Firewall – traffic to this prefix should not be forced out to the Internet.

Ideally, this firewall is the Premium SKU and has IDPS enabled.

The NAT Solution

The NAT solution is deployed in a “NAT virtual network”, dedicated to the partner ExpressRoute circuit. The hub is peered with the NAT virtual network – “gateway sharing” and “use remote gateway” are disabled – this is to prevent route propagation and to prevent incompatibilities between the hub and the NAT virtual network because they both have Virtual Network Gateways.
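A peering of this kind could be sketched with the Azure CLI as below. The resource group, virtual network names, and peering names are placeholders, and enabling forwarded traffic is an assumption on my part (the packets arriving from the firewall carry the workload's source address, not a hub address). The two gateway options are simply left out, which should leave them disabled. Commands are printed by default; set DRY_RUN=0 to run them.

```shell
#!/bin/sh
# Sketch only: resource group, VNet names and peering names are placeholders.

# Print the command unless DRY_RUN=0 is set, in which case run it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# create_peering <resource-group> <local-vnet> <peering-name> <remote-vnet>
# No gateway transit and no remote gateway options are passed.
create_peering() {
  run az network vnet peering create \
    --resource-group "$1" \
    --vnet-name "$2" \
    --name "$3" \
    --remote-vnet "$4" \
    --allow-vnet-access \
    --allow-forwarded-traffic
}

# A peering has to be created in both directions.
create_peering rg-network vnet-hub hub-to-nat vnet-nat
create_peering rg-network vnet-nat nat-to-hub vnet-hub
```

If the two virtual networks live in different resource groups, the remote virtual network would need to be given as a full resource ID instead of a name.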

The NAT virtual machine (I used Ubuntu) is deployed as a DS3_v2 – a series commonly used for NVAs because it offers good network throughput for the price (there is no hyper-threading). The VM has two network interfaces:

- eth1: This is the backend NIC. This NIC is the next hop that is used by the AzureFirewallSubnet route table in the hub for traffic going to the partner subnet. In other words, traffic from the organisation workload will route through the firewall, and then through this interface to get to the partner. This subnet uses an internal address range. A route table forces all traffic to 0.0.0.0/0 to use the hub firewall as the next hop. Route propagation is disabled – we do not want this NIC to learn routes to the partner. An NSG on this subnet denies all inbound traffic – we want to reject packets from the partner network and all connections will be outbound.

- eth0: This is the interface that will communicate with the partner over ExpressRoute. This subnet uses an address range that is assigned by the partner. All traffic going to the partner from the organisation will use the IP address of this NIC. A route table forces all traffic to 0.0.0.0/0 to use the hub firewall as the next hop. Route propagation is enabled – this NIC must learn routes to the partner from the ExpressRoute Gateway (a useful place to verify BGP routing via Effective Routes). An NSG on this subnet will only accept connections from the IP address of the workload compute (resource or subnet depending on the nature of networking) with the required protocol/port numbers.
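The Effective Routes check mentioned for the partner-facing NIC can also be scripted. The sketch below assumes the Azure CLI is installed and signed in; the function name, resource group, and NIC name are hypothetical. It succeeds only if the partner prefix appears in the NIC's effective route table.

```shell
#!/bin/sh
# Sketch only: the function name and the resource names in the usage
# comment are placeholders, not values from this post.

# has_effective_route <resource-group> <nic-name> <prefix>
# Returns 0 if <prefix> is listed in the NIC's effective routes.
has_effective_route() {
  az network nic show-effective-route-table \
    --resource-group "$1" \
    --name "$2" \
    --output table \
    | grep -F -q -- "$3"
}

# Example (requires a running VM and a signed-in Azure CLI):
#   has_effective_route rg-network nic-nat-frontend 129.228.1.0/26 \
#     && echo "partner route learned" \
#     || echo "partner route missing"
```

Running the same check against the backend NIC should fail, because route propagation is disabled on that subnet.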

An ExpressRoute Gateway is deployed in the NAT virtual network. The ExpressRoute Gateway is connected to a circuit that connects the organisation to the partner.

The partner has a firewall that only permits traffic from the organisation if it uses a source IP address from the address range that they assigned to the organisation.

Configuring Linux

I am allergic to Penguins so this took some googling 🙂 Here are the things to note:

- 129.228.1.0/26 is the partner network.

- 129.228.250.4 is the address of eth0, the frontend or SNAT NIC on the Linux VM.

You will log into the VM (Azure Bastion is your friend) and elevate to root:

sudo -i

You will need to install some packages:

apt-get update

apt-get -y install net-tools

apt-get -y install iptables-persistent

apt-get -y install nc

Verify that eth0 is the (default) frontend NIC and that eth1 is the backend NIC:

ifconfig

Enable forwarding in the kernel:

echo 1 > /proc/sys/net/ipv4/ip_forward

Configure the change persistently by editing the sysctl.conf file using the vi editor:

vi /etc/sysctl.conf

Find the line below and remove the comment so that it becomes active:

net.ipv4.ip_forward = 1

Now for some vi fun: type the following to save the changes and quit:

:wq

Apply the change:

sysctl -p

Verify the above change:

sysctl net.ipv4.ip_forward

Next, you will configure routing from eth1 to eth0:

iptables -A FORWARD -i eth1 -o eth0 -j ACCEPT

iptables -A FORWARD -i eth0 -o eth1 -m state --state RELATED,ESTABLISHED -j ACCEPT

And then you will enable iptables masquerading:

iptables -t nat -A POSTROUTING -o eth1 -j MASQUERADE

At this point, routing from eth1 to eth0 is enabled but the source address is not being changed. The following line will change the source address of traffic leaving eth0 to use the partner-assigned address:

iptables -t nat -A POSTROUTING -o eth0 -j SNAT --to 129.228.250.4

You can now test the connection from your workload to the partner. If everything is correct, a connection is possible … but your work is not done. The iptables configuration is not persistent! You will fix that with these commands:

sudo apt install iptables-persistent

sudo iptables-save > /etc/iptables/rules.v4

sudo ip6tables-save > /etc/iptables/rules.v6

Now you should reboot your virtual machine and verify that your iptables configuration is still there:

iptables -t nat -v -L POSTROUTING -n --line-numbers

A good tip now is to make sure that you have enabled Azure Backup and that your VM is being backed up. Also follow other good practices, such as managing patching for Linux and implementing Defender for Cloud for the subscription.
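If you expect to repeat the Linux configuration (a rebuild, or a second NAT VM), the iptables steps can be wrapped so that they are safe to re-run. The sketch below is one possible approach, not the method used above: it uses the iptables check option (-C) to test for a rule before appending it, and it writes the SNAT target with its full option name, --to-source. The helper names (ensure_rule, apply_snat_rules, ip_forward_enabled) are made up for illustration; the interface names and the SNAT address are the ones from this post.

```shell
#!/bin/sh
# Sketch only: helper names are hypothetical. Nothing runs until you call
# apply_snat_rules yourself, as root.

FRONTEND_IF="eth0"        # partner-facing NIC
BACKEND_IF="eth1"         # hub-facing NIC
SNAT_IP="129.228.250.4"   # address of eth0, assigned by the partner

# ensure_rule <table> <chain> <rule-spec...>
# Append the rule only if an identical rule is not already in the chain.
ensure_rule() {
  table="$1"
  chain="$2"
  shift 2
  if ! iptables -t "$table" -C "$chain" "$@" 2>/dev/null; then
    iptables -t "$table" -A "$chain" "$@"
  fi
}

# The same four rules as in the post, applied idempotently.
apply_snat_rules() {
  ensure_rule filter FORWARD -i "$BACKEND_IF" -o "$FRONTEND_IF" -j ACCEPT
  ensure_rule filter FORWARD -i "$FRONTEND_IF" -o "$BACKEND_IF" \
    -m state --state RELATED,ESTABLISHED -j ACCEPT
  ensure_rule nat POSTROUTING -o "$BACKEND_IF" -j MASQUERADE
  ensure_rule nat POSTROUTING -o "$FRONTEND_IF" -j SNAT --to-source "$SNAT_IP"
}

# ip_forward_enabled [file]
# Returns 0 if kernel forwarding is on (the file defaults to the /proc entry).
ip_forward_enabled() {
  [ "$(cat "${1:-/proc/sys/net/ipv4/ip_forward}")" = "1" ]
}

# Example, as root:
#   ip_forward_enabled || echo "forwarding is off"
#   apply_snat_rules && iptables-save > /etc/iptables/rules.v4
```

Because each rule is checked first, running the script twice does not leave duplicate entries in the FORWARD or POSTROUTING chains.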

Wrapping Up

There you have it; you have created a “DMZ” that enables an ExpressRoute connection to a remote partner network. You have protected yourself against the partner. You have complied with the partner’s requirements to use an IP address that they have assigned to you. You still have the ability to use site-to-site networking for yourself and for other partners without introducing potential incompatibilities. And you have not handed over fists full of money to an NVA vendor.