This post explains why you should not put any compute into your hub VNet.

Background

I was looking at some Azure Landing Zones (reference architectures) from Microsoft before the end of 2023. I was shocked to see compute (VMs) being placed in the hub. Years ago, I learned that putting any kind of compute in the hub eventually leads to issues that are not obvious at first. I would have expected Microsoft to know better.

I posted something on Twitter and LinkedIn. Sure, there were plenty of people who agreed with me. However, there were respondents from Microsoft and elsewhere who didn’t see the problem. I explained it, as best as one could in a limited chat, but either people didn’t see the responses, were lazy, or something else 🙂

I decided to write this post to explain the problems with placing things in a hub.

Problem Summary

There are two issues with placing things in a hub:

- Routing complexity: When one expands to more than one hub & spoke (regional footprints), the network requirements for a micro-segmented security model will become complex. Complexity breaks security eventually. Keep it simple, stupid!

- “Shared services syndrome”: Once you place any kind of shared service in the hub, someone will start asking about putting web servers, databases, and file shares in the hub. Then why do you have spokes? And then we make problem 1 even worse.

Routing Simplicity

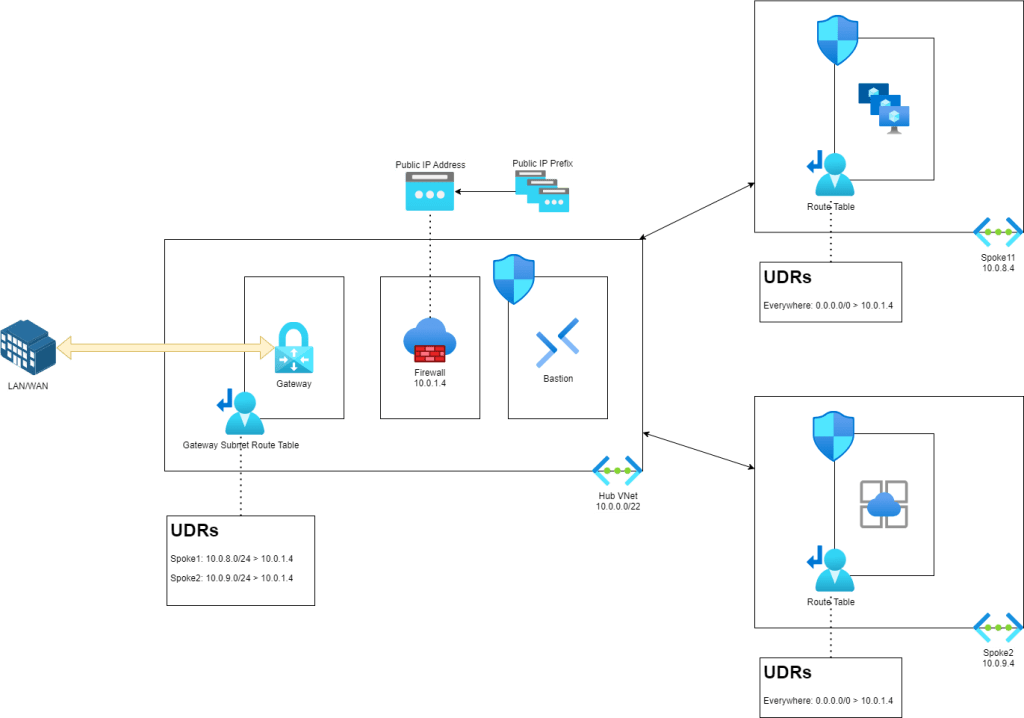

I want to start with the ideal – simplicity. My hub and spoke design is far from unique. It’s actually quite simple – making it easy to understand, troubleshoot and secure.

The hub contains only the minimum required networking items with no compute. The above hub contains:

- A GatewaySubnet with Azure VPN and/or ExpressRoute gateway(s)

- An AzureFirewallSubnet for the Azure Firewall

- An AzureBastionSubnet for Azure Bastion, which must go in the hub (for routing reasons) in a VNet hub and spoke scenario where the Bastion will be shared.

There is flexibility:

- NVA router for SD-WAN

- Azure Route Server

- Azure Firewall management subnet (for tunneling today)

- Swap out Azure Firewall for an NVA (yuk!)

The beauty is the simplicity. The routing model controls the micro-segmentation security. Nothing is trusted.

- Inbound from on-premises: The UDRs in the GatewaySubnet force traffic through the Azure Firewall to reach the spokes. Have a look at this BGP-powered alternative using Azure Route Server by Jose Moreno.

- Egress and East-West: Any traffic leaving a spoke must route through the firewall in the hub – including spoke-to-spoke, spoke-to-Internet (Azure), and spoke-to-LAN/WAN. Routes to Internet and on-prem are present/propagated to the AzureFirewallSubnet and any traffic to those destinations is handled by that subnet.

Two routes control everything for any given spoke. Note that traffic inside of a spoke is subject to the default Virtual Network route (direct from A to B via VXLAN).

What happens if I need to scale out to more Azure regions? I’ll drop in another hub & spoke and peer the hubs. My micro-segmentation model states that nothing trusts anything else, so footprint1 does not trust footprint2. To accomplish this we will peer the hub VNets to force traffic to route via the firewalls.

I’ve dropped in another hub & spoke with a different IP range. Footprint1 was 10.0.0.0/16. The new footprint, Footprint2, is 10.10.0.0/16. Connecting the footprints is easy – you peer the hubs. The two hub VNets can route to each other. There’s no compute or data in the hubs so I don’t need to do any isolation. But I do need spokes in the two footprints to be able to route to each other.

We can enable end-to-end connectivity with one route per hub. A route table is added to the AzureFirewallSubnet. A UDR for the neighbouring footprint is added, with the next hop being the firewall in the neighbour.

For example, in Footprint1, I want to be able to reach the spokes in Footprint2. Footprint2 is 10.10.0.0/16. In the Footprint1 AzureFirewallSubnet, I will add a UDR to 10.10.0.0/16 with the next hop of the Footprint2 firewall, 10.10.1.4. Now, subject to the firewall and NSG rules, Spoke1 in Footprint1 can route to Spoke3 and Spoke4 in Footprint2 and vice versa. Simple!
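To make the path concrete, here is a rough Python sketch that models the route tables as plain dictionaries and follows the next hops from Spoke1 to a VM in Spoke3. It is a toy model of longest-prefix routing, not an Azure API. The Footprint1 firewall IP (10.0.1.4), the destination VM (10.10.16.4), and the `direct` marker for system routes are assumptions for illustration; only 10.10.0.0/16 and 10.10.1.4 come from the example above.

```python
import ipaddress

FW1 = "10.0.1.4"    # assumption: Footprint1 firewall IP (the post only gives Footprint2's)
FW2 = "10.10.1.4"   # Footprint2 firewall IP, from the example above
DIRECT = "direct"   # stands in for a system route (VNet-local or peering)

# One simplified route table per hop. Real effective routes contain more entries.
ROUTES = {
    "spoke1": {"0.0.0.0/0": FW1},                       # spoke UDR: everything via the local firewall
    FW1: {"10.0.0.0/16": DIRECT, "10.10.0.0/16": FW2},  # UDR to the neighbouring footprint
    FW2: {"10.10.0.0/16": DIRECT, "10.0.0.0/16": FW1},  # mirror-image UDR in Footprint2
}


def next_hop(table, dest):
    """Pick the next hop of the longest matching prefix."""
    dest_ip = ipaddress.ip_address(dest)
    matches = [
        (ipaddress.ip_network(prefix), hop)
        for prefix, hop in table.items()
        if dest_ip in ipaddress.ip_network(prefix)
    ]
    if not matches:
        raise LookupError(f"no route to {dest}")
    return max(matches, key=lambda m: m[0].prefixlen)[1]


def trace(source, dest, max_hops=8):
    """Follow next hops from a named source until the packet is delivered."""
    path, current = [source], source
    for _ in range(max_hops):
        hop = next_hop(ROUTES[current], dest)
        if hop == DIRECT:
            return path + [dest]
        path.append(hop)
        current = hop
    raise RuntimeError("routing loop")


# Hypothetical VM in Spoke3 (Footprint2)
print(trace("spoke1", "10.10.16.4"))
```

The trace passes through both firewalls before it reaches the destination, which is the point of the design: one extra UDR per hub, and every cross-footprint flow is inspected twice.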

Simplicity is the key to security. Nothing breaks this model as long as I keep the hub empty of compute.

Everything in IT is “shared”. That’s why a “server” serves – it shares something, not only to users but to other servers in the same workload and to other workloads. Where do I place that “server”? All “servers” go into a spoke.

In micro-segmentation, there is no difference between the VNets. They’re all isolated. There are no DMZs. There are no secure zones. All VNets are isolated from all other VNets and there is no trust – we assume breach at all times. Welcome to modern network security following the guidance from various national agencies to combat APTs.

By the way, in this case, if I need a DMZ DNS server (not that it makes sense to have one anymore – that’s another post) – it goes into a spoke 🙂

Putting Stuff In The Hub

Now we will start copying what some of those Microsoft ALZs do: we will put some compute into the hubs.

If you inspect the hubs you will find a new subnet of x.x.3.0/24 with some VMs in there – some DNS servers 🙂 Good security practice will mandate that I force traffic from 10.0.3.0/24 to route via the two firewalls. That’s easier said than done.

By default, traffic from the subnets in peered VNets will route directly from the source to the destination. Peering expands the VXLAN connections from a single VNet to peered VNets. There is no automated interpretation of intent. We will have to add a route to the compute subnets to state that the next hop to the remote compute subnet is via the local firewall. Then we need a route in the AzureFirewallSubnet to state that the next hop to the remote compute subnet is the remote firewall.

Oh – one more thing – and the diagram does not show this. Each network resource in the spokes now talks to the compute subnet in the local hub directly without going through the firewall – and vice versa. If that central compute is compromised, then the firewall will play no role in isolating the spokes from it or in detecting the spread of the APT. We will need to add routes:

- Compute subnet: a route for each spoke, similar to the GatewaySubnet

- Spoke subnets: to force traffic to x.x.3.0/24 via the Azure Firewall to avoid asymmetric routing.
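Why does a spoke bypass the firewall when it already has a default route pointing at it? Azure picks the route with the longest matching prefix, and a UDR only beats a system route when the prefixes are identical. The peering route for the hub address space is more specific than 0.0.0.0/0, so it wins. The following Python sketch mimics that selection rule. The hub address space (10.0.0.0/22) and the firewall IP (10.0.1.4) are assumptions for illustration, and the function is a toy model, not an Azure SDK call.

```python
import ipaddress

FIREWALL = "10.0.1.4"    # assumption: hub firewall private IP
PEERING = "VNetPeering"  # system route: traffic goes straight to the peered VNet


def effective_next_hop(routes, dest):
    """Longest prefix wins; on a tie, a UDR beats a system route."""
    dest_ip = ipaddress.ip_address(dest)
    candidates = [
        (ipaddress.ip_network(prefix).prefixlen, is_udr, hop)
        for prefix, hop, is_udr in routes
        if dest_ip in ipaddress.ip_network(prefix)
    ]
    return max(candidates)[2]


# Spoke subnet as designed for an empty hub: (prefix, next hop, is_udr)
spoke_routes = [
    ("10.0.0.0/22", PEERING, False),  # assumed hub VNet address space, learned via peering
    ("0.0.0.0/0", FIREWALL, True),    # UDR: everything else via the firewall
]

# A DNS server in the hub compute subnet (10.0.3.0/24) is reached directly
print(effective_next_hop(spoke_routes, "10.0.3.4"))

# The fix: an extra, more specific UDR in every spoke route table
fixed_routes = spoke_routes + [("10.0.3.0/24", FIREWALL, True)]
print(effective_next_hop(fixed_routes, "10.0.3.4"))
```

The first lookup returns the peering route and the second returns the firewall. That extra UDR has to exist in every spoke route table, and the compute subnet needs the matching routes back, otherwise the return path skips the firewall.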

Oh – and just one more thing – which is also not in the diagram. Each GatewaySubnet will require a route to the local x.x.3.0/24 to use the Azure Firewall as the next hop. Otherwise, on-premises (where attacks will likely come from) will have free access to the Compute subnet. You’ll have to make sure that routes from the GatewaySubnet propagate to the Compute subnet to avoid asymmetric routing.

Now let’s scale that out to 3 or 4 footprints. How complex are things getting now? Is there room for mistakes?
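A rough way to answer that is to count the UDRs. The Python sketch below tallies only the routes described in this post, assuming one compute subnet per hub, one route table per spoke, and the same number of spokes in every footprint. Those assumptions and the function names are mine; a real deployment will differ, so treat the numbers as an illustration of growth rather than a sizing tool.

```python
def baseline_udrs(footprints):
    """Empty hubs: each AzureFirewallSubnet needs one UDR per neighbouring footprint."""
    return footprints * (footprints - 1)


def extra_udrs_with_hub_compute(footprints, spokes_per_footprint):
    """Additional UDRs once every hub holds one compute subnet."""
    neighbours = footprints - 1
    per_footprint = (
        neighbours              # compute subnet -> each remote compute subnet via local firewall
        + neighbours            # AzureFirewallSubnet -> each remote compute subnet via remote firewall
        + spokes_per_footprint  # compute subnet -> each local spoke via the firewall
        + spokes_per_footprint  # each spoke -> local compute subnet via the firewall
        + 1                     # GatewaySubnet -> local compute subnet via the firewall
    )
    return footprints * per_footprint


for n in (2, 3, 4):
    print(n, baseline_udrs(n), extra_udrs_with_hub_compute(n, spokes_per_footprint=2))
```

With two spokes per footprint, the empty-hub design needs 2 inter-footprint UDRs for two footprints and 12 for four. Putting a single compute subnet in each hub adds 14 and 44 routes respectively, and every one of them is a chance to leave a path open.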

Shared Services Syndrome

I saw this happen years ago. Many moons ago, I followed a reference architecture from Microsoft to create the reference network design for my employer. That reference included compute in the hub. It was a very special compute: domain controllers. I could see the logic: these are special machines that every Windows VM will talk to – they go into the hub.

Not long after, we had customers stating that they wanted databases and file servers to go into the hub. They simply followed our logic: domain controllers are shared services and so are the file server and the database. How do you argue against that?

In v2.0 of my design, which quickly followed v1.0, all compute was stripped out of the hub. The argument to put shared services into the hub was gone.

I can imagine the consultants saying “I won’t allow more compute in the hub”. OK, but what happens when you are gone or a less argumentative colleague who is willing to do stuff for the customer takes your place? Have you done your customer a disservice by setting a bad precedent?

Let’s add another subnet into the hub. Let’s add more. Let’s expand the address space of the hub – a colleague showed me a hub design (by a competitor) where the hub address space was expanded 5 times! Imagine how much compute is in that hub. How many routes must you inject to make that network secure? Is that network even secure at all? It would take quite an audit to discover what is going on there.

Keep It Simple, Stupid (KISS)

I am a fan of simplified engineering. When it is simple and easy to understand, then it is easy to maintain and to secure. Too often, engineers are too clever. They want to make exceptions and show off how clever they are. KISS is the best approach to engineering – and to security.