After presenting on the topic of Hyper-V to over 250 people (including some VMM) over the last 3 weeks, I’ve become aware that the term “Snapshot” confuses people. The confusion comes from several different but similarly named solutions/features:

Hyper-V Snapshot

This is the ability (just like in VMware) to capture a virtual machine’s state (memory, CPU, system state, and disk contents) at a point in time. You can do some work and then revert to that snapshot, returning the VM to where it was back then and undoing all the changes made since the snapshot. You can have lots of snapshots, tiered and branched.
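To make “tiered and branched” a bit more concrete, here is a minimal sketch of a snapshot tree. It is a toy model only: `ToyVM`, `Snapshot`, `take_snapshot` and `revert` are hypothetical names made up for illustration, not the Hyper-V API, and a plain dictionary stands in for the VM’s memory, CPU and disk state.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass(eq=False)
class Snapshot:
    """One point-in-time capture of a (toy) VM's state."""
    name: str
    state: Dict[str, str]                 # stands in for memory, CPU and disk contents
    parent: Optional["Snapshot"] = None
    children: List["Snapshot"] = field(default_factory=list)


class ToyVM:
    """Hypothetical model of a VM with a snapshot tree; not the Hyper-V API."""

    def __init__(self) -> None:
        self.state: Dict[str, str] = {}
        self.current: Optional[Snapshot] = None   # snapshot the running state descends from

    def take_snapshot(self, name: str) -> Snapshot:
        # Copy the state so later changes to the VM do not alter the snapshot.
        snap = Snapshot(name, dict(self.state), parent=self.current)
        if self.current is not None:
            self.current.children.append(snap)
        self.current = snap
        return snap

    def revert(self, snap: Snapshot) -> None:
        # Discard everything done since `snap` and continue from it.
        self.state = dict(snap.state)
        self.current = snap


vm = ToyVM()
vm.state["app"] = "v1"
base = vm.take_snapshot("base")

vm.state["app"] = "v2"
after_upgrade = vm.take_snapshot("after-upgrade")   # tiered: child of "base"

vm.revert(base)                                     # undo the upgrade
vm.state["app"] = "v1-patched"
patched = vm.take_snapshot("patched")               # branched: second child of "base"
```

After this runs, “base” has two children, which is the branching you see as a tree in Hyper-V Manager. Reverting throws away whatever the VM did after the chosen snapshot, and that is exactly what gets people into trouble in production.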

Hyper-V snapshots are supported in production. But they are not supported by many of the applications you’d install in a VM, e.g. SQL Server, Exchange, etc. I’m not a fan of snapshots in production; in fact, I hate them because of the problems that people create for themselves (long story where people assume all sorts of silly things that are convenient for them at the time). But I do use Hyper-V snapshots in lab environments to reset tests or demos.

Checkpoint

This is what System Center Virtual Machine Manager (SCVMM) calls a snapshot. Yup, it’s confusing.

EDIT: Microsoft listened to feedback and renamed the Hyper-V snapshot to checkpoint in WS2012 R2. Now it matches SCVMM and shouldn’t be confused with other kinds of snapshot.

Volume Shadow Copy Service (VSS) Snapshot

This kind of snapshot is an NTFS volume snapshot that allows Windows to back up hot files that are in use (e.g. virtual machines) or databases with data/log consistency (e.g. SQL Server or Exchange).

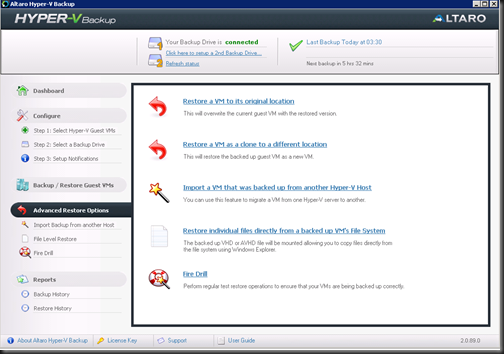

In the Hyper-V world, you can back up VMs (even running ones) using Hyper-V VSS-compatible backup products such as DPM 2010 or Altaro. VSS creates a snapshot of the NTFS volume that contains the running VMs’ files, and the backup product then reads from that snapshot.

This snapshot is a VSS snapshot, not a Hyper-V Snapshot. You won’t see it in Hyper-V Manager or in SCVMM. It exists purely as a hot file backup mechanism.

Interestingly, whereas Hyper-V snapshots may not be supported by many applications, this kind of backup can be, e.g. by SQL Server and Exchange. However, some services, such as Domain Controllers, do not support restoring this kind of backup (in AD it causes USN rollback).

When I’m asked for advice, I tell people to use this kind of backup to “snapshot” a VM instead of Hyper-V snapshots. There isn’t the pain/mess of mismanaging VHDs, AVHDs and merges, and it is supported by almost every app you’ll need in a VM.
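If it helps to picture how a backup can read a stable view of a volume while the VMs keep writing to it, here is a toy copy-on-write model. Treat it as a sketch under assumptions: copy-on-write is one common way to implement a volume snapshot, and `ToyVolume` and its methods are hypothetical names for illustration, not anything from the VSS API.

```python
from typing import Dict, Optional


class ToyVolume:
    """Hypothetical block store; real VSS works on NTFS volumes, not dicts."""

    def __init__(self, blocks: Dict[int, bytes]) -> None:
        self.blocks: Dict[int, bytes] = dict(blocks)
        # Original contents of blocks overwritten since the snapshot (None = no snapshot).
        self._saved: Optional[Dict[int, Optional[bytes]]] = None

    def create_snapshot(self) -> None:
        # Nothing is copied up front; only future overwrites are tracked.
        self._saved = {}

    def write(self, block_no: int, data: bytes) -> None:
        # Copy-on-write: preserve the old block the first time it is overwritten.
        if self._saved is not None and block_no not in self._saved:
            self._saved[block_no] = self.blocks.get(block_no)
        self.blocks[block_no] = data

    def read_snapshot(self, block_no: int) -> Optional[bytes]:
        # The backup reads preserved blocks first, then untouched live blocks.
        if self._saved is None:
            raise RuntimeError("no snapshot exists")
        if block_no in self._saved:
            return self._saved[block_no]
        return self.blocks.get(block_no)


volume = ToyVolume({0: b"vhd-header", 1: b"old-data"})
volume.create_snapshot()
volume.write(1, b"new-data")                            # the VM keeps running and writing
backup = [volume.read_snapshot(n) for n in (0, 1)]      # the backup sees the frozen view
```

The running VM sees `new-data` in block 1 while the backup still reads `old-data`. Nothing here shows up as a Hyper-V snapshot: there are no AVHDs to merge, and the frozen view is only needed for as long as the backup takes.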

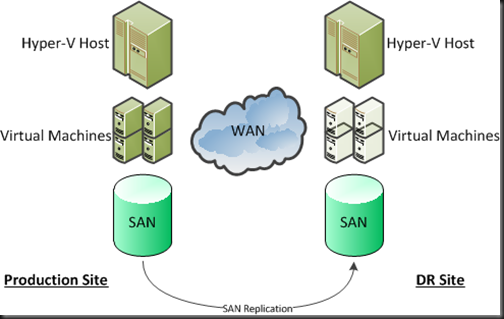

SAN LUN Snapshot

In a SAN, you can create a snapshot of a LUN. This duplicates the LUN. How the duplication works depends on the SAN.

The VSS Snapshot mechanism can leverage this to speed up backup by using a hardware VSS provider supplied by the SAN manufacturer. Instead of doing a software-based VSS snapshot, it will create a SAN snapshot of the relevant LUN, and that can then be used by the VSS-enabled backup product. It’s especially useful for Hyper-V clusters with CSV where you want to minimise the amount of Redirected I/O (Mode or Access).

This week I heard that some are telling customers to use a manually created SAN LUN snapshot as a form of backup/restore on an hourly basis. That’s painful, and it’s probably consuming expensive disk; they’d be better off using an efficient backup solution that writes to more economical disk.

Fixing the Confusion

As you can imagine, all this overuse of the term “snapshot” doesn’t help. It’s one thing for hardware vs. Microsoft, but it’s another thing when Hyper-V, SCVMM, and Windows VSS cause the confusion. If I had one suggestion then it would be this:

Change the term “Snapshot” in Hyper-V to “Checkpoint”. VSS isn’t going to change, and you’re not going to get the SAN vendors to change. Doing this would also increase consistency in Windows Server 8.