This post will show you how to migrate a cloud service-based virtual machine deployment from Classic Azure (Service Management or SM) to a different Azure subscription as an Azure Resource Manager (ARM) deployment. One example might be where you want to move virtual machines from a Direct/MOSP (credit card, trial, MSDN), Open, or EA subscription to a Cloud Solution Provider (CSP) subscription.

My focus is on migrating to CSP, but you can use this process to move VMs into ARM in any different subscription. Note that Microsoft has an official solution for migrating classic machines into ARM in the same subscription, which can offer zero downtime if you have used classic VNETs.

The Old Deployment

I have deployed a collection of virtual machines in a legacy style subscription. It’s a pretty classic deployment that was managed via the classic portal at https://manage.windowsazure.com. The virtual machines are stored on a single standard LRS storage account, they are connected to a VNet, and a cloud service is used to NAT (endpoints) the virtual machines.

One of the machines has endpoints for SMTP, another has endpoints for HTTP and HTTPS, and all of the machines have the usual RDP and remote management endpoints.

If you browse this deployment in the newer Azure Portal at https://portal.azure.com you’ll see that it’s deployed in resource groups, but the classic portal has no understanding of these groups, so there’s actually a messy collection of 3 default groups.

Migration Strategy

I’ve decided that I’m going to move these resources to my new CSP subscription using the free (unsupported) migAz toolset. I have data transactions happening on some of my machines, so I’m worried that the disk copy will leave me with data loss after a switchover. So here’s my plan:

- I will leave my original system running, and let users continue to use the old system during the migration.

- The new CSP deployment will be on a different network address.

- After the copy, I will create a VNet-to-VNet connection (requires a dynamic/route-based gateway, which might be incompatible with your on-premises VPN device) between the non-CSP and the CSP deployments.

- I will use tools like RoboCopy and SQL sync to keep the newer system updated while I test the new system.

- I will switch users over to the new system when I am happy with it and can schedule a very brief maintenance window.

- I will remove the old deployment after I am satisfied that the migration worked.

Alternatively, I could schedule a maintenance window, shut down the older deployment, do the migration/copy, and redirect users to the new deployment as quickly as I can.

Note that my cloud service has a reserved IP address, but I cannot bring that IP address with me to the CSP subscription. At some point, I am going to have to redirect users to a new static public IP address that is assigned to an ARM load balancer – probably by changing public DNS records. Any ExpressRoute/VPN connections will also have to be rebuilt to connect to a new gateway – I will have to manually deploy the gateway.

Preparation

First things first: document your deployment and see if you can find anything that isn’t compatible with ARM or that you might need to re-create afterwards. We don’t have a way to migrate an Azure Backup vault at the moment, so document your Azure VM backup policies so that you can recreate them in the CSP subscription using a recovery services vault.

Next you need to update and get some tools on your PC:

- You need the latest version of the Azure and AzureRM PowerShell modules.

- Download the migAz zip file and extract it – mine is in C:\Temp\migAz.

- Launch PowerShell with elevated rights and allow unsigned scripts to run.

Time to start migrating!

Export ARM Template

The migAz tool creates an ARM template (JSON file) that describes how your non-ARM deployment would look if it was deployed in ARM (or CSP). This includes converting a cloud service into a load balancer, and converting endpoints and load balanced endpoints into NAT rules and load balancing rules (it really is quite clever). We can modify this file (optional). Then we import the file into CSP to create the machines, the networking components, and (importantly) the storage account – the disks aren’t copied yet, but we’ll do that later.

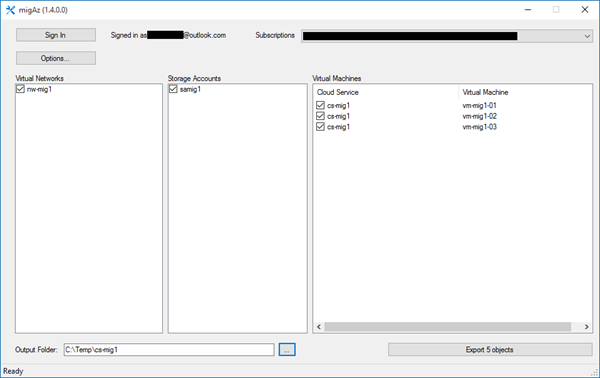

Browse to wherever you extracted migAz and run migAz.exe. Then:

- Log into your old subscription using suitable admin credentials.

- Select the subscription that you want to migrate from.

- You can click Options to tweak the export.

- Select the virtual network(s), storage account(s), and virtual machine(s) that you want to migrate.

- Enter an output folder where you want to store the created JSON files.

- Click Export.

The JSON Files

It takes a few minutes for migAz to interrogate your old subscription to build up 2 JSON files:

- CopyBlobDetails.json: This file contains details of the virtual hard disks that must be copied to the CSP subscription. This includes the source URIs and the storage access keys – so keep this file safe because anyone can use these details to download the disks!

- Export.json: This file is the meat of the export, containing the template that will be used to redeploy diskless machines with all of their ARM dependencies.

We’ll return to CopyBlobDetails.json later on, so let’s focus on Export.json. If you open this file you’ll find it describes everything that will be created in ARM when you import it into your CSP subscription. You can edit this file to make changes. Maybe you want to tweak NAT rules or add machines. I want to make a few changes to my JSON file. Everything that follows in this section is optional!
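If you would like an overview of the template before you edit it, a few lines of Python can list what it will create. This is only a sketch and not part of migAz: it assumes nothing beyond the standard ARM template layout (a top-level `resources` array whose entries have `type` and `name`), and the function names and the sample path are my own.

```python
import json
from collections import defaultdict


def summarise_template(template: dict) -> dict:
    """Group the resource names in an ARM template by resource type."""
    summary = defaultdict(list)
    for resource in template.get("resources", []):
        rtype = resource.get("type", "<unknown>")
        summary[rtype].append(resource.get("name", "<unnamed>"))
    return dict(summary)


def summarise_file(path: str) -> dict:
    """Load a template file (such as Export.json) and summarise it."""
    # utf-8-sig tolerates a byte-order mark if the file was saved with one
    with open(path, encoding="utf-8-sig") as handle:
        return summarise_template(json.load(handle))


# Example (hypothetical path):
# for rtype, names in summarise_file(r"C:\Temp\cs-mig1\export.json").items():
#     print(rtype, names)
```

Running it again after your edits is a quick way to confirm that the renamed resources look the way you expect.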

Before you go anywhere near an editor, copy the two JSON files to allow you to undo edits and to have a reference to the original configuration.
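One way to take those copies is a small script. The sketch below is my own helper (the name `backup_files` and the `.bak` naming are assumptions, not anything that migAz provides); it copies both files next to the originals with a timestamp in the name.

```python
import shutil
from datetime import datetime
from pathlib import Path


def backup_files(folder: str, names=("Export.json", "CopyBlobDetails.json")) -> list:
    """Copy each named file in folder to <name>.<timestamp>.bak; return the new paths."""
    stamp = datetime.now().strftime("%Y%m%d-%H%M%S")
    backups = []
    for name in names:
        source = Path(folder) / name
        target = source.with_name(f"{source.name}.{stamp}.bak")
        shutil.copy2(source, target)  # copy2 also preserves file timestamps
        backups.append(target)
    return backups


# Example (hypothetical folder):
# backup_files(r"C:\Temp\cs-mig1")
```

Remember that the copy of CopyBlobDetails.json contains the storage access keys too, so keep the backups as safe as the originals.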

When I browsed the file I noticed that the load balancer was going to be assigned a dynamic public IP address resource. I want a static IP address for external access and simple public DNS management. I also noticed that the name of the IP address will break my desired naming standard and that I want to change the domainNameLabel.

So I will edit the file and make two changes to the publicIPAddresses resource:
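If you prefer to script this kind of edit rather than type it, it could look something like the sketch below. It assumes the public IP resource uses the usual ARM schema (`publicIPAllocationMethod` and `dnsSettings.domainNameLabel` under `properties`) and that the resource name is a literal string in the export. The function name and the example values `pip-mig1` and `mig1` are hypothetical.

```python
def make_public_ip_static(template: dict, old_name: str, new_name: str, dns_label: str) -> int:
    """Rename a public IP resource, make it static, and set its DNS label.

    Returns the number of resources that were changed.
    """
    changed = 0
    for resource in template.get("resources", []):
        if resource.get("type") != "Microsoft.Network/publicIPAddresses":
            continue
        if resource.get("name") != old_name:
            continue
        resource["name"] = new_name
        props = resource.setdefault("properties", {})
        props["publicIPAllocationMethod"] = "Static"
        props.setdefault("dnsSettings", {})["domainNameLabel"] = dns_label
        changed += 1
    return changed


# Example with hypothetical names:
# make_public_ip_static(template, "cs-mig1", "pip-mig1", "mig1")
```

This only touches the public IP resource itself. Anything else in the template that refers to the old name still has to be updated separately.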

While I’m at it, I’m also going to rename the load balancer (under loadBalancers). Note that I also need to change the dependencies to match the new name of the public IP address:

There are loads of references (load balancer or NAT rules) to the name of the load balancer.

You need to update these references. The easy way is to do a search and replace. My old references were loadBalancers/cs-mig1 so I replaced them with loadBalancers/lb-mig1 to match the new name of the load balancer (above).
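Any text editor can do this, but a scripted replace has the advantage that it can confirm the file is still valid JSON before saving. A minimal sketch, with a helper name of my own choosing:

```python
import json
from pathlib import Path


def replace_in_json_file(path: str, old: str, new: str) -> int:
    """Replace every occurrence of old with new in a JSON file; return the count."""
    file = Path(path)
    text = file.read_text(encoding="utf-8-sig")
    count = text.count(old)
    updated = text.replace(old, new)
    json.loads(updated)  # raises ValueError if the edit broke the JSON
    file.write_text(updated, encoding="utf-8")
    return count


# Example using the names from this post (the path is from my own setup):
# replace_in_json_file(r"C:\Temp\cs-mig1\export.json",
#                      "loadBalancers/cs-mig1", "loadBalancers/lb-mig1")
```

Searching for the longer string (loadBalancers/cs-mig1 rather than just cs-mig1) keeps the replace from touching other resources that happen to share the cloud service name.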

A load balancer requires an availability set so I’m renaming the new AV set to match my new naming standards:

There are loads of dependencies on this availability set, so do a find/replace to update those dependencies with the new name.

One possible gotcha is that the storage account won’t have a globally unique name (required). The options of migAz are configured by default to take the original storage account name and add a v2 to it for the ARM deployment. Make sure that this will still be unique. If it’s not, then you can edit the JSON file. You could also opt to change the resiliency level. Make sure that you edit CopyBlobDetails.json to make the same change.
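Global uniqueness can only be checked against Azure itself (I believe the AzureRM module's Get-AzureRmStorageAccountNameAvailability cmdlet does this), but the format rules can be checked offline: a storage account name must be 3 to 24 characters, using lowercase letters and numbers only. Adding v2 to a long name can push it over the limit. A small sketch, with function names that are my own:

```python
import re

# Azure storage account names: 3-24 characters, lowercase letters and digits only
_STORAGE_NAME = re.compile(r"[a-z0-9]{3,24}")


def is_valid_storage_account_name(name: str) -> bool:
    """Check the format rules only; this says nothing about global uniqueness."""
    return _STORAGE_NAME.fullmatch(name) is not None


def proposed_v2_name(original: str, suffix: str = "v2") -> str:
    """Mimic the default behaviour described above: original name plus 'v2'."""
    return original + suffix


# Example with a hypothetical account name:
# is_valid_storage_account_name(proposed_v2_name("stomig1"))
```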

I mentioned earlier that one of my plans was to change the network address of my deployment so that I could connect the non-ARM and the CSP deployments together to enable data synchronization before the production switchover. My old network is 10.0.0.0/16. I want the new network to be 10.1.0.0/16 because this will allow routing between the two VNETs if I create a VNET-to-VNET VPN. I will also need to update my subnet(s) and any DNS servers that are on the VNET.

My changes are:

All of my machines have reserved IP addresses so I’m going to do a find/replace to replace 10.0.0 with 10.1.0.
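Two things are worth checking with this kind of change: that the old and new address spaces really don't overlap, and that the find/replace only hits real addresses. The sketch below uses Python's standard `ipaddress` module for the first check and a slightly stricter replace for the second. The function names are mine, and the prefixes are the ones from my deployment.

```python
import ipaddress
import re


def networks_overlap(a: str, b: str) -> bool:
    """True if the two CIDR ranges share any addresses."""
    return ipaddress.ip_network(a).overlaps(ipaddress.ip_network(b))


def renumber(text: str, old_prefix: str = "10.0.0.", new_prefix: str = "10.1.0.") -> str:
    """Replace old_prefix with new_prefix only where it starts an IPv4 address."""
    # The lookbehind stops the tail of something like 110.0.0.5 from matching
    pattern = re.compile(r"(?<![\d.])" + re.escape(old_prefix))
    return pattern.sub(lambda _match: new_prefix, text)


# Example:
# networks_overlap("10.0.0.0/16", "10.1.0.0/16")
# renumber('"privateIPAddress": "10.0.0.4"')
```

Note that this only rewrites addresses that start with 10.0.0. If you have subnets elsewhere in the old range, they need their own replace.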

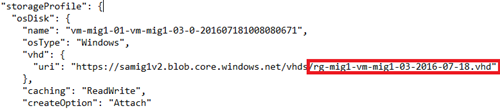

My naming stuff is almost all completely fixed up. Almost. What’s left? The virtual hard disks in the new CSP deployment are all going to be named after the original cloud service. My cloud service was called cs-mig1. I can see that the disks are called cs-mig1*.vhd.

I am going to change the names to match the name of my new resource group (which I will manually create later):

But that’s not enough for the disks. You will also need to edit CopyBlobDetails.json because that file contains instructions on how to name the virtual hard disks’ blobs when they are copied to the new CSP subscription.

Tweak the names to match your changes in export.json.
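A mismatch between the two files is easy to introduce and annoying to find later, so a quick cross-check can help. The sketch below deliberately does not rely on the field names inside CopyBlobDetails.json (I am not assuming any particular structure). It simply pulls every `*.vhd` file name out of the raw text of each file and reports any name that the template expects but the copy details never mention. It will miss names that are built from template variables, so treat it as a rough check only.

```python
import re

# Matches a blob file name ending in .vhd, e.g. the last segment of a disk URI
_VHD_NAME = re.compile(r"[A-Za-z0-9._-]+\.vhd", re.IGNORECASE)


def vhd_names(text: str) -> set:
    """Return the set of VHD file names mentioned anywhere in text."""
    # Case is preserved on purpose: blob names are case-sensitive
    return set(_VHD_NAME.findall(text))


def missing_from_copy_plan(export_text: str, copy_details_text: str) -> set:
    """VHD names the template refers to that the copy details never mention."""
    return vhd_names(export_text) - vhd_names(copy_details_text)


# Example (hypothetical paths):
# export_text = open(r"C:\Temp\cs-mig1\export.json", encoding="utf-8-sig").read()
# copy_text = open(r"C:\Temp\cs-mig1\copyblobdetails.json", encoding="utf-8-sig").read()
# print(missing_from_copy_plan(export_text, copy_text))
```

An empty result means every disk name in the template also appears somewhere in the copy details.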

Now when I search export.json for the old cloud service name (cs-mig1) there are no more references to the cloud service, and I have configured my preferred ARM naming standard for every resource (prefix-name-optional number).
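That final search can also be scripted, which is handy if you end up editing the file more than once. A minimal sketch (the helper name is mine) that reports each line still containing the old name:

```python
def find_leftovers(text: str, old_name: str) -> list:
    """Return (line_number, line) pairs for every line that still contains old_name."""
    return [
        (number, line.strip())
        for number, line in enumerate(text.splitlines(), start=1)
        if old_name in line
    ]


# Example (hypothetical path):
# text = open(r"C:\Temp\cs-mig1\export.json", encoding="utf-8-sig").read()
# for number, line in find_leftovers(text, "cs-mig1"):
#     print(number, line)
```

Only run this against export.json. CopyBlobDetails.json is expected to keep the old names in its source URIs, because that is where the disks are being copied from.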

Create the ARM Deployment

Now the fun begins! Launch your Azure PowerShell window and sign into your CSP / ARM subscription using:

Login-AzureRMAccount

View the subscriptions that your account has access to:

Get-AzureRMSubscription

Copy the ID of the subscription that you want to deploy the VMs into, and run:

Select-AzureRMSubscription -SubscriptionID xxxxxxxx-yyyy-zzzz-aaaa-bbbbbbbbbbbb

You should then create a new resource group in the Azure region of your choice. My naming standard will have me create a group called rg-mig1, and I’ll create it in Dublin.

New-AzureRmResourceGroup -Location NorthEurope -Name "rg-mig1"

Now is the moment of truth. I am going to import my (heavily modified) export.json file into the CSP subscription to create all of my virtual machines and their dependencies.

New-AzureRmResourceGroupDeployment -Name "rg-mig1" -ResourceGroupName "rg-mig1" -TemplateFile "C:\Temp\cs-mig1\export.json" -Verbose

Note that the disks have not been copied yet, so there will be a bunch of errors at the end of this import. The errors refer to missing virtual hard disks.

Unable to find VHD blob with URI

We will fix those errors later.

Copy Virtual Hard Disks

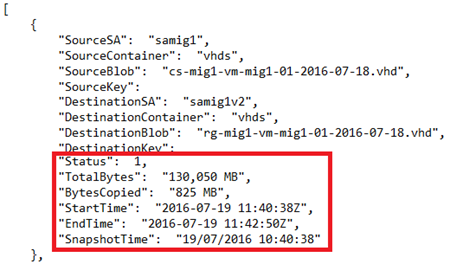

Browse (in PowerShell) to where you extracted the migAz zip file. You are going to run a script called BlobCopy.ps1, and point it at CopyBlobDetails.json. This script will create a snapshot of the disks in the source subscription, and copy the disks (using the Azure network) directly to the new storage account in the CSP/ARM subscription.

.\BlobCopy.ps1 -ResourcegroupName "rg-mig1" -DetailsFilePath "C:\Temp\cs-mig1\copyblobdetails.json" -StartType StartBlobCopy

You can track the progress of the copy using:

.\BlobCopy.ps1 -ResourcegroupName "rg-mig1" -DetailsFilePath "C:\Temp\cs-mig1\copyblobdetails.json" -StartType MonitorBlobCopy

If you paid attention, you might have noticed that CopyBlobDetails.json had fields for tracking the copy. You can get a bunch of information from that file about each of the disk copy operations.

Fix Up Virtual Machines

The previous creation of the virtual machines had disk-related errors. The disks are in place now, so we can re-run the import to fix up the machines.

New-AzureRmResourceGroupDeployment -Name "rg-mig1" -ResourceGroupName "rg-mig1" -TemplateFile "C:\Temp\cs-mig1\export.json" -Verbose

Verify the CSP/ARM Deployment

You should find that your virtual machines are now running in the ARM / CSP subscription. Note how everything is in the single rg-mig1 resource group and has my preferred naming standard:

The load balancer is configured with a public IP address with static configuration:

The inbound NAT rules have been copied over:

And the network has a new network address as I required to enable a VNET-to-VNET connection with the original deployment.

Troubleshooting

The migAz tool creates some log files in %USERPROFILE%\appdata\Local. Look for migAz-<YYYYMMDD>.log and migAz-XML-<YYYYMMDD>.log.
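If that folder is as cluttered as mine, a short script can pick out the logs for you. The sketch below is my own helper. It assumes only the location and file-name pattern given above, and lists the matching files with the most recently modified first.

```python
import glob
import os


def find_migaz_logs(base_dir=None) -> list:
    """Return migAz log files under base_dir, most recently modified first."""
    if base_dir is None:
        # Default location named in this post: %USERPROFILE%\AppData\Local
        base_dir = os.path.join(os.path.expanduser("~"), "AppData", "Local")
    # One pattern covers both migAz-<date>.log and migAz-XML-<date>.log
    pattern = os.path.join(base_dir, "migAz-*.log")
    return sorted(glob.glob(pattern), key=os.path.getmtime, reverse=True)


# Example:
# for path in find_migaz_logs():
#     print(path)
```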

If you have issues during the import of export.json then you need to pay attention to the errors in the PowerShell screen and manually troubleshoot the export file. In my case, my heavily edited export.json had a typo in one of the renamed virtual hard disks so it didn’t match what was copied (details in CopyBlobDetails.json). The fix was easy:

- The error was clear that the specified disk (with the wrong name) didn’t exist.

- I corrected the JSON file.

- I removed the new virtual machine from the CSP subscription.

- I re-ran the import, which re-created that machine and attached the disk (no duplicates of existing resources are created).

Post-Migration

So what’s next?

- Re-deploy Azure Backup using the recovery services vault to protect my VM workloads.

- Deploy a gateway subnet and gateway.

- Create a VNET-to-VNET VPN with the old deployment to allow data synchronization.

- Test the new deployment.

- Schedule a maintenance window to switch production over to the new deployment in CSP.

- Change DNS, etc, to redirect users to the CSP deployment.

- Optionally reverse data synchronization.

- Remove the old non-CSP deployment after a suitable waiting period, and remove all inter-VNET comms.

Summary

If you want migAz to be easy, then it can be – just don’t modify the JSON files unless your new storage account name won’t be globally unique. It’s actually a pretty simple process:

- Export

- Import

- Copy disks

- Import (fix up)

The only complexity in my migration was caused by my desire to implement naming standards across all of my ARM resources.

The migAz toolset might not be supported, but it is the only way that I know of to migrate existing Azure virtual machine workloads into a CSP subscription. It works pretty well, so I’m happy to use and recommend it.

We just published the new migAz ARM and migAz AWS version. Enjoy!

I cannot find migAz.exe.

It’s the very first result in my Google search.

I am having a discussion with my CSP partner. They say that the public IP address of my VM will stay the same and be migrated, and that only the IP addresses of cloud services are not migrated. This confuses me because I think reserved IP addresses cannot be migrated, including those of virtual machines. Am I right?

Using the approach I documented here, you are building new VMs and IPs, so the IPs will change. If you use the “platform supported method” (moving resources between subscriptions in the same tenant) then public IPs will not change because they are the same VMs and they stay online.