Speaker: Greg Cusanza, Senior PM, Microsoft.

Part 1 covers getting things going from scratch. Part 2 will be about Hybrid Networking (configuring network fabric for HNV, network virtualization gateways, tenant self-service).

Recap on VMM 2012 SP1

- Connectivity: multi-tenancy, isolation, mobility, bring-your-own-IP. Result: VM Networks.

- Capability: QoS, security, optimizations, monitors, extensibility. Result: Logical Switch

Microsoft also worked on building a partner ecosystem. Moving on …

Step 1: Plan

- Design: draw your network. Ask the questions up front so you have the answers before you build.

- Hardware: use hardware that supports your design. Iterate back on your design. Configure the hardware.

- VMM configuration: Create logical objects. Configure hosts. Add tenants. Deploy workloads

Network Design

Question: how do I provide isolation?

- Data center isolation: separation of infrastructure traffic as security boundary and for QoS

- Tenant isolation

You can do this via:

- Physical separation: physical switches and adapters for each type of traffic

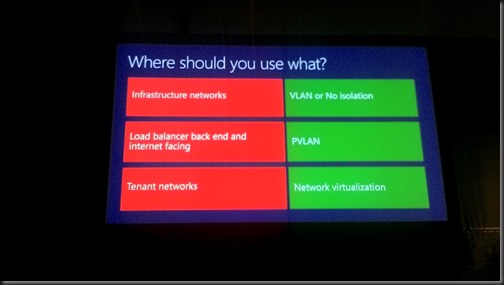

- Layer 2: VLAN: a tag is applied to packets to control forwarding. Very mature and well understood. Limited in number (the 12-bit ID gives 4096 values, of which 4094 are usable) and becomes very complex to manage after a while.

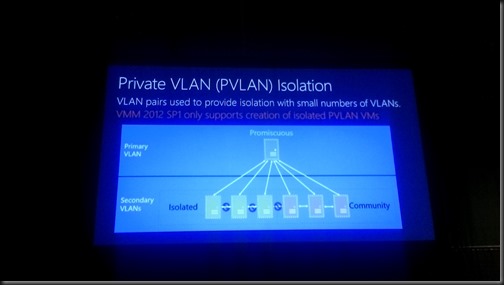

- Layer 2: PVLAN: primary and secondary tags are used to isolate clients while still giving them access to shared services. Limited support in VMM 2012 SP1.

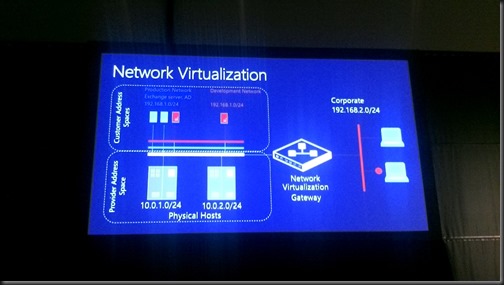

- HNV (Hyper-V Network Virtualization): uses NVGRE encapsulation to isolate tenants.
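The 4096 ceiling on VLANs comes from the VLAN ID being a 12-bit field in the IEEE 802.1Q tag. The minimal Python sketch below builds and parses that 4-byte tag to make the limit concrete. The function names are illustrative only and say nothing about how Hyper-V or VMM actually tag traffic.

```python
import struct

TPID_8021Q = 0x8100  # EtherType value that marks an 802.1Q-tagged frame


def vlan_tag(vid: int, pcp: int = 0, dei: int = 0) -> bytes:
    """Build the 4-byte 802.1Q tag that sits after the source MAC address."""
    # The VLAN ID is 12 bits; 0 and 4095 are reserved, leaving 1-4094
    if not 1 <= vid <= 4094:
        raise ValueError(f"VLAN ID {vid} is outside the usable range 1-4094")
    if not 0 <= pcp <= 7 or dei not in (0, 1):
        raise ValueError("PCP must be 0-7 and DEI must be 0 or 1")
    tci = (pcp << 13) | (dei << 12) | vid  # Tag Control Information
    return struct.pack("!HH", TPID_8021Q, tci)


def parse_vid(tag: bytes) -> int:
    """Extract the 12-bit VLAN ID from an 802.1Q tag."""
    tpid, tci = struct.unpack("!HH", tag[:4])
    if tpid != TPID_8021Q:
        raise ValueError("not an 802.1Q tag")
    return tci & 0x0FFF


print(vlan_tag(100).hex())  # 81000064
print(2 ** 12)              # 4096 possible IDs, 4094 of them usable
```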

You can simulate a PVLAN community in VMM by using network virtualization on the back end of your isolated PVLANs, i.e. a common VM Network.
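Under the standard PVLAN rules, every port sits in one primary VLAN and is either promiscuous, isolated, or a member of a community. The sketch below encodes those reachability rules as an illustrative model, not VMM's implementation; the class and field names are hypothetical. It shows why a community has to be simulated when only isolated ports are in use: two isolated ports can never reach each other at layer 2.

```python
from dataclasses import dataclass
from typing import Optional

MODES = ("promiscuous", "isolated", "community")


@dataclass(frozen=True)
class PvlanPort:
    primary: int                     # primary VLAN ID shared by the whole PVLAN domain
    mode: str                        # one of MODES
    secondary: Optional[int] = None  # secondary VLAN ID (None for promiscuous ports)

    def __post_init__(self):
        if self.mode not in MODES:
            raise ValueError(f"unknown PVLAN mode {self.mode!r}")


def can_talk(a: PvlanPort, b: PvlanPort) -> bool:
    """Layer 2 reachability between two ports under standard PVLAN rules."""
    if a.primary != b.primary:
        return False  # different PVLAN domains never share layer 2
    if "promiscuous" in (a.mode, b.mode):
        return True   # shared services (gateway, DNS, backup) reach everyone
    if a.mode == b.mode == "community":
        return a.secondary == b.secondary  # members of the same community can talk
    return False      # isolated ports only ever reach promiscuous ports


gateway = PvlanPort(100, "promiscuous")
tenant_a = PvlanPort(100, "isolated", 101)
tenant_b = PvlanPort(100, "isolated", 101)
print(can_talk(tenant_a, tenant_b))  # False
print(can_talk(tenant_a, gateway))   # True
```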

Network Virtualization: you can create networks on the fly that are abstracted from the physical VLAN that they are connected to.
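NVGRE (RFC 7637) carries the tenant's Ethernet frame inside GRE, and the GRE Key field holds a 24-bit Virtual Subnet ID (VSID) plus an 8-bit FlowID. That 24-bit ID, about 16 million values compared with 4094 usable VLAN IDs, is what makes it practical to create isolated networks on the fly. Here is a minimal sketch of the GRE portion only (the outer Ethernet and IP headers are omitted); the helper names are hypothetical.

```python
import struct

GRE_FLAGS_KEY_PRESENT = 0x2000  # only the K bit set: no checksum, no sequence number, version 0
PROTO_TEB = 0x6558              # Transparent Ethernet Bridging: the payload is a full L2 frame


def nvgre_header(vsid: int, flow_id: int = 0) -> bytes:
    """Pack the 8-byte GRE header that precedes the tenant's Ethernet frame."""
    if not 0 <= vsid < 2 ** 24:
        raise ValueError("VSID must fit in 24 bits")
    if not 0 <= flow_id < 2 ** 8:
        raise ValueError("FlowID must fit in 8 bits")
    key = (vsid << 8) | flow_id  # Key field: VSID in the top 24 bits, FlowID in the low 8
    return struct.pack("!HHI", GRE_FLAGS_KEY_PRESENT, PROTO_TEB, key)


def vsid_of(header: bytes) -> int:
    """Recover the tenant's virtual subnet ID from an NVGRE header."""
    flags, proto, key = struct.unpack("!HHI", header[:8])
    if not flags & GRE_FLAGS_KEY_PRESENT or proto != PROTO_TEB:
        raise ValueError("not an NVGRE header")
    return key >> 8


print(nvgre_header(5001).hex())  # 2000655800138900
print(2 ** 24)                   # 16777216 possible VSIDs
```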

No Isolation

- Why: provides direct access to the logical network. VMM picks the right VLAN based on placement.

- Upgrades to SP1: pre-SP1 VMs have direct connectivity to the logical network by default.

- Direct access to infrastructure: think of System Center running in VMs.

Where should you use what?

Address spaces

- Size based on broadcasts and address utilization

- Can use DHCP or static addressing

- IPv4 and IPv6: you have to choose one or the other when using HNV
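Sizing "based on broadcasts and address utilization" comes down to picking the smallest subnet that fits the expected hosts plus growth, since a bigger subnet is also a bigger broadcast domain. A minimal IPv4 sketch using Python's `ipaddress` module; the growth percentage is an assumed planning input, not a VMM setting, and the function names are hypothetical.

```python
import ipaddress


def smallest_ipv4_prefix(hosts: int, growth_pct: int = 0) -> int:
    """Longest prefix (smallest subnet) with room for `hosts` plus growth headroom."""
    if hosts < 1:
        raise ValueError("need at least one host")
    extra = -(-hosts * growth_pct // 100)  # integer ceiling of the growth allowance
    # Network and broadcast addresses can't be assigned, hence the +2
    needed = hosts + extra + 2
    return 32 - (needed - 1).bit_length()


def first_subnet(supernet: str, hosts: int, growth_pct: int = 0) -> ipaddress.IPv4Network:
    """Carve the first right-sized subnet out of a larger address space."""
    prefix = smallest_ipv4_prefix(hosts, growth_pct)
    return next(ipaddress.ip_network(supernet).subnets(new_prefix=prefix))


print(smallest_ipv4_prefix(254))         # 24
print(smallest_ipv4_prefix(255))         # 23
print(first_subnet("10.10.0.0/16", 60))  # 10.10.0.0/26
```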

SR-IOV

Great performance and scalability. The trade-off is that you lose vSwitch management features, and there is limited support for Intelligent Placement.

RDMA

Great for fast storage. Can’t be used on NICs that are bound to a virtual switch.

Teamed Adapters

3 models:

- Non-converged: physical NICs for every task/role/network. A cabling nightmare.

- Converged: use fewer NICs and QoS to converge roles.

- Converged with RDMA: see my recent design
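In the converged model, QoS replaces physical separation: each role gets a relative minimum-bandwidth weight, and the weights only come into play when the link is congested. The arithmetic is a proportional split, sketched below with made-up role names and weights for a converged 10 GbE link (treated as a single pipe for simplicity).

```python
def min_bandwidth_shares(weights: dict[str, int], link_gbps: float) -> dict[str, float]:
    """Bandwidth each role is guaranteed when the converged link is saturated."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("at least one role needs a positive weight")
    # Each role's floor is its weight as a fraction of all weights on the link
    return {role: link_gbps * w / total for role, w in weights.items()}


# Hypothetical weights for a converged 10 GbE link
weights = {"Management": 10, "Cluster": 10, "LiveMigration": 30, "VMs": 50}
for role, gbps in min_bandwidth_shares(weights, 10).items():
    print(f"{role}: {gbps:.1f} Gbps")
# Management: 1.0 Gbps
# Cluster: 1.0 Gbps
# LiveMigration: 3.0 Gbps
# VMs: 5.0 Gbps
```

When the link isn't busy, any role can use the idle bandwidth; the weights are floors under contention, not caps.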

Networks in VMM

- Logical network: models the physical network. Organizes subnets and VLANs into named objects that can be scoped to a site. Container for fabric static IP address pools. VM networks are created on top of logical networks.

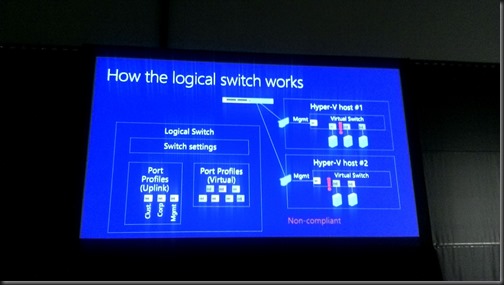

- Logical switch: central container for vSwitch settings. Consistent port profiles across the data centre. Consistent extensions. Compliance enforcement.
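The logical network hierarchy (a logical network, network sites scoped to host groups, and subnet/VLAN pairs within each site) can be pictured as a small data model. The class names below are hypothetical stand-ins, not VMM's object model or PowerShell types, and host-group inheritance is ignored for brevity.

```python
from dataclasses import dataclass, field


@dataclass
class SubnetVlan:
    subnet: str   # e.g. "172.16.1.0/24"
    vlan_id: int  # VLAN tag applied to traffic on this subnet


@dataclass
class NetworkSite:
    name: str
    host_groups: set[str]  # host groups this site is scoped to
    subnets: list[SubnetVlan] = field(default_factory=list)


@dataclass
class LogicalNetwork:
    name: str
    sites: list[NetworkSite] = field(default_factory=list)

    def subnets_for(self, host_group: str) -> list[SubnetVlan]:
        """Subnet/VLAN pairs that a host in `host_group` can use on this network."""
        return [sv for site in self.sites if host_group in site.host_groups
                for sv in site.subnets]


clustering = LogicalNetwork("Clustering", [
    NetworkSite("Clustering_SiteA", {"Site A hosts"}, [SubnetVlan("172.16.1.0/24", 101)]),
    NetworkSite("Clustering_SiteB", {"Site B hosts"}, [SubnetVlan("172.16.2.0/24", 102)]),
])
print(clustering.subnets_for("Site B hosts"))
# [SubnetVlan(subnet='172.16.2.0/24', vlan_id=102)]
```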

Demo

The demo uses VMM 2012 R2. First, he creates a management network in Fabric > Logical Networks, calls it Management, and chooses “One connected network”. He adds a network site that is scoped to a host group and uses a DHCP subnet (and VLAN ID). He then creates a Clustering logical network, also “One connected network”, with a network site containing a static IP subnet (and VLAN ID), plus a second network site with another static IP subnet (and VLAN ID).

He then creates IP pools for the two clustering network sites.
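A static IP pool is a range inside one network site's subnet that static addresses are handed out from. The sketch below is a hypothetical allocator showing the idea (range checks, reserved addresses, allocate and release). VMM's real pools also carry settings such as gateways, DNS, and VIP reservations, none of which are modeled here.

```python
import ipaddress


class StaticIpPool:
    """Hypothetical static IP pool bound to one subnet of a network site."""

    def __init__(self, subnet: str, start: str, end: str, reserved=()):
        self.subnet = ipaddress.ip_network(subnet)
        first = ipaddress.ip_address(start)
        last = ipaddress.ip_address(end)
        if first not in self.subnet or last not in self.subnet or first > last:
            raise ValueError("pool range must sit inside the subnet")
        skip = {ipaddress.ip_address(r) for r in reserved}
        count = int(last) - int(first) + 1
        # Free addresses are kept in ascending order so allocation is predictable
        self._free = [first + i for i in range(count) if first + i not in skip]
        self._in_use = set()

    def allocate(self) -> str:
        if not self._free:
            raise RuntimeError("IP pool exhausted")
        addr = self._free.pop(0)
        self._in_use.add(addr)
        return str(addr)

    def release(self, addr: str) -> None:
        ip = ipaddress.ip_address(addr)
        self._in_use.remove(ip)  # KeyError if the address was never handed out
        self._free.append(ip)
        self._free.sort()


pool = StaticIpPool("172.16.1.0/24", "172.16.1.10", "172.16.1.12", reserved=["172.16.1.11"])
print(pool.allocate())  # 172.16.1.10
print(pool.allocate())  # 172.16.1.12
```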

Next he creates an External (name/purpose) logical network, sets the network site and IP subnet/VLAN, and then creates an IP pool for External.

For VLAN-based tenant isolation, you can create a logical network with lots of VLANs/subnets in a network site. Each subnet requires its own IP pool.

VM Networks are required for connecting virtual NICs. For the tenant network (using VLANs) the VM Network will be assigned to a specific VLAN/subnet in the tenant logical network.
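With VLAN-based tenant isolation, the tenant logical network's site carries many VLAN/subnet pairs, and each VM Network is pinned to one of them. The hypothetical helper below carves a supernet into per-tenant subnets and pairs each with a VLAN ID, producing that VM Network to VLAN/subnet mapping. The naming convention and the /24 size are assumptions, and each resulting subnet would still need its own IP pool.

```python
import ipaddress


def tenant_vlan_plan(supernet: str, first_vlan: int, tenants: list[str],
                     prefix: int = 24) -> dict[str, tuple[int, str]]:
    """Map '<tenant> VM Network' to a (VLAN ID, subnet) pair, one per tenant."""
    subnets = ipaddress.ip_network(supernet).subnets(new_prefix=prefix)
    plan = {}
    for offset, (tenant, subnet) in enumerate(zip(tenants, subnets)):
        vlan = first_vlan + offset
        if vlan > 4094:
            raise ValueError("ran out of usable VLAN IDs")
        plan[f"{tenant} VM Network"] = (vlan, str(subnet))
    if len(plan) < len(tenants):
        raise ValueError("supernet too small for every tenant")
    return plan


for name, (vlan, subnet) in tenant_vlan_plan("10.100.0.0/16", 200, ["Contoso", "Fabrikam"]).items():
    print(name, vlan, subnet)
# Contoso VM Network 200 10.100.0.0/24
# Fabrikam VM Network 201 10.100.1.0/24
```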

No HNV in this demo. That’s in part 2.

What’s New in VMM 2012 R2?

All network devices (except load balancers) and services are now “network services” (virtual switch extension, network manager, network virtualization policy, gateway, physical switch). New interfaces:

- Network manager: separation of virtual switch and network management

- Physical switch

IPAM as a network manager:

- Inbox plugin for Microsoft IPAM

- Exchanges logical networks, sites, and subnets with IPAM instead of relying on the manual/scheduled script of 2012 SP1. The plugin ships in VMM 2012 R2.

You can track utilization and expand as required.
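Tracking utilization and expanding "as required" boils down to comparing addresses in use against pool size. The hypothetical check below flags pools at or above a threshold. It is not the IPAM plugin's logic, and the 80% threshold is an arbitrary example.

```python
def pools_to_expand(pools: dict[str, tuple[int, int]], threshold: float = 0.8) -> list[str]:
    """Names of pools whose utilization (used / total) has reached the threshold."""
    flagged = []
    for name, (used, total) in sorted(pools.items()):
        # Skip empty pools to avoid dividing by zero
        if total > 0 and used / total >= threshold:
            flagged.append(name)
    return flagged


usage = {"Management": (40, 50), "Clustering": (10, 100), "External": (95, 100)}
print(pools_to_expand(usage))  # ['External', 'Management']
```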

In-box plugin for the standards-based (CIM) network switch profile. Implemented and shipping with Arista EOS 4.12, common across Arista switching platforms.

Logical Switch

Why:

- Automatic team creation

- Configuration for data centre on a single object

- Live Migration is limited to within a logical switch. Remember that the logical switch is an abstraction, so this doesn’t limit Live Migration across a data center, etc.
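Because a logical switch is an abstraction, the same switch can be deployed to hosts in different rooms or sites, and those hosts all remain valid Live Migration targets. A hypothetical filter over a host inventory makes the rule concrete; the host and switch names are invented.

```python
def live_migration_targets(vm_nic_switches: list[str], hosts: dict[str, set[str]]) -> list[str]:
    """Hosts that have every logical switch the VM's vNICs are connected to."""
    needed = set(vm_nic_switches)
    # Physical location doesn't matter, only whether the logical switches match
    return sorted(host for host, switches in hosts.items() if needed <= switches)


hosts = {
    "dub-hv01": {"Converged"},
    "lon-hv07": {"Converged", "Storage"},
    "lab-hv01": {"Lab"},
}
print(live_migration_targets(["Converged"], hosts))  # ['dub-hv01', 'lon-hv07']
```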

VM Configuration

- VM Networks: All vNICs now only connect to VM Networks

- Port Classifications: a container for port profile settings, covering Hyper-V switch port settings and extension port profiles. Reusable. Exposed to tenants through the cloud (as a classification).

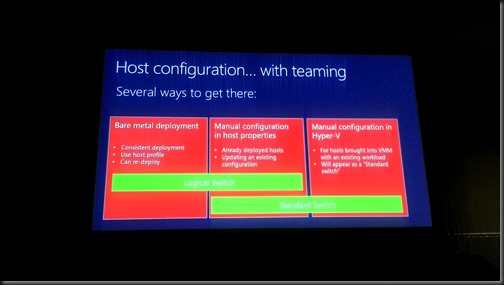
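A port classification is a reusable label, and the logical switch decides which port settings sit behind it. The sketch below shows that indirection: in this model the same classification name can resolve to different settings on different logical switches, while tenants only ever see the name. Switch names, classification names, and values are hypothetical, and only a few real Hyper-V port settings (minimum bandwidth weight, DHCP guard, router guard) are modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VirtualPortProfile:
    """A few Hyper-V switch port settings (a subset chosen for illustration)."""
    minimum_bandwidth_weight: int
    dhcp_guard: bool = True
    router_guard: bool = True


# Per logical switch: classification name -> port profile behind it (hypothetical values)
LOGICAL_SWITCH_PORTS = {
    "Converged": {
        "High bandwidth": VirtualPortProfile(minimum_bandwidth_weight=50),
        "Low bandwidth": VirtualPortProfile(minimum_bandwidth_weight=10),
    },
    "Lab": {
        "High bandwidth": VirtualPortProfile(minimum_bandwidth_weight=20, dhcp_guard=False),
    },
}


def tenant_choices(switch: str) -> list[str]:
    """Tenants see only classification names, never the settings behind them."""
    return sorted(LOGICAL_SWITCH_PORTS[switch])


def resolve(switch: str, classification: str) -> VirtualPortProfile:
    """The settings a vNIC actually gets on a given logical switch."""
    return LOGICAL_SWITCH_PORTS[switch][classification]


print(tenant_choices("Converged"))                               # ['High bandwidth', 'Low bandwidth']
print(resolve("Lab", "High bandwidth").minimum_bandwidth_weight)  # 20
```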

Demo (Logical Switch)

Everything is now called a port profile (virtual or uplink, depending on what you choose in the wizard). He creates an uplink port profile and configures the NIC teaming settings. You can see the new Dynamic mode there (supported only on WS2012 R2). There is also a new option, Host Default, which picks the default for that particular OS (Dynamic on WS2012 R2). He then configures the network sites that can use this uplink port profile. You do not need to tick Enable Hyper-V Network Virtualization in this wizard if your hosts will be WS2012 R2, but it does no harm if you do.
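The Host Default option defers the load-balancing choice to the host's OS. The sketch below models that resolution together with the rule that Dynamic requires WS2012 R2. The lookup table and function are hypothetical; the source only confirms Dynamic as the WS2012 R2 default, and the WS2012 entry (Address Hash) is our assumption for illustration.

```python
# Assumed per-OS defaults for "Host Default". Dynamic on WS2012 R2 comes from
# the session; the WS2012 value (Address Hash) is an assumption.
HOST_DEFAULT_LB = {
    "Windows Server 2012": "Address Hash",
    "Windows Server 2012 R2": "Dynamic",
}


def effective_lb_algorithm(profile_setting: str, host_os: str) -> str:
    """Resolve an uplink port profile's load-balancing choice for a given host OS."""
    if host_os not in HOST_DEFAULT_LB:
        raise ValueError(f"unsupported host OS {host_os!r}")
    if profile_setting == "Host Default":
        return HOST_DEFAULT_LB[host_os]
    if profile_setting == "Dynamic" and host_os != "Windows Server 2012 R2":
        raise ValueError("Dynamic load balancing requires Windows Server 2012 R2")
    return profile_setting


print(effective_lb_algorithm("Host Default", "Windows Server 2012 R2"))  # Dynamic
```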

Next he creates a logical switch and adds the new uplink port profile (meaning the switch will use that NIC team configuration). He then configures the available QoS policies (virtual ports) for the virtual switches that will be created.

Now he creates a virtual switch on a host: New Logical Switch, select the NIC, and join it to the uplink port profile. Then add a second NIC and repeat; this teams the NICs. You can also add virtual network adapters here if you want to create converged networks. If your default physical management NIC is going into the NIC team, make sure one of the virtual adapters is marked for VMM management.

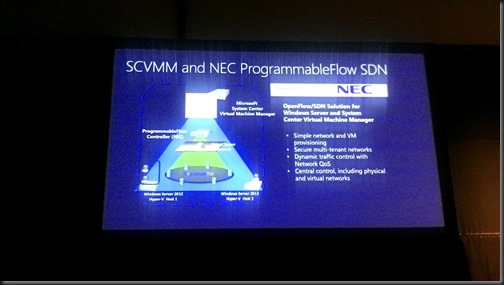
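The rule about the management NIC is easy to forget, so here is a hypothetical pre-flight check that encodes it: if the host's default management NIC becomes a team member, at least one host virtual adapter must be flagged for VMM management. The class, function, and adapter names are invented for illustration; VMM does not expose such a function.

```python
from dataclasses import dataclass


@dataclass
class HostVirtualAdapter:
    name: str
    vmm_management: bool = False  # marked as the adapter VMM uses for host management


def converged_switch_warnings(team_members: list[str],
                              adapters: list[HostVirtualAdapter],
                              management_nic: str) -> list[str]:
    """Warnings for a converged logical switch deployment on one host."""
    warnings = []
    # Teaming the management NIC moves management traffic onto a virtual adapter
    if management_nic in team_members and not any(a.vmm_management for a in adapters):
        warnings.append(
            f"{management_nic} is being teamed but no virtual adapter is marked for VMM management"
        )
    return warnings


print(converged_switch_warnings(["NIC1", "NIC2"], [HostVirtualAdapter("LiveMigration")], "NIC1"))
# ['NIC1 is being teamed but no virtual adapter is marked for VMM management']
```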

External Isolation

This is a feature you can implement with a forwarding extension to the virtual switch.

He demos the NEC PF1000 ProgrammableFlow OpenFlow forwarding extension, creating the objects above after first creating a VLAN.

Then a demo of the Cisco Nexus 1000V, which is now available for download or sale depending on the edition.

Forwarding Extensions in VMM 2012 R2

HNV and forwarding extensions can coexist in WS2012 R2. You can also enable network virtualization in the extension.

And that’s the end of part 1. You can find part 2 here.