When you are done reading this post, see the update that I added for SMB Live Migration on Windows Server 2012 R2 Hyper-V.

Unless you’ve been hiding under a rock for the last 18 months, you probably know that Windows Server 2012 (WS2012) Hyper-V (and IIS and SQL Server) supports storing content (such as virtual machines) on SMB 3.0 (WS2012) file servers (and scale-out file server active/active clusters). The performance of this stuff goes from matching/slightly beating iSCSI on 1 GbE, to crushing Fiber Channel on 10 GbE or faster.

Big pieces of this design are SMB Multichannel (think simple, configuration-free & dynamic MPIO for SMB traffic) and SMB Direct (RDMA – low latency and CPU impact with non-TCP SMB 3.0 traffic). How does one network this design? RDMA is the driving force in the design. I’ve talked to a lot of people about this topic over the last year. They normally overthink the design, looking for solutions to problems that don’t exist. In my core market, I don’t expect lots of RDMA and InfiniBand NICs to appear. But I thought I’d post how I might do a network design. iWARP was in my head for this because I’m hoping I can pitch the idea for my lab at the office.

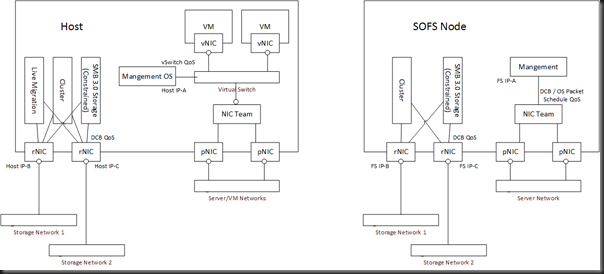

On the left we have 1 or more Hyper-V hosts. There are up to 64 nodes in a cluster, and potentially lots of clusters connecting to a single SOFS – not necessarily 64 nodes in each!

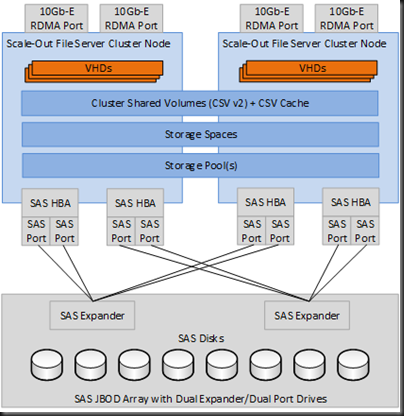

On the right, we have between 2 and 8 file servers that make up a Scale-Out File Server (SOFS) cluster with SAS attached (SAN or JBOD/Storage Spaces) or Fiber Channel storage. More NICs would be required for iSCSI storage for the SOFS, probably using physical NICs with MPIO.

There are 3 networks in the design:

- The Server/VM networks. They might be flat, but in this kind of design I’d expect to see some VLANs. Hyper-V Network Virtualization might be used for the VM Networks.

- Storage Network 1. This is one isolated and non-routed subnet, primarily for storage traffic. It will also be used for Live Migration and Cluster traffic. It’s 10 GbE or faster and it’s already isolated so it makes sense to me to use it.

- Storage Network 2. This is a second isolated and non-routed subnet. It serves the same function as Storage Network 1.

Why 2 storage networks, ideally on 2 different switches? Two reasons:

- SMB Multichannel: It requires each multichannel NIC to be on a different subnet when connecting to a clustered file server, which includes the SOFS role.

- Reliable cluster communications: I have 2 networks for my cluster communications traffic, servicing my cluster design need for a reliable heartbeat.
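The two-subnet rule is easy to sanity check before anything is cabled. Here is a minimal sketch in Python, assuming a made-up addressing plan: the subnets, node names, and IP addresses below are all hypothetical, and the only rule checked is that each node has exactly one rNIC on each storage network.

```python
import ipaddress

# Hypothetical addressing plan: subnets and node names are illustrative only.
STORAGE_NETWORKS = {
    "Storage1": ipaddress.ip_network("172.16.1.0/24"),
    "Storage2": ipaddress.ip_network("172.16.2.0/24"),
}

RNIC_PLAN = {
    "host1": ["172.16.1.11", "172.16.2.11"],
    "sofs1": ["172.16.1.21", "172.16.2.21"],
}


def storage_network_of(address):
    """Return the name of the storage network that contains address, or None."""
    ip = ipaddress.ip_address(address)
    for name, network in STORAGE_NETWORKS.items():
        if ip in network:
            return name
    return None


def check_node(rnic_addresses):
    """Return a list of problems for one node's rNICs (an empty list means OK)."""
    problems = []
    seen = {}  # storage network name -> first rNIC address found on it
    for address in rnic_addresses:
        name = storage_network_of(address)
        if name is None:
            problems.append(f"{address} is not on a storage network")
        elif name in seen:
            problems.append(f"{address} and {seen[name]} share {name}")
        else:
            seen[name] = address
    # Every storage network should have one rNIC from this node.
    for name in sorted(set(STORAGE_NETWORKS) - set(seen)):
        problems.append(f"no rNIC on {name}")
    return problems


def check_plan(plan):
    """Check every node in the plan and return {node: [problems]}."""
    return {node: check_node(addresses) for node, addresses in plan.items()}


if __name__ == "__main__":
    for node, problems in check_plan(RNIC_PLAN).items():
        print(node, "OK" if not problems else problems)
```

A node with both rNICs on the same subnet would be reported as sharing a network and as having no rNIC on the other one.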

The NICs used for the SMB/cluster traffic are NOT teamed. Teaming does not work with RDMA. Each physical rNIC has its own IP address for the relevant (isolated and non-routed) storage subnet. These NICs do not go through the virtual switch so the easy per-vNIC QoS approach I’ve mostly talked about is not applicable. Note that RDMA is not TCP. This means that when an SMB connection streams data, the OS packet scheduler cannot see it. That rules out OS Packet Scheduler QoS rules. Instead, you will need rNICs that support Datacenter Bridging (DCB) and your switches must also support DCB. You basically create QoS rules on a per-protocol basis and push them down to the NICs to allow the hardware (which sees all traffic) to apply QoS and SLAs. This also has a side effect of less CPU utilization.
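To get a feel for what per-protocol rules mean on a converged rNIC, here is a rough sketch of the arithmetic in Python. The traffic classes and percentages are assumptions made up for illustration, not recommended values, and the link speed is assumed to be 10 GbE. The percentages are treated as minimum shares that apply under contention, which is how DCB bandwidth allocation is normally described; a class can use more when the others are idle.

```python
LINK_GBPS = 10  # assumed rNIC link speed

# Hypothetical minimum-bandwidth shares (percent) per traffic class.
MIN_BANDWIDTH_PERCENT = {
    "SMB Direct (storage)": 50,
    "Live Migration": 30,
    "Cluster": 10,
    "Everything else": 10,
}


def guaranteed_gbps(shares, link_gbps):
    """Convert percentage shares into the minimum Gbps each class gets under contention."""
    total = sum(shares.values())
    if total != 100:
        raise ValueError(f"shares add up to {total}%, expected 100%")
    return {name: link_gbps * percent / 100 for name, percent in shares.items()}


if __name__ == "__main__":
    for name, gbps in guaranteed_gbps(MIN_BANDWIDTH_PERCENT, LINK_GBPS).items():
        print(f"{name}: at least {gbps} Gbps when the link is congested")
```

With these example numbers, storage traffic is guaranteed at least 5 Gbps on each rNIC even while a Live Migration is running flat out.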

Note: SMB traffic is restricted to the rNICs by using the constraint option.
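As a sketch of what that constraint might look like, the Python helper below builds the PowerShell line to run on each host. It assumes the `New-SmbMultichannelConstraint` cmdlet with its `-ServerName` and `-InterfaceAlias` parameters from the WS2012 SMB module (check `Get-Help` on your build before relying on it); the file server name `SOFS1` and the interface aliases `rNIC1` and `rNIC2` are hypothetical.

```python
def constraint_command(server_name, interface_aliases):
    """Build a PowerShell line restricting SMB traffic to one file server to the given NICs."""
    if not interface_aliases:
        raise ValueError("at least one interface alias is required")
    # Quote each alias because interface names often contain spaces.
    aliases = ", ".join(f'"{alias}"' for alias in interface_aliases)
    return (
        f'New-SmbMultichannelConstraint -ServerName "{server_name}" '
        f"-InterfaceAlias {aliases}"
    )


if __name__ == "__main__":
    # Hypothetical SOFS name and rNIC aliases.
    print(constraint_command("SOFS1", ["rNIC1", "rNIC2"]))
```

The constraint is defined on the SMB client side, so in this design it would be created on the Hyper-V hosts, once per file server name they connect to.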

In the host(s), the management traffic does not go through the rNICs – they are isolated and non-routed. Instead, the Management OS traffic (monitoring, configuration, remote desktop, domain membership, etc) all goes through the virtual switch using a virtual NIC. Virtual NIC QoS rules are applied by the virtual switch.

In the SOFS cluster nodes, management traffic will go through a traditional (WS2012) NIC team. You probably should apply per-protocol QoS rules on the management OS NIC for things like remote management, RDP, monitoring, etc. OS Packet Scheduler rules will do because you’re not using RDMA on these NICs and this is the cheapest option. Using DCB rules here can be done but it requires end-to-end (NIC, switch, switch, etc, NIC) DCB support to work.

What about backup traffic? I can see a number of options. Remember: with SMB 3.0 traffic, the agent on the hosts causes VSS to create a coordinated VSS snapshot, and the backup server retrieves backup traffic from a permission controlled (Backup Operators) hidden share on the file server or SOFS (yes, your backup server will need to understand this).

- Dual/Triple Homed Backup Server: The backup server will be connected to the server/VM networks. It will also be connected to one or both of the storage networks, depending on how much network resilience you need for backup, and what your backup product can do. One or more QoS (DCB) rules will be needed for the backup protocol(s).

- A dedicated backup NIC (team): A single physical NIC (or a NIC team) will be used for backup traffic on the hosts and SOFS nodes. No QoS rules are required for backup traffic because it is alone on the subnet.

- A backup VLAN: Create a backup traffic VLAN and trunk it through to a second vNIC (bound to the VLAN) in the hosts via the virtual switch. Apply QoS on this vNIC. In the case of the SOFS nodes, create a new team interface and bind it to the backup VLAN. Apply OS Packet Scheduler rules on the SOFS nodes for management and backup protocols.

With this design you get all the connectivity, isolation, and network path fault tolerance that you might have needed with 8 NICs plus Fiber Channel/SAS HBAs, but with superior storage performance. QoS is applied using DCB to guarantee minimum levels of service for the protocols over the rNICs.

In reality, it’s actually a simple design. I think people overthink it, looking for a NIC team or protocol connection process for the rNICs. None of that is actually needed. You have 2 isolated networks, and SMB Multichannel figures it out for itself (it makes MPIO look silly, in my opinion).

The networking chapter of Windows Server 2012 Hyper-V Installation And Configuration Guide goes from the basics through to the advanced steps of understanding these concepts and implementing them: