I arrived about an hour late for this event because I had to present at a cloud computing breakfast event in the city. Writing until midnight, doing work until 1am and getting up at 05:30 has left me a bit numb so my notes today could be a mess.

The ash cloud has caused last minute havoc with the speakers but the MS Ireland guys have done a good job adjusting to it.

System Center v.Next

I arrived in time for Jeff Wettlaufer’s session.

The VMM v.Next console is open with an overview of a “datacenter”, giving a glimpse of what is going on. We see the library and shares, which are much better laid out. It includes Server App-V packages, templates, virtual hard disks, MSDeploy packages (IIS applications), SQL DAC packages, PowerShell, ISO and answer files.

VMM v.Next

The VMM model is shown next. We can create a template for a service. This includes virtual templates for virtual machines: database, application, web, etc. The web VM is shown. We can see the MSDeploy package from the library is contained within the template for this VM. The web tier in the model can be scaled out automatically using a control for the model. The initial instance count, maximum and minimum instance counts can be set. The binding to network cards can be set too.
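To make the instance count idea concrete, here is a minimal sketch of a tier with an initial, minimum and maximum instance count. The class and field names are my own invention for illustration; this is not the VMM v.Next object model or API.

```python
from dataclasses import dataclass


@dataclass
class TierTemplate:
    """Hypothetical stand-in for one tier (e.g. web) of a service template."""
    name: str
    initial_instances: int
    min_instances: int
    max_instances: int

    def __post_init__(self):
        # The initial count only makes sense inside the allowed range.
        if not (self.min_instances <= self.initial_instances <= self.max_instances):
            raise ValueError("initial count must lie between min and max")

    def clamp(self, requested: int) -> int:
        """Return the instance count the tier would be allowed to run."""
        return max(self.min_instances, min(requested, self.max_instances))


web = TierTemplate("web", initial_instances=2, min_instances=1, max_instances=5)
print(web.clamp(8))  # a request above the ceiling is held at the maximum
print(web.clamp(0))  # a request below the floor is held at the minimum
```

Whatever triggers the scale out, the minimum and maximum act as guard rails around it.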

An instance of this model is deployed: lots of VM’s are included in the model. One deployment = lots of new VM’s. We now see the software update mechanism. The compliant and non-compliant running VHD’s are identified. Normally we’d do maintenance windows, patching and reboots. With this approach we can remediate the running VM’s VHD’s. Because these are virtualised services, they can be migrated onto up-to-date VHD’s and the old VHD’s are remediated. The service stays running and there are no reboots or maintenance windows.

This makes private cloud computing even better. We already can have very high uptimes with current technology. The only blips are usually in upgrades. This eliminates that. The model approach also optimises the

Operations Manager 2007 R2 Azure Management Pack

You can use an onsite installation of OpsMgr to manage Azure hosted applications. This is apparently out at the end of 2010. We get a demo starting with a model, including web/database services, synthetic transactions and the Azure management pack containing Azure objects (a web front end that fronts the on-premises databases). We see the usual alert and troubleshooting stuff from OpsMgr. Now we see that tasks for Azure are integrated. This includes the addition of a new web role instance on Azure. In theory this could be automated as a response to underperforming services (use synthetic transactions) but it would need to be tested and monitored to avoid crazy responses that would cost a fortune.
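The worry about runaway automation can be sketched in a few lines. This is only an illustration of the guard logic (a threshold, a hard cap and a cooldown); the class, the parameter names and the numbers are assumptions of mine, and nothing here calls OpsMgr or Azure.

```python
from dataclasses import dataclass


@dataclass
class ScaleGuard:
    """Hypothetical guard around an 'add a web role instance' task."""
    max_instances: int      # hard cap so the bill cannot run away
    cooldown_s: float       # minimum seconds between two scale-outs
    threshold_ms: float     # synthetic transaction time that counts as slow
    last_scale_at: float = float("-inf")

    def decide(self, now: float, response_ms: float, instances: int) -> int:
        """Return the new instance count (unchanged if scaling is not allowed)."""
        if response_ms <= self.threshold_ms:
            return instances            # service is healthy
        if instances >= self.max_instances:
            return instances            # cap reached, a human should look at it
        if now - self.last_scale_at < self.cooldown_s:
            return instances            # give the last new instance time to help
        self.last_scale_at = now
        return instances + 1


guard = ScaleGuard(max_instances=4, cooldown_s=600, threshold_ms=2000)
count = 2
for now in (0, 60, 700, 1400):
    count = guard.decide(now, response_ms=3500, instances=count)
    print(now, count)
```

Even with a slow response at every check, the count only grows once per cooldown window and stops at the cap.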

Almost everything in the System Center world has a new release or refresh in 2011. It will be a BIG year. I suspect MMS 2011 will be nuts.

It looks like I missed 4 of the demos :-( That’s work for ya!

Configuration Manager v.Next– Jeff Wettlaufer

Woohoo! I didn’t miss it.

The focus on this release is user centric client management. The typical user profile has changed. Kids are entering the workplace who are IT savvy. The current generation knows what they want (a lot of the time). MS wants to empower them. Users should self-provision, connect from anywhere, access devices and services from anywhere.

There should be a unified systems management solution. Do you want point solutions for software, auditing, patching, anti-malware, etc.?

Control is always important. Whether it is compliance for licensing, auditing, policy enforcement, etc. Business assets must be available, reliable and secure. Automation must be employed and expanded upon to remove the human element – more efficient, allow better use of time to focus on projects, less mistake prone.

ConfigMgr 2007 does a lot of this. However, it didn’t do the last step: remediating non-compliance with policy (software, security, etc).

Notes: 75% of American and 80% of Japanese workers will be mobile in 2011. The IT Pro needs to change: be more generalized and have a variety of skills capable of changing quickly. IT in the business has “consumerized”: they are dictating what they want or need rather than IT doing that. I think many admins in small/medium organizations or those dealing with executives will say that there has always been some aspect to that. The new profile of user will cause this to grow.

System Center ConfigMgr is moving towards answering these questions. The end user will be empowered to be able to self-provision. Right now, the 2007 release translates a user to a device, and s/w distribution is a glorified script. It is also very fire and forget, e.g. an uninstalled application won’t be automatically reinstalled so there isn’t a policy approach.

The v.Next method changes this. It will understand the difference between different types of device the user may have. It is more flexible. It is a policy management solution, e.g. an uninstalled application will be automatically reinstalled because it is policy defined/remediated.

Software distribution in v.Next: relationships will be maintained between the user and devices. User assigned software will be installed only if the user is the primary user of the device – save on licensing and bandwidth. S/W can be pre-deployed to the primary devices via WOL, off-peak hours, etc.

Application management is changing too. Administrators will manage applications, not scripts. The deployments are state based, i.e. ConfigMgr knows if the application is present or not and can re-install it. Requirements for an application can be assessed at installation time to see if the application should even be installed at all. Dependencies with other applications can be assessed automatically too. All of this will simplify the application management process (collections) and troubleshooting of failed installations.
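Here is how I picture the state-based approach: the installer works out a plan from the current state, the requirements and the dependencies, instead of blindly running a script. This is a sketch under my own assumptions; the catalog layout and the function name are hypothetical and have nothing to do with the real ConfigMgr object model.

```python
class RequirementNotMet(Exception):
    """Raised when a device fails an application's requirement rule."""


def plan_install(app, catalog, installed, device):
    """Return the ordered list of applications to install so that `app` is present.

    An empty list means there is nothing to do: either the application is
    already installed or the device does not meet a requirement.
    """
    plan = []

    def visit(name, path):
        if name in installed or name in plan:
            return                                  # state check: already there
        if name in path:
            raise ValueError(f"dependency cycle at {name}")
        spec = catalog[name]
        if not spec["requirement"](device):
            raise RequirementNotMet(name)
        for dep in spec["depends_on"]:
            visit(dep, path | {name})               # dependencies go in first
        plan.append(name)

    try:
        visit(app, frozenset())
    except RequirementNotMet:
        return []
    return plan


# Made-up catalog: a reader application that depends on a runtime.
catalog = {
    "runtime": {"requirement": lambda d: d["ram_gb"] >= 1, "depends_on": []},
    "reader": {"requirement": lambda d: d["os"] == "Windows 7", "depends_on": ["runtime"]},
}
pc = {"os": "Windows 7", "ram_gb": 4}
print(plan_install("reader", catalog, installed=set(), device=pc))
print(plan_install("reader", catalog, installed={"runtime"}, device=pc))
```

Running the same evaluation again after a user uninstalls the application produces a fresh plan, which is the difference from fire and forget.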

For the end user, there is a web based application catalog. A user can easily find and install applications. A workflow for installation/license approval can back this up. S/W will install immediately after selection/approval – this uses Silverlight to trigger the agent. A user can define what their business hours are in the client to control installations or pre-deployments. They can also manage things like automated reboots – no one likes a mandated reboot (after 5 minutes) while doing something important, e.g. a live meeting, demo, presentation, etc. This is coming in beta2: there will be a pre-flight check feature where you can see what will happen with an application if you were to target it at a collection. You then can do some pre-emptive work to avoid any failures. I LIKE that!

We now see a demo of a software package/deployment. An installer package for Adobe Reader is imported. This isn’t alien from what we know now. There is a tagging mechanism for searches. We can define the intent: install for user or install for system. You can add deployment types for an existing application. We see how an App-V manifest is added to the existing application which previously contained _just_ an MSI package. Now you can do an install or an App-V deployment (stream and/or complete deployment) with the one application in ConfigMgr. So we now have 2 deployment types (packages) in a single application. This makes management much easier.

We see that the deployment of the application can be assigned to a user and will only be installed to their primary device. System requirements for the application can be included in the package.

A deployment (used to be called an advertisement) is started and targeted at a collection. The distribution points are selected. Now you can specify an intent, e.g. make the application available to the user or push it. The usual stuff like scheduling, OpsMgr integration are all present.

SQL is being leveraged more and more. A lot of the file system and copy operations are going away and being replaced with SQL object replication. It also sounds like the ConfigMgr server components might be 64-bit only.

The MMC GUI is being dropped. The new UI is more intuitive, better laid out and faster. It will filter content based on role/permissions in ConfigMgr. This will make usage of the console easier. Wunderbars finally make an appearance in ConfigMgr to allow different views to be presented: Administration, Software Library, Assets and Compliance, and Monitoring.

Role Based Administration: The MMC did cause havoc with this. A security role can be configured. This moves in the same direction as VMM and OpsMgr. 13 roles are built into the beta1 build. You can bound the rights and access in ConfigMgr, e.g. application administrator, asset analyst, mobile device analyst, read only roles, etc. We are warned that this might change before RTM. Custom roles can be created. When a role logs into the console they will see only what is relevant (permitted). Current ConfigMgr sites did this by tweaking files on site servers which is totally not supported and caused lots of PSS tickets.

Primary sites are needed only for scale out. The current architecture can be very complex in a large network. Content distribution can be done with secondary sites, DP’s (throttling/scheduling), BranchCache and Branch Distribution Points. Client agent settings are configurable in a collection rather than in a primary site.

Note: we see zero hands go up when we are asked if anyone is using BranchCache. That’s not surprising because of the licensing requirements, the limit of not having upload efficiencies (compared to network appliance solutions) and limited number of supported solutions.

Jeff says that client traffic to cross-WAN ConfigMgr servers dropped by 92% when BranchCache was employed – the distribution point can be BITS (HTTPS) enabled.

Distribution point management has been simplified with groups. Content can be added based on group membership. Content can be staged to DP’s, as well as scheduled and throttled.

SQL investments mean that the inbox is gone in v.Next. Support issue #1 was the inbox. There are SQL methods for inter-site communications. SQL Reporting Services is going to be used. SQL skills will be required. MS needs to invest in training people on this.

ConfigMgr client health features have been expanded. There is configurable monitoring/remediation for client prerequisites, client reinstallation, windows services dependencies, WMI, etc. There are in-console alerts when certain numbers of unhealthy clients are detected – configurable threshold.
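The threshold alert is simple to picture. In this sketch I assume the threshold is a plain count of unhealthy clients (it could just as well be a percentage in the product), and the check names are made up.

```python
def unhealthy_clients(results):
    """results maps a client name to the list of health checks it failed."""
    return sorted(name for name, failed in results.items() if failed)


def should_alert(results, threshold):
    """Alert once the number of unhealthy clients reaches the threshold."""
    return len(unhealthy_clients(results)) >= threshold


# Made-up check results for three clients.
results = {
    "PC-001": [],
    "PC-002": ["WMI"],
    "PC-003": ["client service stopped", "WMI"],
}
print(unhealthy_clients(results))
print(should_alert(results, threshold=2))
```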

There is a common administration experience for mobile device management – CAB files can be added to ConfigMgr applications (not just App-V and MSI/installer). Cross-platform device support (Nokia Symbian) is being added. User centric application and configuration management will be in it. You can monitor and remediate out of date devices.

Software Updates introduces a group which contains collections. You can target updates to a group. This in turn targets the contained collections. Auto-deployment rules are being introduced. Some want to do Patch Tuesday updates automatically. You DEFINITELY need to auto-approve anti-virus/malware updates (Microsoft Forefront updates flow through Windows Update). Auto-approved updates will automatically flow out to managed clients. This has a new interface but it’s a similar idea to what you get with WSUS.

Operating System Deployment is a BIG feature for MS in this product. We now get offline servicing of images. It supports component based servicing and uses the approved updates. This means that newly deployed PC’s will be up to date when it comes to updates. There is now a hierarchy-wide boot media (we don’t need one per site, which saves the time needed to create and manage it). There is an unattended boot media mode with no need to press <Next>. We can use PXE hooks to automatically select a task sequence so we don’t need to select one from a list. USMT 4.0 will have UI integration and support hard-link, offline and shadow copy features. In 2007 SP2, these features are supported but hidden behind the GUI.

Remote Control is back. Someone wants it. I don’t see why – the feature is built into Windows and can be controlled by GPO.

Settings Management (aka Desired Configuration Management) is where you can define a policy for settings and identify non-compliance. V.Next introduces automated remediation of this via the GUI. This is an option so it is not required: monitor versus enforce. Audit tracking (who changed what) is added.
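The monitor versus enforce choice boils down to whether drift is only reported or also corrected. A minimal sketch, with setting names and a function that I made up for illustration (this is not the DCM engine or its API):

```python
def evaluate(desired, actual, enforce=False):
    """Compare actual settings against policy.

    Returns the drift as {setting: value found}. In enforce mode the
    drifted settings in `actual` are also reset to the desired values.
    """
    drift = {key: actual.get(key) for key, want in desired.items()
             if actual.get(key) != want}
    if enforce:
        for key in drift:
            actual[key] = desired[key]
    return drift


policy = {"firewall": "on", "screensaver_timeout": 10}
machine = {"firewall": "off", "screensaver_timeout": 10}

print(evaluate(policy, machine))                # monitor: report only
print(evaluate(policy, machine, enforce=True))  # enforce: report and fix
print(evaluate(policy, machine))                # nothing left to report
```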

Readiness Tips: Get to 64-bit OS’s ASAP. Start using BranchCache. Plan on flattening the hierarchy. Use W2008 64-bit or later. Start learning SQL replication. Use AD sites for site boundaries and UNC paths for content.

A 500-day time-bombed VHD will be made available by MS in a few weeks. Some hands-on labs will be made available soon after in TechNet Online.

Can you see why I reckon ConfigMgr is the biggest and most complex of the MS products?

Operations Manager

Irish OpsMgr MVP Paul Keely did this session. I missed the first half hour because I was talking to Jeff Wettlaufer and Ryan O’Hara from Redmond. When I came back I saw that Paul was talking about the updates that have been made available for OpsMgr 2007 R2. The demo being shown was the SLA Dashboard for OpsMgr.

Management pack authoring: “you need to have a PhD to author a management pack”. This is still so true.

Using a Visio/OpsMgr connector you can load a distributed application into Visio. You can then export this into SharePoint where the DA can be viewed on a site.

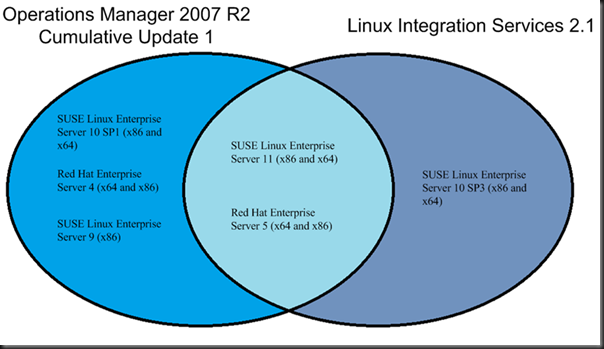

KB979490 Cumulative Update 2 includes support for SLES 11 32-bit and 64-bit and zones for all versions of Solaris.

V.Next: MS have licensed “EMC Smarts” for network monitoring. An agent can figure out what switch it is on and then figure out the network. This means OpsMgr can figure out the entire network infrastructure and detect when a component fails.

Management packs are changing. A new delay and correlation process will alert you about the root cause of an issue rather than alert you about every component that has failed because of the root cause. This makes for a better informed and clearer issue notification.
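The correlation idea can be shown with a toy dependency map: only report the failed components whose own dependencies are still healthy. This is my own simplification (it ignores the delay window entirely) and the component names are invented; it is not how OpsMgr v.Next implements it.

```python
def root_causes(failed, depends_on):
    """Return failed components that have no failed dependency of their own.

    failed:     set of component names currently in a failed state
    depends_on: dict mapping a component to the components it relies on
    """
    return sorted(
        component for component in failed
        if not any(dep in failed for dep in depends_on.get(component, []))
    )


# Made-up chain: web site -> VM -> host -> switch.
depends_on = {
    "web site": ["vm"],
    "vm": ["host"],
    "host": ["switch"],
    "switch": [],
}
print(root_causes({"web site", "vm", "host", "switch"}, depends_on))
print(root_causes({"web site"}, depends_on))
```

Four failed components produce one alert for the switch, while a web site that fails on its own is still reported.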

Opalis

This is a recent System Center acquisition for automated work flows. The speaker was to fly in this morning but the ash cloud caused airports to close. MS Ireland have attempted to set up a Live Meeting where the speaker can present to us from the UK.

The speaker is Greg Charman, who appears in a tiny window in the top left of the projector screen.

We have a number of IT silos: SQL, virtualisation, servers, etc. Applications or processes tend to cross those silos, e.g. SQL is used by System Center. Server management relies on virtualization. Server management and virtualization both use System Center.

Opalis provides automation, orchestration and integration between System Center products. Currently (because it was recently acquired) it also plugs into 3rd party products. Maybe it will and maybe it won’t continue to support 3rd party products in future releases.

Opalis provides runbook/process automation. You remove human action from the process to improve the speed and reliability. It also allows processes to cross the IT silos.

In the architecture, there is an Integrated Data Bus. Anything that can connect to this can interact with other services (in theory). Lots of things are shown: Microsoft, BMC, HP, CA, IBM, EMC, and Custom Applications.

A typical process today: OpsMgr raises an alert. Manually investigate if it is valid. Update a service desk ticket. Figure out what broke and test solutions. Maybe include a 3rd party service provider. All of these tasks take time and the issue goes on and on.

Opalis: sees the alert and verifies the fault. It updates the issue. It does some diagnostics. It passes the results back to the service desk. It might fix the problem and close the ticket. At the least it could provide lots of information for a manual remediation.
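The automated flow above could be sketched as a small runbook function. The step functions are passed in as plain callables because I have no idea what the real Opalis activities look like; everything here, including closing the ticket when the fault cannot be verified, is an assumption for illustration.

```python
def run_runbook(alert, verify, diagnose, remediate):
    """Walk an alert through verify -> diagnose -> remediate and log each step."""
    ticket = {"alert": alert, "log": [], "status": "open"}

    if not verify(alert):
        ticket["log"].append("fault not verified")
        ticket["status"] = "closed"       # assumption: false alarms are closed
        return ticket
    ticket["log"].append("fault verified")

    findings = diagnose(alert)
    ticket["log"].append(f"diagnostics: {findings}")

    if remediate(alert, findings):
        ticket["log"].append("remediated automatically")
        ticket["status"] = "closed"
    else:
        # At the least, the service desk gets the diagnostics for a manual fix.
        ticket["status"] = "needs manual remediation"
    return ticket


# Stub steps standing in for real integrations.
ticket = run_runbook(
    "IIS service stopped on WEB01",
    verify=lambda alert: True,
    diagnose=lambda alert: "service stopped",
    remediate=lambda alert, findings: findings == "service stopped",
)
print(ticket["status"])
print(ticket["log"])
```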

Opalis is used for:

- Incident management: orchestrate the troubleshooting. Maybe identify the cause and remediate the issue.

- Virtual machine life cycle management: Automate provisioning and resource allocation. Extend virtual machine management to the cloud. Control VM sprawl.

- Change and control management: This integrates ConfigMgr and VMM.

The integration for some products will be released later in 2010. The VMM and ConfigMgr integrations are in the roadmap, along with a bunch of other MS ones.

System Center Essentials 2010

This is presented by Wilbour Craddock. As most companies in Ireland are small/medium, SCE 2010 should be a natural fit for a lot of them. Remember that it is a little crippled compared to the full individual products. It can manage up to 50 servers (physical or virtual) and up to 500 clients.

- Monitor server infrastructure using the OpsMgr components.

- Manage virtual machines using the VMM 2008 R2 components. This includes P2V and PRO tips.

- Manage s/w and updates using the ConfigMgr components.

The “SCE 2010 Plus” SKU adds DPM 2010 to the solution so you can back up your systems.

Inventorying: Runs every 22 hours and includes 60+ h/w and s/w attributes. Visibility is through reports. 180 reports available. New in 2010: Virtualization candidates.

Monitoring includes network management with SNMP v1 and SNMP v2. It uses the same management packs as OpsMgr. Third party and custom ones can be added. The product will let you know when there is a new MP in the MS catalog.

Only the evaluation is available as an RTM right now. The full RTM and pricing for it will be available in June.

Patching is done with WSUS and this is integrated with the solution. Auto-approval deadlines are available. It can synch with the Windows catalogue multiple times in a day. There is a simple view for needed updates.

SCE can deploy software but it cannot deploy operating systems. You can use the free WDS or MDT to do this. Note that a new version of MDT seems to be on the way. The software deployment process is much simpler than what you get with ConfigMgr, thanks to the reduced size of the network that it supports. It assumes a much simpler network.

At first glimpse of the feature list, it appears to include most of the VMM features, but it may not be as good as VMM 2008 R2. It cannot manage a VMware infrastructure but it can do V2V. Host configuration might be better than VMM. P2V is different than in VMM. The Hyper-V console is still going to be regularly used, e.g. you can’t manage Hyper-V networking in SCE 2010. Enabling a physical machine to run Hyper-V is as simple as clicking “Designate as a host”. PowerShell scripts are not revealed in the GUI like in VMM but you can still use PowerShell scripts.

Software deployment now includes filtering, e.g. CPU type X and Operating System Y. You can modify the properties of existing packages.

The setup is simple: 10 screens. Configuration is driven by a wizard.

Requirements: W2K8 or W2K8 R2 64-bit only. 2.8GHz, 4GB RAM, 150GB disk recommended. It can manage XP, W2003, and later.

The server with DPM will be around €800. Each managed device (desktop or server) will require a management license. You can purchase management licenses to include DPM support or not. This means you can back up your servers, maybe a few PC’s and choose to use the cheaper management licenses for the rest of the PC’s.

Intune

Will talks about this. Dublin/Ireland will be included in phase II of the beta. It provides malware protection and asset assessment from the cloud. It will be used in the smaller organizations that are too small for SCE 2010.

That was the end of the event. It was an enjoyable day and a good taster of what happened at MMS.